Chapter 1: Learn how pervasive consumer concerns about data privacy, unethical ad-driven business models, and the imbalance of power in digital interactions highlight the need for trust-building through transparency and regulation.

Chapter 8: Learn how AI’s rapid advancement and widespread adoption present both opportunities and challenges, requiring trust and ethical implementation for responsible deployment. Key concerns include privacy, accountability, transparency, bias, and regulatory adaptation, emphasizing the need for robust governance frameworks, explainable AI, and stakeholder trust to ensure AI’s positive societal impact.

Trust has always been a cornerstone of human interaction, enabling individuals and organizations to navigate uncertainty and to collaborate. In the digital age, trust has undergone significant transformation, shaped by emerging technologies and societal dynamics.

This chapter traces the evolution of trust from consumer trust in interpersonal relationships to trust in artificial intelligence (AI). It also examines the complex drivers of trust and the strategies for building and maintaining it.

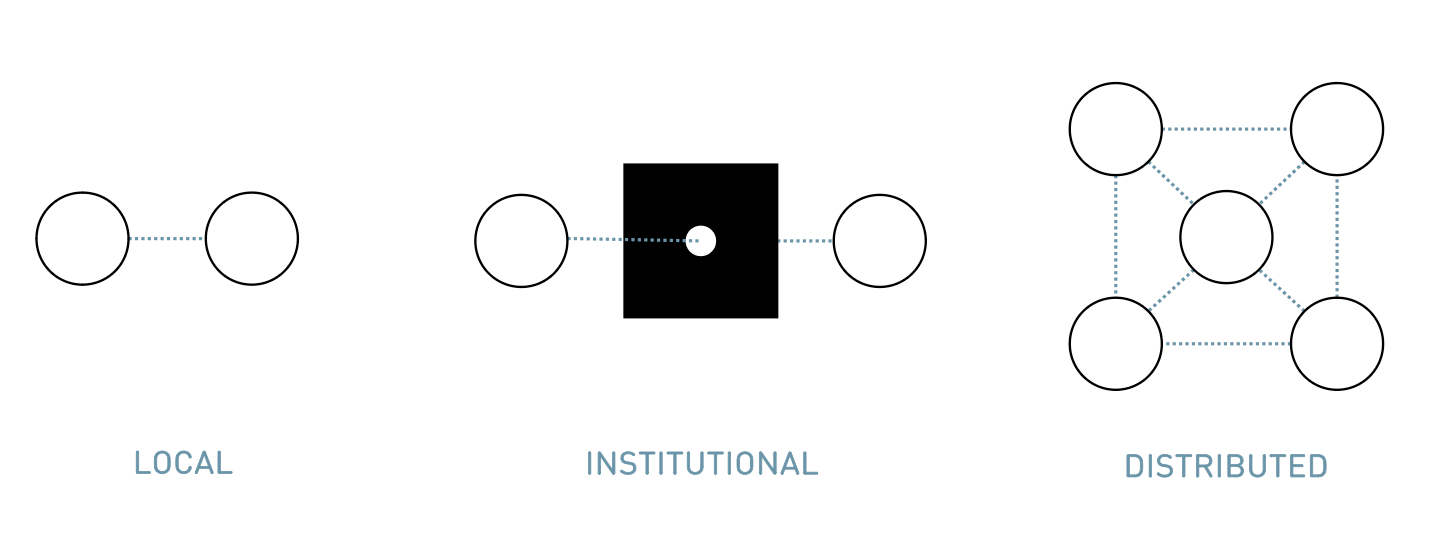

The Evolution of Trust: From Local to Distributed Models

Rachel Botsman, a leading thinker on the concept of trust, outlines its evolution through three major stages:

Local Trust: Historically, trust was built through direct, personal relationships. Communities were small and interactions were face-to-face, enabling individuals to assess trustworthiness based on firsthand experience and shared norms. This form of trust relied heavily on reputation within tightly knit social networks.

Institutional Trust: With the rise of larger societies and organizations, trust shifted to institutions such as banks, governments, and corporations. These entities acted as intermediaries, providing assurances of reliability through standardized processes, regulations, and certifications. This phase allowed trust to scale but often introduced opacity, as individuals placed trust in systems they did not fully understand or control.

Distributed Trust: Today, technology is driving a new era of distributed trust. Technologies such as blockchain, peer-to-peer networks, and other decentralized systems allow trust to be established without traditional intermediaries. For example, sharing economy platforms like Airbnb and Uber rely on user-generated reviews and algorithmic ratings to foster trust among strangers. Similarly, blockchain technology reduces the need for central authorities by enabling transparent and secure peer-to-peer transactions.

This evolution highlights a shift from hierarchical, top-down models of trust to more decentralized and participatory frameworks. Distributed trust leverages technology to empower individuals, but it also introduces new complexities, such as algorithmic biases and the challenge of ensuring transparency in automated systems.

Why trust in institutions is collapsing

The collapse of institutional trust is a complex, multifaceted phenomenon driven by interconnected systemic challenges. The growing Data Collection Dilemma erodes public confidence by exposing widespread surveillance practices that compromise individual privacy and autonomy (Zuboff, 2019).

Simultaneously, the Inequality of Accountability reflects a growing perception that powerful institutions and elites operate under different legal and ethical standards from those applied to ordinary citizens. This perception undermines fundamental principles of social justice and democratic transparency (Piketty, 2020).

Digital technologies have dramatically accelerated the emergence of Segregated Echo Chambers. In these polarized information environments, existing beliefs are reinforced and alternative perspectives are systematically excluded, further fragmenting societal trust (Sunstein, 2017). The technological infrastructure of social media platforms actively encourages algorithmic segregation, creating epistemic bubbles that make constructive dialogue increasingly difficult.

The Twilight of Elites and Authority reflects a profound crisis of institutional legitimacy, where traditional hierarchical structures struggle to maintain credibility in an era of instantaneous information exchange and decentralized knowledge production (Nichols, 2017). This systematic delegitimization is fueled by increasing transparency about institutional failures, corruption, and systemic inefficiencies.

These interconnected dynamics create a self-reinforcing cycle of institutional distrust, where reduced confidence leads to decreased institutional effectiveness, which in turn further erodes public trust. The resultant social fragmentation poses significant challenges to democratic governance, collective problem-solving, and social cohesion.

Trust in Online Business: Foundations and Challenges

The advent of e-commerce and digital platforms introduced a new paradigm of trust (Corritore et al., 2003). Virtual interfaces replaced traditional cues such as physical presence and face-to-face interaction. This shift increased reliance on what researchers term “institution-based trust” (McKnight & Chervany, 2001). This type of trust relies on systemic safeguards, such as encryption, certifications, and user reviews, to assure consumers of safety and reliability (Pavlou, 2003).

Despite these measures, challenges persist. The “privacy paradox” encapsulates the tension between users’ willingness to share data for convenience and their concerns about data misuse. Studies indicate that while consumers value personalized experiences, they remain sceptical about how their data is handled. For instance, only a minority of users trust organizations to manage sensitive information responsibly, highlighting the need for greater transparency and accountability in online transactions.

Trust in online businesses is further influenced by brand reputation, user experience, and regulatory frameworks. Companies that prioritise ethical practices and invest in customer support are better positioned to earn trust. Conversely, data breaches and opaque policies erode consumer confidence, underscoring the importance of proactive risk management. High-profile cases, such as the Equifax data breach and the Cambridge Analytica scandal involving Facebook, illustrate the long-term damage to trust caused by failures in data stewardship. These incidents show that trust is not only an enabler of business success but also a fragile resource that requires continuous cultivation.

From Interpersonal Dynamics to Technological Interactions

The concept of trust has changed significantly as it moved from interpersonal relationships to technological contexts. Mayer et al. (1995) conceptualized interpersonal trust in terms of three factors of perceived trustworthiness: ability, integrity, and benevolence. In the interpersonal domain, trust emerges from an individual’s perception of another’s competence (ability), adherence to principles (integrity), and genuine concern for others’ welfare (benevolence).

As technological systems evolved, trust research expanded to automation contexts. Hoff and Bashir (2015) identified a parallel trust framework for human-automation interaction, characterized by performance, process, and purpose. Performance relates to the system’s reliability and accuracy. Process concerns the transparency and predictability of system operations. Purpose addresses how well the goals of the automation align with human expectations.

Lee and Moray (1992) were instrumental in bridging interpersonal and automation trust concepts. They highlighted that trust in technological systems follows psychological mechanisms similar to those of interpersonal trust. This transition demonstrates how fundamental trust principles adapt to emerging technological interfaces. In automation, the transparency, explainability, and perceived fairness of the underlying algorithms and data processing methods become crucial. In interpersonal contexts, “integrity” captures moral alignment. In automation trust, “process” emphasizes users’ understanding of how the system functions and of the ethical or logical considerations behind it.

Trust in Artificial Intelligence: New Horizons

Integrating AI into various sectors, from healthcare to finance, has redefined trust dynamics (Floridi & Cowls, 2019). Unlike online businesses, where human actors are central, trust in AI revolves around trust in systems, algorithms, and data integrity. Three emergent complexity drivers characterize this transition:

Vulnerability and Control: As AI systems become more autonomous, users face heightened concerns about loss of control and unintended consequences (Zerilli et al., 2019). For example, algorithmic biases can lead to discriminatory outcomes, undermining trust in AI systems. Additionally, the opacity of AI decision-making, often referred to as the “black-box problem,” exacerbates these concerns by limiting users’ understanding of how outcomes are derived. This is particularly evident in critical sectors such as healthcare, where opaque AI diagnostics can create unease among both patients and professionals.

Cognitive Heuristics and Biases: Humans often rely on intuitive judgments (System 1 thinking) rather than deliberate analysis (System 2 thinking) when interacting with AI (Kahneman, 2011). This reliance can result in overtrust or distrust, depending on initial impressions or anecdotal experiences. For example, highly anthropomorphic AI interfaces might elicit unwarranted trust, while overly technical presentations might deter engagement. A notable example is the acceptance of self-driving cars, where initial trust in the technology is influenced by user experience, media narratives, and societal attitudes toward risk (Hancock et al., 2020).

Context Sensitivity of Data: Trust in AI is heavily influenced by the context in which data is collected, processed, and utilized. The economic and informational value of data depends on both its content and the context of its application, requiring systems to adapt to these nuances. Misaligned use cases can erode trust, such as when health data intended for medical purposes is repurposed for marketing without consent. This sensitivity highlights the importance of ensuring data practices align with user expectations and ethical standards.

Challenges to human-AI teams

Human–AI teaming refers to a collaborative partnership in which humans and artificial intelligence systems work together toward shared objectives, leveraging each other’s strengths for improved decision-making and problem-solving (Amodei et al., 2016). In such teams, AI can provide rapid data processing, pattern recognition, and predictive insights, while humans bring contextual knowledge, ethical judgment, and creative thinking. Effective human–AI teaming depends on proper alignment of goals, transparent communication of AI processes (interpretability), and ongoing updates to ensure that evolving system behaviours remain faithful to human intentions.

Alignment

Alignment ensures that humans and AI systems share a common objective. It prevents unintended outcomes when the AI optimizes for goals that diverge from human values (Amodei et al., 2016; Russell, 2019). When objectives are misaligned, the system can exhibit seemingly rational but undesirable behaviors, potentially causing harm. In practice, alignment means embedding human values and constraints in the AI’s objectives.

Interpretability

Interpretability addresses the challenge of explaining how an AI system arrives at its decisions or recommendations (Doshi-Velez & Kim, 2017; Lipton, 2018). Without sufficient transparency, users can struggle to trust or effectively collaborate with AI, particularly in high-stakes scenarios. Interpretable methods allow humans to scrutinize, validate, and refine AI outputs, fostering better understanding and trust.

The updating problem

The updating problem arises when an AI’s objectives, models, or environments evolve over time, creating the need for ongoing alignment checks to ensure the system remains faithful to human intentions (Bostrom, 2014; Russell, 2019). If updates occur without maintaining or revisiting alignment, the AI may drift toward goals or behaviors that no longer match the team’s desired outcomes. Iterative, transparent monitoring and updates keep AI aligned throughout its lifecycle.
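The ongoing alignment check described above can be sketched as a regression test that runs before an updated model replaces the current one. This is a minimal sketch under stated assumptions. The function names, the toy reference cases, and the thresholds `min_rate` and `max_drop` are hypothetical. Agreement with a fixed set of human-approved decisions is only one possible proxy for alignment.

```python
from collections.abc import Callable, Sequence

# A reference case pairs model inputs with the outcome the human team approved.
# The cases and field names used below are hypothetical illustrations.
ReferenceCase = tuple[dict[str, float], str]


def agreement_rate(model: Callable[[dict[str, float]], str],
                   cases: Sequence[ReferenceCase]) -> float:
    """Share of reference cases where the model reproduces the human-approved outcome."""
    if not cases:
        raise ValueError("the reference set must not be empty")
    matches = sum(1 for inputs, approved in cases if model(inputs) == approved)
    return matches / len(cases)


def update_passes_check(old_model, new_model, cases: Sequence[ReferenceCase],
                        min_rate: float = 0.95, max_drop: float = 0.02) -> bool:
    """Accept an update only if the new model still agrees with human-approved outcomes
    and has not lost more than max_drop agreement relative to the model it replaces.
    min_rate and max_drop are illustrative assumptions, not recommended values."""
    old_rate = agreement_rate(old_model, cases)
    new_rate = agreement_rate(new_model, cases)
    return new_rate >= min_rate and (old_rate - new_rate) <= max_drop


# Toy example: a hypothetical credit decision rule and a "simplified" update of it.
cases: list[ReferenceCase] = [
    ({"income": 50, "debt": 10}, "approve"),
    ({"income": 20, "debt": 30}, "decline"),
    ({"income": 80, "debt": 5}, "approve"),
    ({"income": 30, "debt": 25}, "decline"),
]


def current_model(x):
    return "approve" if x["income"] > 2 * x["debt"] else "decline"


def updated_model(x):
    return "approve" if x["income"] > 25 else "decline"


print(agreement_rate(updated_model, cases))
print(update_passes_check(current_model, updated_model, cases))
```

Here the update disagrees with one of the four human-approved decisions, so the check rejects it. The team can then decide whether the model or the reference set should change. Keeping the reference set fixed across versions is what makes gradual drift visible.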

In the digital economy, data has become the lifeblood of technological innovation and economic growth. The advent of artificial intelligence (AI) has amplified the strategic importance of data, transforming it into a pivotal resource for decision-making, personalization, and automation. Understanding the value of data, however, requires exploring its multifaceted roles, its ethical implications, and the challenges of building the trust needed for its sustainable use. This section examines the importance of data in the AI era. It draws on system theory and behavioral economics to offer practical guidance for professionals, particularly in marketing and business.

Data as the Foundation of AI

Artificial intelligence systems depend on vast amounts of data to learn, adapt, and make predictions. In essence, data is both the input and output of AI processes. Machine learning models, for example, use training datasets to identify patterns and improve performance over time. This iterative process makes data not just a static resource but a dynamic driver of innovation.

The value of data lies in its ability to unlock actionable insights.

Contextual and Relational Value

Data’s value is not absolute; it is highly contextual and relational (Leonelli, 2016). The system theoretical perspective emphasizes that the worth of data depends on how it is integrated into broader systems of use (Mayer-Schönberger & Cukier, 2013). For example, data on consumer preferences becomes valuable when combined with demographic data and applied to predictive algorithms (Davenport & Patil, 2012). Similarly, behavioural economics underscores the importance of framing and context in data interpretation (Kahneman & Tversky, 1979). The same data can yield vastly different outcomes depending on how it is utilized and for what purpose.

In marketing, raw data must be transformed into meaningful information through analysis and interpretation (Provost & Fawcett, 2013). Companies must focus on the “right” data, meaning data that aligns with their strategic objectives and can be leveraged ethically to achieve desired outcomes.

The Ethical and Privacy Challenges

As the value of data increases, so do concerns about privacy and ethics (Lyon, 2014). The “privacy paradox” describes individuals who express concern about their data privacy yet still engage in behaviours that expose their information. This paradox highlights the complexities of data trust (Norberg et al., 2007). In the age of AI, these concerns are magnified by the scale and opacity of data processing (Zuboff, 2019).

Ethical data usage is critical for maintaining trust (Mittelstadt et al., 2016). Organizations must navigate challenges such as data bias, consent, and transparency. When trained on biased datasets, AI systems can perpetuate inequities, leading to reputational damage and loss of consumer trust (O’Neil, 2016). Ensuring ethical data practices involves implementing robust governance frameworks, defining guardrails, fostering transparency, and engaging in open dialogues with stakeholders (Floridi, 2018).

Trust as an Enabler of Data Sharing

From a marketing perspective, trust can be cultivated through transparency and reciprocity. Consumers are more likely to share data when they perceive a fair exchange of value, such as personalized experiences or improved services. Ethical governance and adherence to regulations, like the General Data Protection Regulation (GDPR), further reinforce trust by demonstrating accountability.

The following elements are essential components of a data strategy tailored for the AI era:

Data Quality over Quantity

While big data often garners attention, quality data is more critical for AI applications. High-quality data ensures accuracy and relevance, reducing the risk of biases and errors in AI outputs. Organizations should invest in data cleaning and validation processes to maximize the utility of their datasets.
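The following sketch illustrates basic validation. It checks a list of customer records for missing required fields, duplicate identifiers, and implausible values. The field names (`customer_id`, `age`, `country`) and the permitted age range are hypothetical. Real validation rules would come from an organization’s own data definitions.

```python
def validate_records(records, required=("customer_id", "age", "country"), age_range=(0, 120)):
    """Return a list of human-readable data-quality issues found in record dicts.

    The required fields and the age range are hypothetical example rules.
    """
    issues = []
    seen_ids = set()
    low, high = age_range
    for row, rec in enumerate(records):
        # Completeness: required fields must be present and non-empty.
        missing = [field for field in required if rec.get(field) in (None, "")]
        if missing:
            issues.append(f"row {row}: missing {', '.join(missing)}")
        # Uniqueness: each customer_id should appear only once.
        cid = rec.get("customer_id")
        if cid is not None:
            if cid in seen_ids:
                issues.append(f"row {row}: duplicate customer_id {cid}")
            seen_ids.add(cid)
        # Plausibility: numeric ages must fall inside the permitted range.
        age = rec.get("age")
        if isinstance(age, (int, float)) and not low <= age <= high:
            issues.append(f"row {row}: age {age} outside {low}-{high}")
    return issues


records = [
    {"customer_id": 1, "age": 34, "country": "DE"},
    {"customer_id": 2, "age": 150, "country": "FR"},
    {"customer_id": 1, "age": 41, "country": ""},
]
for issue in validate_records(records):
    print(issue)
```

In practice, checks like these would run each time new data enters a training pipeline, so that problems are caught before they reach a model.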

Ethical Data Governance

Establishing ethical guidelines and frameworks for data use is essential. This includes securing informed consent, anonymizing sensitive information, and conducting regular audits to ensure compliance with privacy laws and ethical standards.
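One common technique for protecting direct identifiers is pseudonymization with a keyed hash. The sketch below uses HMAC-SHA256 from Python’s standard library. The field names, the normalization step, and the key handling are assumptions made for illustration. Pseudonymization is weaker than anonymization. Under the GDPR, pseudonymized data that can be re-linked using additional information (here, the key) is still personal data, so this technique complements rather than replaces consent, audits, and the other safeguards listed above.

```python
import hashlib
import hmac


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace an identifier with an HMAC-SHA256 token (64 hex characters).

    The same normalized value and key always yield the same token, so datasets can
    still be joined. Without the key, guesses cannot be hashed and compared to a token.
    Trimming and lowercasing is an assumed normalization suited to e-mail addresses.
    """
    normalized = value.strip().lower().encode("utf-8")
    return hmac.new(secret_key, normalized, hashlib.sha256).hexdigest()


def pseudonymize_record(record: dict, fields: tuple[str, ...], secret_key: bytes) -> dict:
    """Return a copy of the record with the listed identifier fields pseudonymized."""
    out = dict(record)
    for field in fields:
        if out.get(field) is not None:
            out[field] = pseudonymize(str(out[field]), secret_key)
    return out


# Hypothetical key; in practice it would be held in a key-management system, not in code.
KEY = b"example-key-do-not-use"
customer = {"email": " Ana@Example.com", "purchases": 3}
print(pseudonymize_record(customer, ("email",), KEY))
```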

Building Collaborative Ecosystems

Sharing data across organizational boundaries can create new opportunities for innovation. Collaborative ecosystems, supported by trust and mutual agreements, allow companies to pool resources and achieve shared goals, such as enhancing customer experiences or developing AI-driven solutions.

Leveraging AI for Data Insights

AI itself can be used to improve data management and analytics. Machine learning models can identify patterns, detect anomalies, and generate predictions that enhance decision-making processes. Marketing professionals can use AI tools to segment audiences, optimize campaigns, and measure ROI effectively.

Investing in Explainability and Transparency

Ensuring that AI systems provide interpretable and explainable outputs is critical for maintaining user trust. Marketers and business leaders should prioritize tools that make AI decision-making transparent, allowing stakeholders to understand and trust the processes behind data-driven recommendations.
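For the simplest class of models, an explanation can be computed exactly. In a linear scoring model, the score is a bias term plus the sum of weight × feature value, so each feature contributes exactly its weight times its value. The sketch below ranks those contributions so that a marketer could see, for example, why a customer received a high propensity score. The feature names and weights are hypothetical. For non-linear models, attribution methods such as SHAP or LIME approximate this kind of breakdown instead.

```python
def explain_linear_score(weights: dict[str, float], bias: float,
                         features: dict[str, float]) -> tuple[float, list[tuple[str, float]]]:
    """Score a linear model and break the score into per-feature contributions.

    For score = bias + sum(w_i * x_i), feature i contributes exactly w_i * x_i.
    Missing features are treated as 0 (an assumption of this sketch).
    """
    contributions = {name: w * features.get(name, 0.0) for name, w in weights.items()}
    score = bias + sum(contributions.values())
    # Largest absolute contributions first, so the main drivers are listed at the top.
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return score, ranked


# Hypothetical propensity model for a marketing campaign.
weights = {"visits_last_30d": 0.4, "days_since_purchase": -0.1, "newsletter_opt_in": 1.5}
score, ranked = explain_linear_score(
    weights, bias=-1.0,
    features={"visits_last_30d": 5, "days_since_purchase": 12, "newsletter_opt_in": 1},
)
print(f"score = {score:.2f}")
for name, contribution in ranked:
    print(f"{name:>20}: {contribution:+.2f}")
```

Signed contributions show users both what raised a score and what lowered it. This is the kind of output an explanation dashboard could visualize.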

From a system theoretical approach, trust is seen as a mechanism to reduce complexity and enable interaction within uncertain environments (Luhmann, 1979). Niklas Luhmann’s framework conceptualizes trust as an interplay between trustors (users) and trustees (systems), mediated by contextual and relational factors (Kramer, 1999). In AI, this translates to a network of trust relationships spanning end-users, developers, organizations, and broader societal systems (Castelfranchi & Falcone, 2010).

Systemism offers a particularly suitable social science framework for examining trust relationships and their components (Bunge, 2000). Trust relationships function as systems with interconnected elements (Hall & Fagen, 1968), helping to reduce environmental complexity to manageable levels (Cordini, 2007). These elements include applications, processes, people, information, and services (Sillitto et al., 2018).

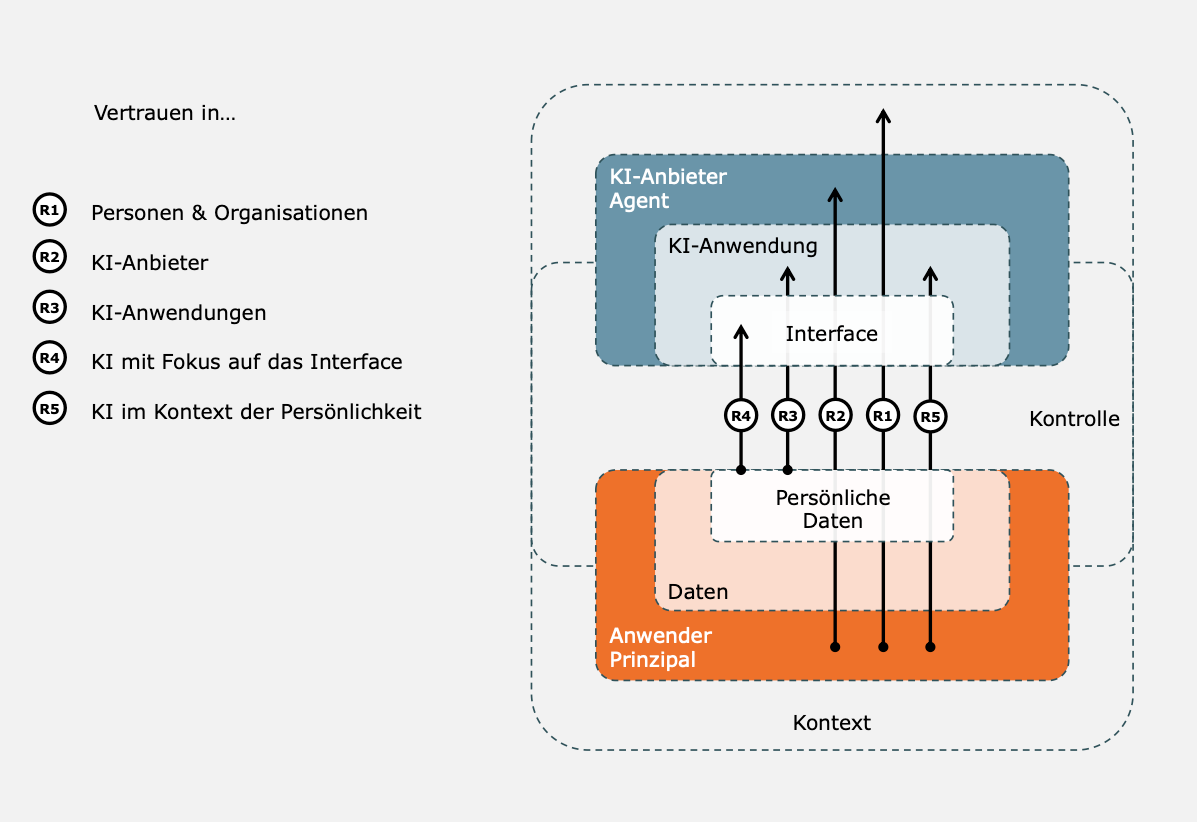

The ‘Foundational Trust Framework’ by Lukyanenko et al. (2022) suggests that all participants in trust relationships are systems, which implies that definitions of trust vary by context. Three key stakeholder groups are central to AI trust relationships: end users, subject matter experts, and society (Lockey et al., 2021). Following Luhmann’s systems theory (1997), users and AI caretakers form a subsystem within society. This creates a network of trust built from individual relationships (Söllner et al., 2016), in which systems at different levels influence each other (Bunge, 2006; Lukyanenko et al., 2022). A control system supports this framework by ensuring digital sovereignty over data assets (Zillner et al., 2021). Together, these elements result in five significant trust relationships within AI systems.

R1 - Trust in Persons and Organizations:

This relationship is built on the willingness to be vulnerable based on the belief in another party’s competence, benevolence, and integrity. Trust in individuals and organizations is foundational and often influenced by cultural, legal, and institutional support systems. For instance, trust in a company like Google or Microsoft can drive user engagement with AI tools.

R2 - Trust in Agents of the Digital Economy:

This involves trust in the platforms and intermediaries that facilitate digital transactions. It includes confidence in their ability to manage data responsibly and provide value. Examples include trust in platforms like Amazon or Airbnb, which act as intermediaries between users and services.

R3 - Trust in AI Systems and Applications:

This centres on trust in AI systems’ functionality, reliability, and fairness. Users often rely on AI applications to make decisions without understanding the underlying algorithms. Trust here is built through transparency, explainability, and consistent performance.

R4 - Trust in the Interface:

The interface is the first point of contact between users and AI systems. An intuitive, user-friendly interface can significantly enhance initial trust. Features like human-like communication, straightforward visual design, and accessible controls are critical in this relationship.

R5 - Trust in the Context of Personality:

This trust relationship is deeply tied to individual differences, including personality traits and preferences. AI systems adapting to user needs, respecting privacy, and demonstrating sensitivity to cultural and personal contexts foster stronger trust bonds.

Key components of this systemic view on trust relationships:

Multi-Level Trust Systems: Trust operates across hierarchical levels, from individual interactions with AI interfaces to organizational and societal trust in regulatory frameworks (Coleman, 1990). Each level influences the others, creating a dynamic system where trust flows both vertically and horizontally.

Emergence and Feedback Loops: Trust evolves through iterative interactions, where positive experiences reinforce trust, while breaches trigger scepticism and call for accountability (Lewicki et al., 1998). For example, an AI system that consistently delivers accurate and fair results fosters trust, while errors or biases necessitate corrective action to restore confidence (Muir, 1994).

Control and Governance: Effective trust systems require robust oversight mechanisms, including ethical guidelines, transparency protocols, and third-party audits (Power, 2007). These measures ensure accountability and align AI development with societal values. Luhmann’s perspective emphasizes that trust mechanisms reduce complexity by providing a framework for predictable interactions, even in high-uncertainty environments.
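The feedback loop described under Emergence and Feedback Loops can be illustrated with a toy update rule. This is a hypothetical sketch, not a model proposed by Lewicki et al. (1998) or Muir (1994). Trust is represented as a number between 0 and 1. It moves toward 1 after each satisfactory outcome and toward 0 after each failure. The assumed parameters `gain` and `loss` encode the intuition that a breach weighs more heavily than a success.

```python
def update_trust(trust: float, outcome_ok: bool, gain: float = 0.1, loss: float = 0.3) -> float:
    """Return the trust level after one interaction.

    A good outcome closes a fraction `gain` of the gap to full trust; a failure removes
    a fraction `loss` of the current trust. Both rates are illustrative assumptions.
    """
    if not 0.0 <= trust <= 1.0:
        raise ValueError("trust must lie in [0, 1]")
    if outcome_ok:
        return trust + gain * (1.0 - trust)
    return trust - loss * trust


def simulate(outcomes: list[bool], start: float = 0.5) -> list[float]:
    """Trust trajectory over a sequence of interaction outcomes, starting at `start`."""
    history = [start]
    for ok in outcomes:
        history.append(update_trust(history[-1], ok))
    return history


# Two accurate results followed by one visible error.
for step, level in enumerate(simulate([True, True, False])):
    print(step, round(level, 4))
```

With these parameters, a single error more than cancels two successes, so trust ends below its starting point. Choosing `loss` larger than `gain` is the design choice that produces this asymmetry. Calibrating such a rule for a real system would require empirical data.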

Conceptualizations of trust in the context of the respective relationship

| Relationship | Conceptualization of trust in the context of the relationship | Selected literature |
| --- | --- | --- |
| R1. Trust in persons & organizations | A party’s willingness to be vulnerable to the actions of another party. This willingness is based on the expectation that the other party will take a particular action that is important to the trustor, regardless of the ability to monitor or control that other party (Mayer et al., 1995). | Mayer et al., 1995; Giffin, 1967; Deutsch, 1976; Rousseau et al., 1998; Fukuyama, 1995 |
| R2. Trust in agents of the digital economy | An implicit contractual relationship between trustor and trustee that serves as a mechanism to stabilize uncertain expectations (Ripperger, 2003). | Ripperger, 2003; McKnight et al., 1998, 2002; Gefen et al., 2003; Koufaris & Hampton-Sosa, 2004; Dinev & Hart, 2006 |
| R3. Trust in AI systems and applications | A human, mental, and physiological process that takes into account the characteristics of a specific AI-based system, a class of such systems, or other systems in which it is embedded or with which it interacts, in order to control the extent and parameters of interaction with these systems (Lukyanenko et al., 2022). | Lukyanenko et al., 2022; Glikson & Woolley, 2020; Muir, 1994; Söllner et al., 2012; Lee & Moray, 1992; Hoff & Bashir, 2015; Choung, 2022; Thiebes et al., 2021 |
| R4. Trust in the interface | The use of relationship-oriented intelligence and its sociocultural reference points to design a trust-promoting human-machine interface (Bickmore & Cassell, 2001). | Bickmore & Cassell, 2001; Zierau, 2021; Vössing et al., 2022; Van Pinxteren et al., 2023 |
| R5. Trust in the context of personality | Personality traits, along with other individual characteristics such as age, culture, and gender, determine an individual’s disposition to trust (Hoff & Bashir, 2015). | Riedl, 2022; McKnight et al., 2002; Szalma & Taylor, 2011 |

A detailed compilation of the dimensions that describe the basis of trust can be found in Gefen (2003) or J. D. Lee and See (2004), among others.

System theoretical insights underscore the need for a holistic understanding of trust relationships, accounting for emergent complexities and multi-level interactions. By implementing strategies centered on transparency, adaptability, reciprocity, and ethical governance, organizations can build resilient trust systems that empower users and ensure sustainable access to data.

Transparency and Explainability: Providing clear, accessible explanations of how AI systems operate fosters user confidence (Miller, 2019; Winfield & Jirotka, 2018). Transparency extends to data governance, algorithmic decision-making, and potential limitations. For instance, interactive dashboards and visualizations can help users understand AI processes and outcomes, bridging the gap between technical complexity and user comprehension (Gunning & Aha, 2019; Vössing et al., 2022). Companies such as Google and IBM have developed explainable AI tools and frameworks to address these challenges.

Adaptive Design: AI systems should be designed to account for user diversity, adapting to individual preferences and contextual needs (Følstad & Brandtzaeg, 2017). For instance, interfaces that employ proportional anthropomorphism (human-like traits applied in moderation) can enhance trust without misleading users about AI capabilities. Adaptive systems should also consider cultural nuances and accessibility to ensure inclusivity (Isbister, 2016). Examples include voice assistants that tailor their tone and language to user preferences, improving engagement and trust.

Reciprocity and Value Exchange: Users are more willing to share data when they perceive tangible benefits (Acquisti et al., 2015). Organizations should emphasize fair exchanges, such as enhanced personalization or improved services, while ensuring data privacy. Initiatives like loyalty programs or data dividends can reinforce the perception of mutual benefit. This approach is particularly relevant to the privacy paradox, in which users are willing to trade data for perceived value but remain wary of misuse.

Ethical Governance: Adopting robust ethical frameworks and regulatory compliance—such as the EU AI Act—signals a commitment to accountability (Mittelstadt, 2019). Certification schemes and audits can serve as trust signals, reinforcing user confidence. Ethical governance also entails proactively addressing biases and ensuring equitable outcomes across diverse demographics (O’Neil, 2016). The role of independent oversight bodies in monitoring compliance is critical to maintaining public trust.

Digital Nudging: Subtle interventions in user interfaces can guide decisions that align with privacy preferences and trust-building (Thaler & Sunstein, 2008). For example, framing consent options transparently can reduce cognitive overload and encourage informed choices. Nudging strategies should be designed to respect autonomy while promoting responsible data sharing. Behavioural economics insights can inform the design of these interventions, ensuring they are effective without being manipulative.

However, digital nudging raises critical ethical concerns, particularly in light of the EU AI Act’s restrictions on manipulative techniques (Yeung, 2017). Behavioural interventions can improve user decision-making, but they also risk infringing on individual autonomy and can amount to a form of algorithmic manipulation (Metzinger, 2019). Careful design requires maintaining transparency, ensuring genuine user consent, and avoiding exploitative psychological mechanisms that could compromise user agency (Sunstein, 2016). Ethical nudging must prioritize user welfare, provide clear opt-out mechanisms, and be subject to rigorous regulatory oversight to prevent misuse (Acquisti et al., 2017).

Community Engagement: Actively involving stakeholders in AI development fosters trust by demonstrating responsiveness to societal concerns (Sætra, 2021). Public consultations, open-source initiatives, and collaborative research can build a sense of shared ownership and accountability. This participatory approach aligns with broader trends toward democratizing technology development, ensuring it reflects diverse perspectives and needs.

Ultimately, fostering trust in AI is not just a technological challenge but a societal imperative, shaping the future of human-machine collaboration. As AI continues to integrate into daily life, sustained efforts to address trust barriers will determine the extent to which this transformative technology can achieve its full potential.

Blind reliance on AI is never advisable; instead, fostering informed trust enables society to leverage AI’s potential while mitigating its risks.

References Chapter 4:

Amodei, D., Olah, C., Steinhardt, J., Christiano, P., Schulman, J., & Mané, D. (2016). Concrete problems in AI safety. arXiv preprint arXiv:1606.06565.

Bostrom, N. (2014). Superintelligence: Paths, dangers, strategies. Oxford University Press.

Bunge, M. (2000). Systemism: the alternative to individualism and holism. The Journal of Socio-Economics, 29(2), 147–157. https://doi.org/10.1016/s1053-5357(00)00058-5

Bunge, M. (2006). Chasing reality. University of Toronto Press. https://doi.org/10.3138/9781442672857

Castelfranchi, C., & Falcone, R. (2010). Trust theory: A socio-cognitive and computational model. John Wiley & Sons.

Coleman, J. S. (1990). Foundations of social theory. Harvard University Press.

Cordini, M. (2007). Vertrauen im Prozess komplexer Systeme: Zur Führungsfunktion des Mittelmanagements als Hauptträger personellen Vertrauens [Dissertation]. Gottfried Wilhelm Leibniz Universität Hannover.

Corritore, C. L., Kracher, B., & Wiedenbeck, S. (2003). On-line trust: Concepts, evolving themes, a model. International Journal of Human-Computer Studies, 58(6), 737–758. https://doi.org/10.1016/S1071-5819(03)00041-7

Davenport, T. H., & Patil, D. J. (2012). Data scientist: The sexiest job of the 21st century. Harvard Business Review, 90(10), 70–76.

Doshi-Velez, F., & Kim, B. (2017). Towards a rigorous science of interpretable machine learning. arXiv preprint arXiv:1702.08608.

Floridi, L. (2018). Soft ethics, the governance of the digital, and the digital governance of the physical. Philosophy & Technology, 31, 341–348. https://doi.org/10.1007/s13347-018-0313-8

Floridi, L., & Cowls, J. (2019). A unified definition of artificial intelligence. Minds and Machines, 29(4), 495–514. https://doi.org/10.1007/s11023-019-09579-2

Følstad, A., & Brandtzaeg, P. B. (2017). Chatbots and the new world of HCI. interactions, 24(4), 38–42. https://doi.org/10.1145/3085558

Gunning, D., & Aha, D. W. (2019). DARPA’s explainable artificial intelligence (XAI) program. AI Magazine, 40(2), 44–58. https://doi.org/10.1609/aimag.v40i2.2850

Hall, A. D., & Fagen, R. E. (1968). Definition of system. In W. Buckley (Ed.), Systems Research for Behavioral Science (pp. 81–92). Routledge.

Hancock, P. A., et al. (2020). A meta-analysis of factors affecting trust in human-automation interaction. Human Factors, 62(7), 1175–1194. https://doi.org/10.1177/0018720819870942

Hoff, K. A., & Bashir, M. (2015). Trust in automation: Integrating empirical evidence on factors that influence trust. Human Factors, 57(3), 407–434. https://doi.org/10.1177/0018720814547570

Isbister, K. (2016). How the design of social technologies shapes social interaction. interactions, 23(5), 50–53. https://doi.org/10.1145/2973565

Kahneman, D. (2011). Thinking, fast and slow. Farrar, Straus and Giroux.

Kahneman, D., & Tversky, A. (1979). Prospect theory: An analysis of decision under risk. Econometrica, 47(2), 263–291. https://doi.org/10.2307/1914185

Kramer, R. M. (1999). Trust and distrust in organizations: Emerging perspectives, enduring questions. Annual Review of Psychology, 50(1), 569–598. https://doi.org/10.1146/annurev.psych.50.1.569

Lee, J. D., & Moray, N. (1992). Trust, control strategies and allocation of function in human-machine systems. Ergonomics, 35(10), 1243–1270. https://doi.org/10.1080/00140139208967392

Leonelli, S. (2016). Data-centric biology: A philosophical study. University of Chicago Press.

Lewicki, R. J., et al. (1998). Trust and distrust: New relationships and realities. Academy of Management Review, 23(3), 438–458. https://doi.org/10.5465/amr.1998.926620

Lipton, Z. C. (2018). The mythos of model interpretability. Communications of the ACM, 61(10), 36–43. https://doi.org/10.1145/3233231

Lockey, S., Gillespie, N., Holm, D. & Someh, I. A. (2021). A review of trust in artificial intelligence: Challenges, vulnerabilities and future directions. In Proceedings of the Annual Hawaii International Conference on System Sciences (pp. 5463–5472). https://doi.org/10.24251/hicss.2021.664

Luhmann, N. (1979). Trust and power. John Wiley & Sons.

Luhmann, N. (1997). Die Gesellschaft der Gesellschaft [The Society of Society]. Suhrkamp.

Lukyanenko, R., Maass, W. & Storey, V. C. (2022). Trust in artificial intelligence: From a Foundational Trust Framework to emerging research opportunities. Electronic Markets, 32(4), 1993–2020. https://doi.org/10.1007/s12525-022-00605-4

Lyon, D. (2014). Surveillance, Snowden, and big data: Capacities, consequences, critique. Big Data & Society, 1(2), 1–13. https://doi.org/10.1177/2053951714541861

Mayer, R. C., Davis, J. H., & Schoorman, F. D. (1995). An integrative model of organizational trust. Academy of Management Review, 20(3), 709–734. https://doi.org/10.2307/258792

Mayer-Schönberger, V., & Cukier, K. (2013). Big data: A revolution that will transform how we live, work, and think. Houghton Mifflin Harcourt.

McKnight, D. H., & Chervany, N. L. (2001). What trust means in e-commerce customer relationships: An interdisciplinary conceptual typology. International Journal of Electronic Commerce, 6(2), 35–59.

Metzinger, T. (2019). Ethics of artificial intelligence: Some challenges and opportunities. Philosophy & Technology, 32(4), 673–690. https://doi.org/10.1007/s13347-019-00351-x

Miller, T. (2019). Explanation in artificial intelligence: Insights from the social sciences. Artificial Intelligence, 267, 1–38. https://doi.org/10.1016/j.artint.2018.07.007

Mittelstadt, B. (2019). Principles alone cannot guarantee ethical AI. Nature Machine Intelligence, 1(11), 501–507. https://doi.org/10.1038/s42256-019-0114-4

Mittelstadt, B., et al. (2016). Ethics of algorithms: Mapping the debate. Big Data & Society, 3(2), 1–21. https://doi.org/10.1177/2053951716679679

Muir, B. M. (1994). Trust in automation: Part I. Theoretical issues. Ergonomics, 37(10), 1573–1593. https://doi.org/10.1080/00140139408964957

Nichols, T. M. (2017). The death of expertise: The campaign against established knowledge and why it matters. Oxford University Press.

Norberg, P. A., et al. (2007). The privacy paradox: Personal information disclosure intentions versus behaviors. Journal of Consumer Affairs, 41(1), 100–126.

O’Neil, C. (2016). Weapons of math destruction: How big data increases inequality and threatens democracy. Crown.

Pavlou, P. A. (2003). Consumer acceptance of electronic commerce: Integrating trust and risk with the technology acceptance model. International Journal of Electronic Commerce, 7(3), 101–134.

Piketty, T. (2020). Capital and ideology. Harvard University Press.

Power, M. (2007). Organized uncertainty: Designing a world of risk management. Oxford University Press.

Provost, F., & Fawcett, T. (2013). Data science and its relationship to big data and data-driven decision making. Big Data, 1(1), 51–59. https://doi.org/10.1089/big.2013.1508

Russell, S. (2019). Human compatible: Artificial intelligence and the problem of control. Viking.

Sætra, H. S. (2021). The ethics of AI ethics: An evaluation of guidelines. Minds and Machines, 31, 535–571. https://doi.org/10.1007/s11023-021-09540-2

Sillitto, H., Griego, R. M., Arnold, E., Dori, D., Martin, J. F., McKinney, D., Godfrey, P., Krob, D. & Jackson, S. A. (2018). What do we mean by “system”? – System Beliefs and Worldviews in the INCOSE Community. INCOSE International Symposium, 28(1), 1190–1206. https://doi.org/10.1002/j.2334-5837.2018.00542.x

Söllner, M., Hoffmann, A. & Leimeister, J. M. (2016). Why different trust relationships matter for information systems users. European Journal of Information Systems, 25(3), 274–287. https://doi.org/10.1057/ejis.2015.17

Sunstein, C. R. (2016). The ethics of nudging. Yale Journal on Regulation, 33, 413–450.

Sunstein, C. R. (2017). #Republic: Divided democracy in the age of social media. Princeton University Press.

Thaler, R. H., & Sunstein, C. R. (2008). Nudge: Improving decisions about health, wealth, and happiness. Yale University Press.

Tschopp, M., & Ruef, M. (2020). AI & Trust - Stop asking how to increase trust in AI. Retrieved January 31, 2025, from https://www.researchgate.net/publication/339530999_AI_Trust_-Stop_asking_how_to_increase_trust_in_AI

Vössing, M., Kühl, N., Lind, M., & Satzger, G. (2022). Designing transparency for effective human–AI collaboration. Information Systems Frontiers, 24(3), 877–895. https://doi.org/10.1007/s10796-022-10284-3

Winfield, A. F. T., & Jirotka, M. (2018). Ethical governance is essential to building trust in robotics and artificial intelligence systems. Philosophical Transactions of the Royal Society A: Mathematical, Physical and Engineering Sciences, 376(2133), 20180085. https://doi.org/10.1098/rsta.2018.0085

Yeung, K. (2017). ‘Hypernudge’: Big Data as a mode of regulation by design. Information, Communication & Society, 20(1), 118–136. https://doi.org/10.1080/1369118X.2016.1186713

Zerilli, J., et al. (2019). Algorithmic decision-making and the control problem. Minds and Machines, 29(4), 555–578. https://doi.org/10.1007/s11023-019-09513-6

Zillner, S., Gómez, J. A., Robles, A. I., Hahn, T. P., Bars, L. L., Petkovic, M. & Curry, E. (2021). Data Economy 2.0: From Big Data Value to AI Value and a European Data Space. In The Elements of Big Data Value (pp. 379–399). Springer. https://doi.org/10.1007/978-3-030-68176-0_16

Zuboff, S. (2019). The age of surveillance capitalism. PublicAffairs.