
Content in this chapter

- Defining Trust

- Introducing the Iceberg Trust Model

- Development Methodology

- The Contextual Moderation Layer

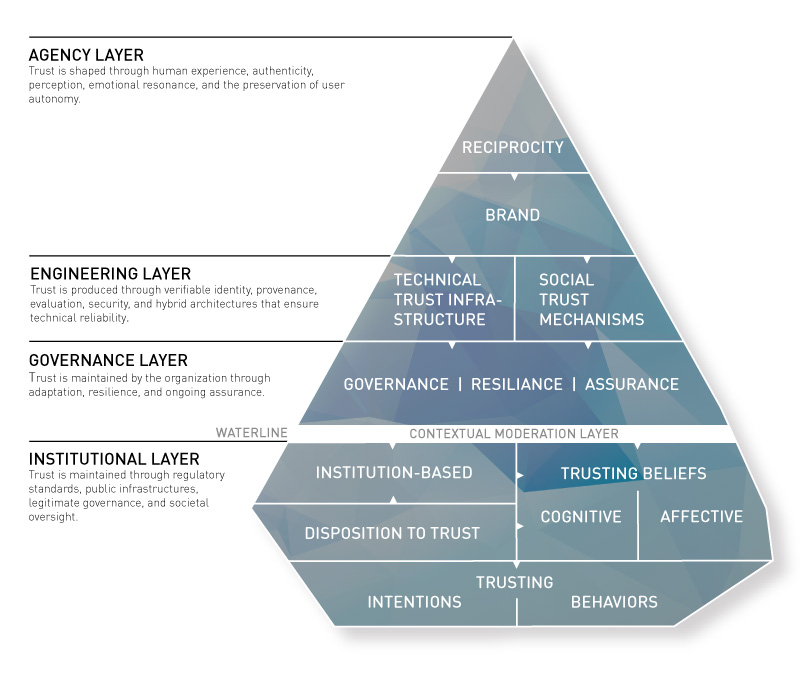

- 1. Above the Waterline: The Agency Layer

- 2. Above the Waterline: The Engineering Layer

- 3. The Governance Layer

- 4. Below the Waterline: The Institutional Layer

- The Dynamic Process Layer

- Empirical Validation

- Data Context

Today’s digital economy has enormous potential to transform most aspects of our lives. The World Wide Web lowers barriers to market entry, enables new, disruptive business models, and gradually erodes traditional fixtures such as brick-and-mortar shops. Technology breaks down the barriers between organizations, countries, and time zones. The path to modernity is characterized by social change that “disembeds” individuals from their traditional social relationships and localized interaction contexts (Giddens, 1995).

Every day, start-up companies develop new perspectives on issues of our daily lives and propose solutions that are often disruptive. Consumers are faced with technologies and social concepts (such as the sharing economy) that are new and unfamiliar. Digital trust increasingly detaches itself from the familiar concept of trust in persons and generalizes into institution-based trust (Luhmann, 1989). The iceberg approach to digital trust considers the different components of trust and provides a framework that helps marketing professionals understand how trust is engendered.

Ever since the World Wide Web became a global phenomenon, scientists from a wide range of disciplines have tried to make the trust construct comprehensible. These studies all build on the broad body of scientific work from a more analogue world. Deutsch (1962) developed one of the most fundamental definitions of trust. He describes a situation in which an individual faces two conditions. First, the individual has a choice between multiple options that result in outcomes that it perceives as either positive or negative. Second, the individual acknowledges that the actual result depends on the behaviour of another person. Deutsch also notes that the trusting individual perceives the effect of a bad result as stronger than the effect of a positive outcome. This corresponds to the findings of prospect theory discussed in chapter 2.

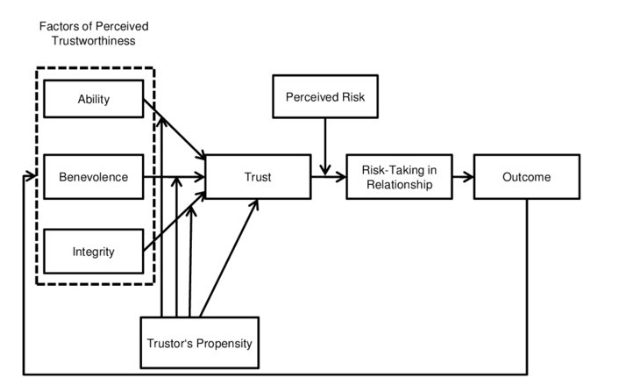

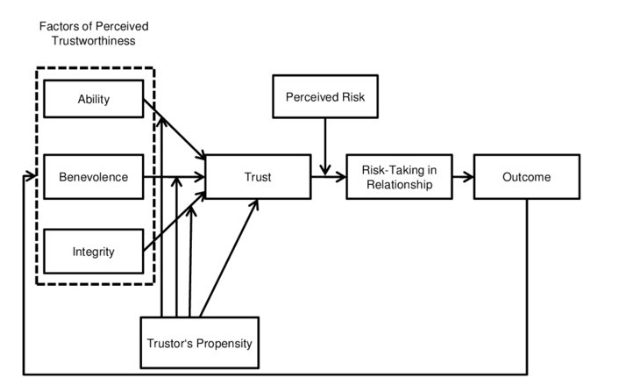

“Trust is the willingness of a party to be vulnerable to the actions of another party based on the expectation that the other will perform a particular action important to the trustor, irrespective of the ability to monitor or control that other party.”

(Mayer et al.)

Thus, trust is built if a person assumes that the desired beneficial result is more likely to occur than a bad outcome. In this context, the trusting person has no possibility of influencing the process. The following example illustrates this: a mother leaves her baby with a babysitter. She is aware that the consequences of her choice depend heavily on the behaviour of the babysitter. In addition, she knows that the damage from a bad outcome of this engagement carries more weight than the benefit of a good outcome. Important factors in the trust equation are lack of control, vulnerability, and the existence of risk (Petermann, 1985).

Multiple options and diverse scenarios lead to ambiguity and risk. According to Luhmann, individuals must eventually reduce complexity to decide in such situations. Trust is a mechanism that reduces social complexity. This context is best captured in the definition of trust developed by Mayer, Davis and Schoorman (1995: 712).

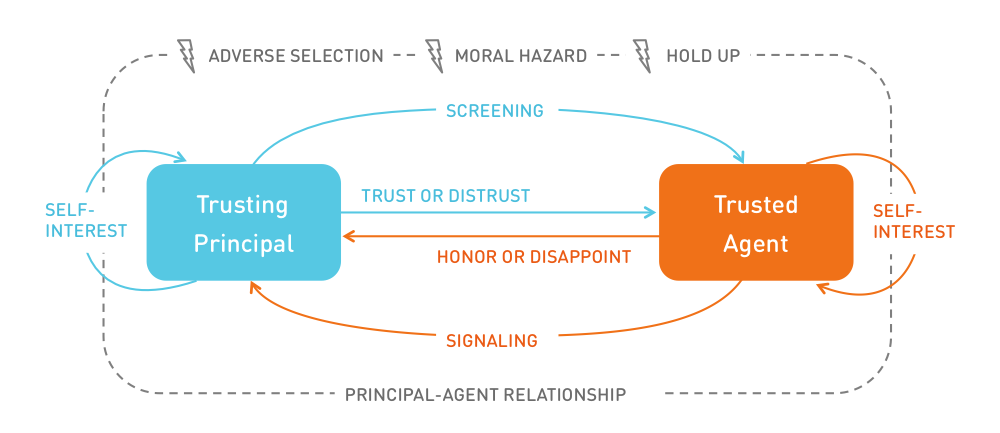

Trust can be an efficient help to overcome the agency dilemma. In economics, the principal-agent problem describes a situation where a person or entity (the agent) acts on behalf of another person (the principal). Due to information asymmetry, which is omnipresent in digital markets, the agent can either act in the interest of the principal or not by acting, for example, selfishly. Trust can solve such problems by absorbing behavioural risks (Ripperger, 1998). Screening and signalling activities are often inefficient in situations where information asymmetry exists due to high information costs. Trust can reduce such agency costs (including imminent utility losses). It can increase the agent’s intrinsic motivation to act in the principal’s interest.

You must trust and believe in people, or life becomes impossible.

(Anton Chekhov)

A trust relationship, as such, can be seen as a principal-agent relationship. The relationship between a trusting party and a trusted party is built on an implicit contract. Trust is provided as a down payment by the principal. The accepting agent can either honour this ex-ante payment or disappoint the principal.

According to principal-agent theory, a trusting party faces three risks:

Adverse Selection: When selecting an agent, the principal faces the risk of choosing an unwanted partner. Hidden characteristics of an agent or their service are not transparent to the principal before the contract is made. This leaves room for the agent to act opportunistically.

Moral Hazard: If information asymmetry occurs after the contract has been closed (ex post), the risk of moral hazard arises. The principal has insufficient information about the exertion level of the agent who fulfills the service. External effects, such as environmental conditions, can influence the agent’s actions.

Hold Up: This type of risk is particularly relevant for the discussion about the use of personal data. It arises when the principal makes a specific investment, such as providing sensitive data. After closing the contract, the agent can abuse this one-sided investment to the detriment of the principal. The subjective insecurity about the agent’s integrity stems from potentially hidden intentions.

The described risks can be reduced through signalling and screening activities. Signalling is a strategy by which agents communicate their nature and authentic character. Providing certifications and quality seals is a typical signalling activity. A principal, on the other hand, seeks to identify an agent’s true nature through screening activities. However, screening is only effective if signals are valid (the agent actually has the signalled characteristic) and if the absence of such a signal indicates the lack of this trait.
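The two conditions for effective screening can be checked mechanically. The following is a minimal sketch, assuming a hypothetical list of agents that each carry a boolean trait and a boolean signal (for example, a quality seal). The `Agent` record, the shop names, and the function name are illustrative and not part of the model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Agent:
    """Hypothetical agent record; all names and fields are illustrative."""
    name: str
    has_trait: bool     # the hidden characteristic the principal cares about
    shows_signal: bool  # e.g. displays a certification or quality seal


def signal_is_informative(agents: list[Agent]) -> bool:
    """Return True when screening on the signal would be effective.

    Condition 1: every agent showing the signal actually has the trait.
    Condition 2: every agent lacking the signal also lacks the trait.
    """
    valid = all(a.has_trait for a in agents if a.shows_signal)
    absence_means_lack = all(not a.has_trait for a in agents if not a.shows_signal)
    return valid and absence_means_lack


market = [
    Agent("shop_a", has_trait=True, shows_signal=True),
    Agent("shop_b", has_trait=False, shows_signal=False),
    Agent("shop_c", has_trait=False, shows_signal=True),  # seal without substance
]
print(signal_is_informative(market))      # shop_c violates condition 1
print(signal_is_informative(market[:2]))  # both conditions hold
```

A single agent that displays the seal without having the trait is enough to make the signal unreliable for the whole market, which is why seals only help when they are hard to fake.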

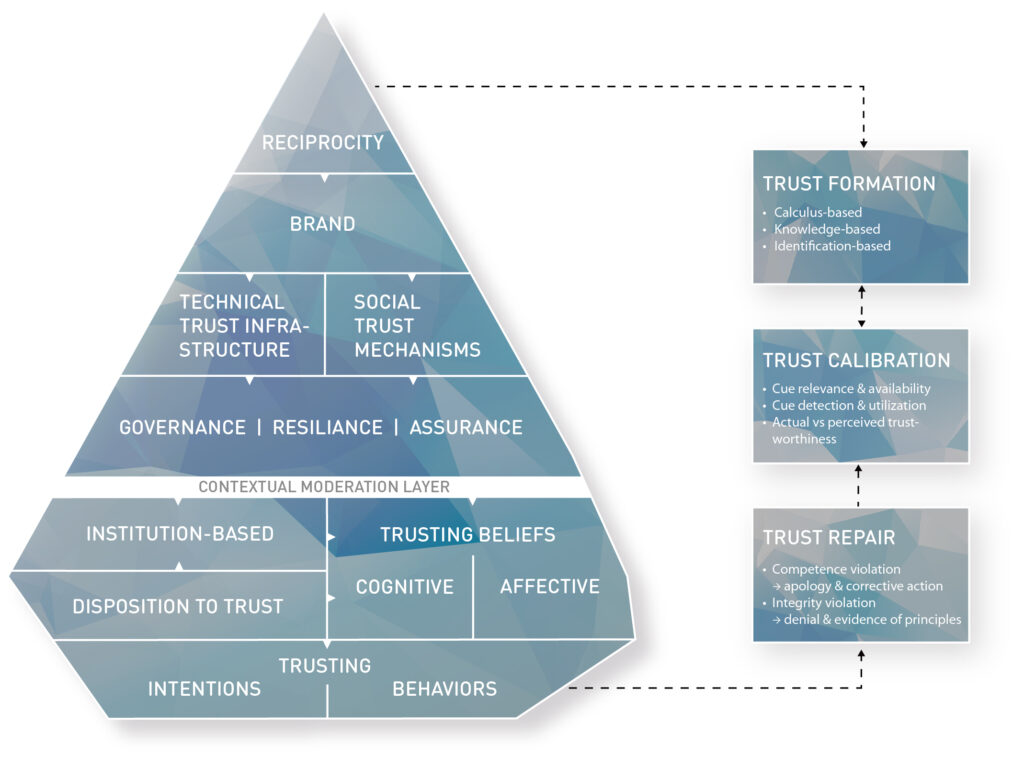

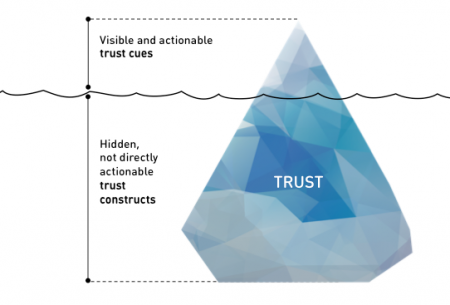

Our trust model resembles the shape of an iceberg.

The iceberg metaphor works in many different ways:

First of all, trust must be seen as a precious good. It is hard to build, but it can be lost very quickly. This makes it both a key differentiator to win against the competition and a potential pitfall that can easily destroy organizations. The Volkswagen emissions scandal has demonstrated how quickly trust is lost. It showed that even strong brands from traditional brick-and-mortar businesses can become severely damaged. The Internet accelerates this process. Bad news and reviews spread around the globe at lightning speed, and seemingly negligible pieces of information can cause harmful shitstorms that spiral out of control. Like an iceberg in the open sea, trust issues must be recognized early, and marketers must sail elegantly around such perils. On the other hand, trust is vital. Ongoing melting of the polar ice caps will inevitably raise sea levels and put coastal areas at risk. Similarly, if the trust factor is neglected, organizations miss out on potential business opportunities and risk being sunk by the competition.

Second, the sheer size of an iceberg usually remains unknown to the observer. This is because ice is less dense than liquid water. Hence, only about one-tenth of the volume of an iceberg is typically above the water level. This reflects that most of the determinants of trust are less known, not understood, or simply invisible. Moreover, it is difficult to manipulate those constructs. Trust, therefore, is often built when no one is looking. For companies, this means there are limited but essential options to address information asymmetry in digital markets.
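The one-tenth figure follows from Archimedes' principle: a floating body displaces its own weight in water. As a rough check, assume typical densities of about 917 kg/m³ for glacial ice and about 1025 kg/m³ for seawater. Both values are approximations that vary with temperature, salinity, and trapped air.

```latex
\[
\frac{V_{\text{submerged}}}{V_{\text{total}}}
  = \frac{\rho_{\text{ice}}}{\rho_{\text{seawater}}}
  \approx \frac{917}{1025} \approx 0.89,
\qquad
\frac{V_{\text{above}}}{V_{\text{total}}} \approx 1 - 0.89 = 0.11 .
\]
```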

Third, just as it takes time for water to freeze and form an iceberg, building trust usually takes time. The primary way to gain trust is to earn it by developing and nurturing relationships with customers and future prospects. Companies can and must have control over the quality and intensity of the customer experience if they want to influence the customer’s level of trust. Opportunities to shape the experience exist at touchpoints in the customer decision lifecycle. This model, however, focuses on understanding and engendering initial trust.

How can the multi-disciplinary determinants of digital trust be organized into a unified conceptual framework that (a) distinguishes observable trust cues from latent psychological constructs, (b) is grounded in established trust theory across disciplines, (c) is operationalizable for assessment, and (d) accommodates the specific trust dynamics of AI systems?

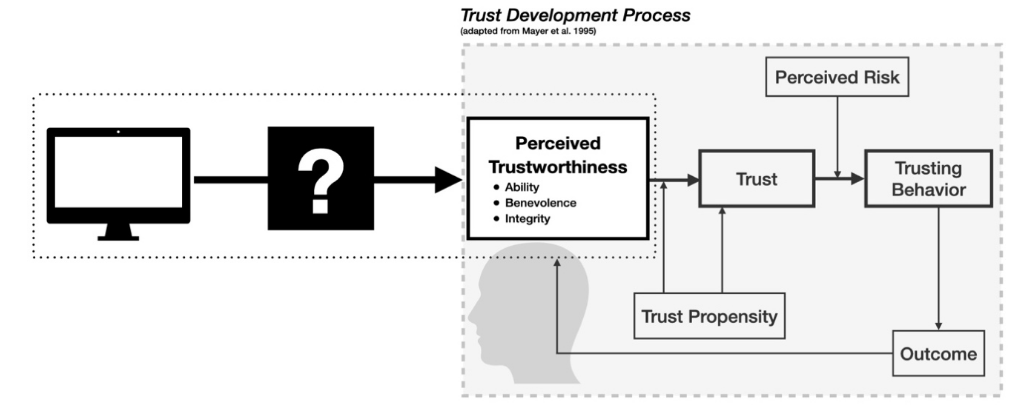

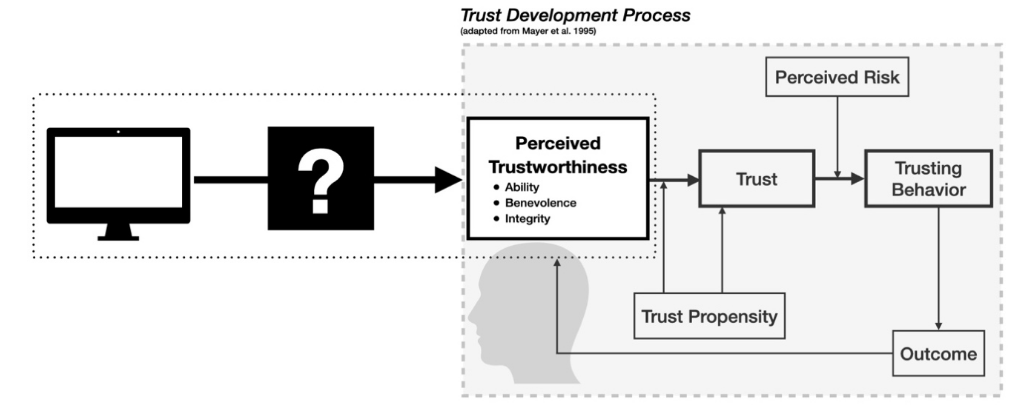

The Iceberg Trust Model was developed through a two-phase methodology.

Phase 1 used Design Science Research (Hevner et al., 2004) to construct the four-layer framework architecture and five design principles. The framework was evaluated through case analysis of real-world trust phenomena, including the Swiss e-ID referendum, AI marketing campaigns by Coca-Cola and Apple, and governance failures such as the Deloitte Australia incident.

Phase 2 applied a grounded-theory literature review (Wolfswinkel, Furtmueller, and Wilderom, 2013) to operationalize the framework into a structured, multi-level classification scheme. This is a literature-synthesis method that adapts the three-phase coding procedure of Strauss and Corbin (1998) to a defined interdisciplinary corpus, rather than classical grounded theory on fresh empirical fieldwork. A corpus of 34 primary sources spanning seven disciplines was coded in three phases: open coding produced approximately 250 trust-related concepts; axial coding consolidated these into 15 emergent categories; and selective coding integrated them around the core category of digital trust formation. The resulting classification scheme comprises 10 constructs and 124 trust cues. A conceptual coverage plateau was reached at source 25 of 34.

Phase 1: Framework Architecture (Design Science Research)

The four-layer architecture (Agency, Engineering, Governance, Institutional) and five design principles were developed using Design Science Research (Hevner et al., 2004). Problem identification drew on systematic literature analysis spanning 1964 to 2025. Solution design synthesized systems theory (Luhmann, 1979), resilience engineering (Hollnagel et al., 2006), and sociotechnical systems research. Evaluation used case analysis of real-world trust phenomena: the Swiss e-ID referendum (institutional trust failure), contrasting AI marketing approaches by Coca-Cola and Apple (brand trust divergence), and governance failures exemplified by the Deloitte Australia incident (governance trust collapse).

Phase 2: Framework Construction (Grounded-Theory Literature Review)

The framework’s four layers were operationalized into a structured multi-level classification scheme of 10 constructs and 124 trust cues using a grounded-theory literature review (Wolfswinkel et al., 2013). The procedure adapts the three-phase coding process (Strauss and Corbin, 1998) to a defined interdisciplinary corpus. Throughout the rest of this chapter, the term framework refers to this classification scheme. The word ontology is reserved for future formalization per Gruber (1993), which is identified as follow-on work (see Limitations).

A corpus of 34 primary sources spanning seven disciplines (organizational psychology, information systems, economics, governance, resilience engineering, social psychology, and human-computer interaction) was coded through three sequential phases. A conceptual coverage plateau was reached at source 25 of 34, after which no new categories emerged from the remaining 9 sources.

Limitations and Future Work

This chapter establishes construct validity through theoretical grounding and internal consistency checking. It does not claim predictive validity. Known limitations:

- Not a PRISMA systematic review. Source selection was purposive from the interdisciplinary trust literature. Any missed source would need to establish a 16th axial category to change the framework’s structure; the conceptual coverage plateau at source 25 provides a principled stopping criterion.

- Single-coder analysis. Coding was performed by a single researcher. The documented constant-comparison protocol and paradigm mapping provide partial mitigation, but formal inter-rater reliability cannot be reported. Expert panel validation (Delphi method) and independent dual coding of a random source subset are identified as priorities for future work.

- Role of the author’s prior work. The R1-R5 framework that organized the literature selection is articulated in prior work by the author (Glinz, 2015, 2025, 2026). These prior articulations are cited as announcement documents; the theoretical weight is carried by external, independently validated sources (Mayer et al., 1995; McKnight et al., 2002; Lankton et al., 2015; Sollner et al., 2016; and others). The R1-R5 framework is a selection scaffold, not a load-bearing theoretical contribution claimed by the present chapter.

- Governance construct derivation. The Governance, Resilience and Assurance construct was developed through a design-science synthesis (Hevner et al., 2004) of four external frameworks: NIST AI RMF 1.0 (2023), the EU AI Act (2024), the IIA Three Lines Model (2020), and resilience engineering (Hollnagel, Woods, and Leveson, 2006). Prior author contributions articulated the synthesis; the external frameworks carry the theoretical weight. Independent external validation of the governance cues is identified as a priority for future work.

- Conceptual coverage, not theoretical saturation. The corpus reached a conceptual coverage plateau at source 25 of 34. This is not saturation in the Glaserian sense, which would require iterative theoretical sampling driven by ongoing analysis.

- Construct, not formal ontology. The work is a multi-level classification scheme, not a formal ontology in the Gruber (1993) / Guarino and Welty (2002) sense. Formal axiomatization, OntoClean metaproperty analysis, and OWL/RDFS representation are identified as future work.

- No predictive validation. Whether the framework predicts real-world trust outcomes (user behavior, market impact, regulatory action) has not been tested and requires separate empirical investigation. Preliminary evidence from adjacent trust literature (Beldad, de Jong, and Steehouder, 2010; Kim, Ferrin, and Rao, 2008; Kaplan, Kessler, Brill, and Hancock, 2023, Human Factors) is consistent with the above/below waterline distinction but does not constitute direct validation of the present framework.

LLM-assistance disclosure. Draft composition and copy-editing of this chapter used Claude (Anthropic) and ChatGPT (OpenAI). All factual claims, source attributions, and analytical decisions were verified against primary sources by the author. Coding decisions were made by the author, not the LLM. The author takes full responsibility for the final text.

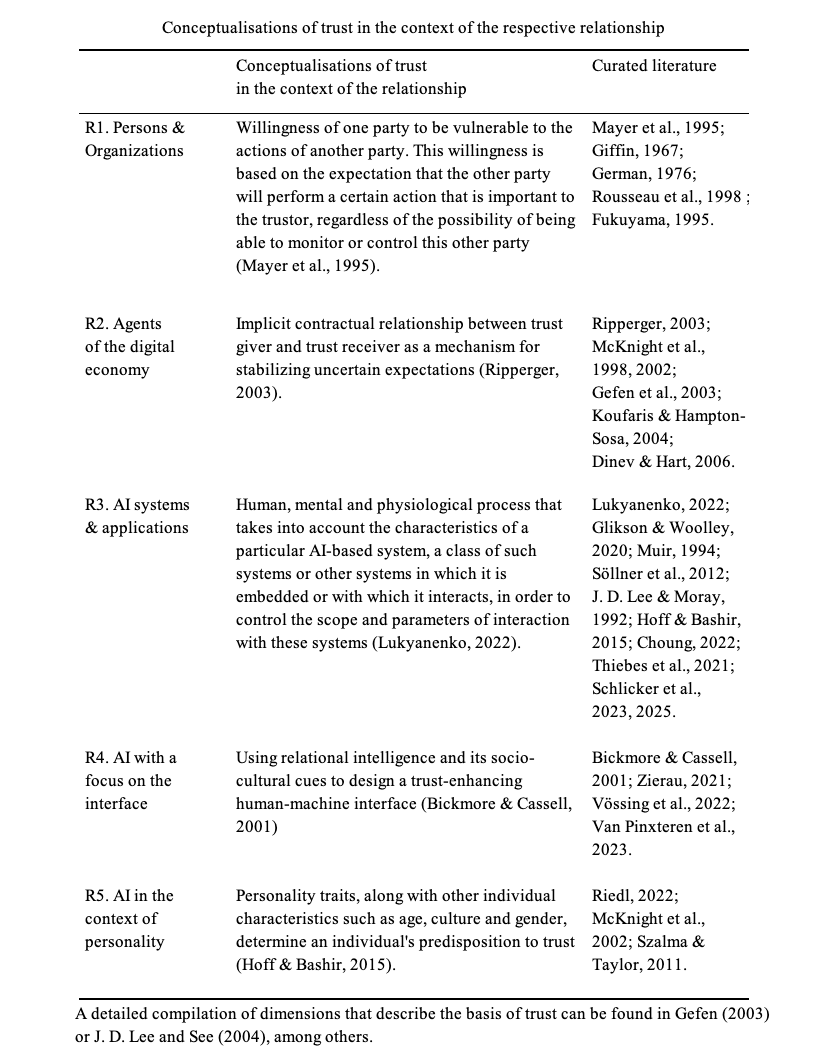

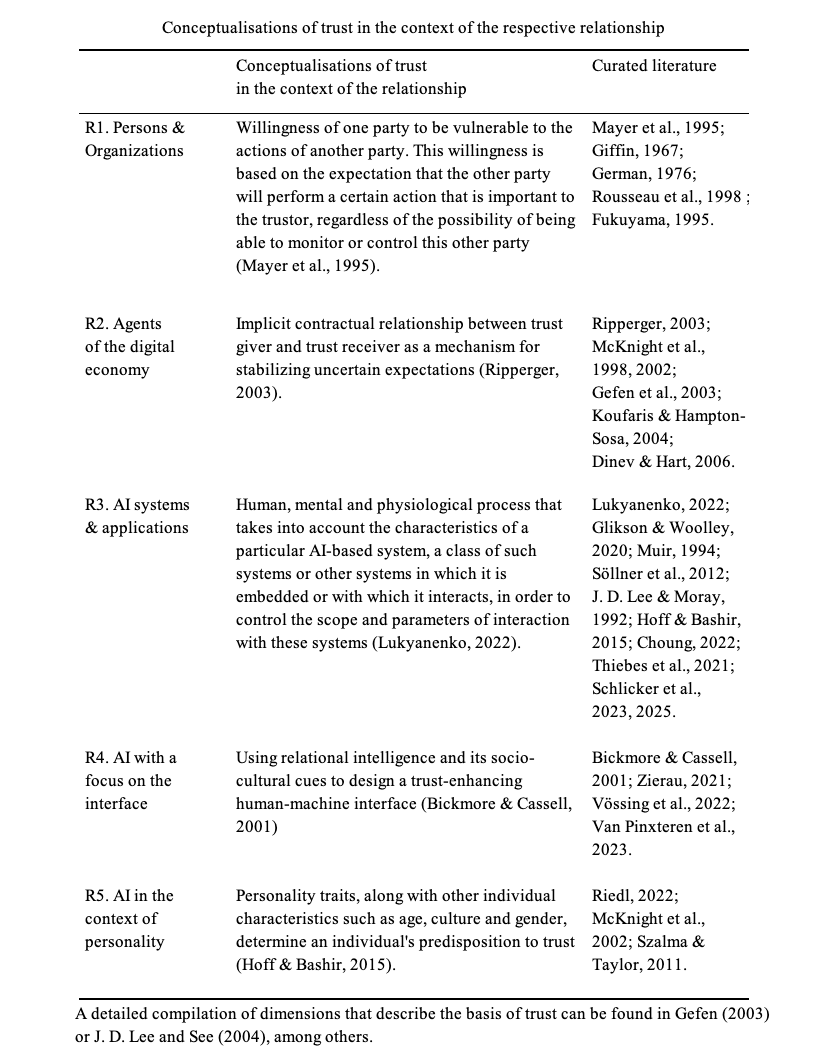

The Literature Corpus: 34 Sources Across Five Trust Conceptualizations

The model draws on 34 primary academic sources spanning 1964 to 2025, organized by five distinct trust conceptualizations (R1 through R5). This structure ensured that no single discipline dominated the analysis and that all types of digital trust relationships were represented. Each conceptualization required at least two sources: a definitional anchor and at least one empirical or review study. An additional 17 cross-cutting frameworks (EU AI Act, NIST AI RMF, IIA Three Lines Model, WEF Earning Digital Trust, ISACA DTEF, and others) were referenced for specific constructs but not coded line-by-line.

| Trust conceptualization | Sources | Focus | Primary sources |
| --- | --- | --- | --- |
| Trust in Persons and Organizations | 8 | Foundational interpersonal trust: competence, benevolence, integrity, social exchange, signaling. | Mayer et al. (1995), McAllister (1995), Blau (1964), Spence (1973), Fukuyama (1995), Rousseau et al. (1998), Giffin (1967), Deutsch (1976). |
| Trust in Digital Economy Agents | 10 | E-commerce trust typology, institution-based trust, empirical cue validation. | McKnight et al. (2002), Hoffmann et al. (2014), Gefen et al. (2003), Dinev and Hart (2006), Pavlou and Gefen (2004), Ripperger (2003), McKnight et al. (1998), Gefen (2000), Koufaris and Hampton-Sosa (2004), Hendrikx et al. (2015). |
| Trust in AI Systems | 10 | AI governance, resilience engineering, trustworthiness assessment (TrAM). | Schlicker et al. (2025a, 2025b), Hollnagel et al. (2006), NIST (2023), Glikson and Woolley (2020), Lukyanenko et al. (2022), Muir (1994), Hoff and Bashir (2015), Choung et al. (2022), Thiebes et al. (2021). |
| Trust in the Interface | 4 | Relational intelligence, rapport-building, anthropomorphic trust activation. | Bickmore and Cassell (2001), Zierau et al. (2021), Vossing et al. (2022), Van Pinxteren et al. (2019). |
| Trust and Personality | 2 dedicated + 4 cross-contributing | Individual differences, dispositional trust, personality-trust links. | Riedl (2022), Szalma and Taylor (2011). Cross-contributing: McKnight et al. (2002), Hoffmann et al. (2014), Hoff and Bashir (2015). |
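The per-conceptualization counts can be checked against the stated corpus size and the two-source minimum. The snippet below is a simple arithmetic sketch. The dictionary keys are just labels for this example, and only dedicated sources are counted, on the assumption that cross-contributing sources are already counted under their home conceptualization.

```python
# Dedicated primary-source counts per conceptualization, copied from the
# breakdown above. Cross-contributing sources are not counted a second time.
dedicated_sources = {
    "Trust in Persons and Organizations": 8,
    "Trust in Digital Economy Agents": 10,
    "Trust in AI Systems": 10,
    "Trust in the Interface": 4,
    "Trust and Personality": 2,
}

# Each conceptualization needs a definitional anchor plus at least one
# empirical or review study.
MIN_SOURCES = 2

total = sum(dedicated_sources.values())
under_minimum = [name for name, n in dedicated_sources.items() if n < MIN_SOURCES]

print(total)          # 34
print(under_minimum)  # []
```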

The Coding Process: Three Sequential Phases

Open Coding

Each of the 34 primary sources was read in full. For every trust-relevant concept encountered, a code was created with a label, definition, source passage, and discipline of origin. This process produced approximately 250 discrete trust-related concepts across seven disciplinary domains.

Axial Coding

Concepts were compared pairwise through constant comparison (Glaser and Strauss, 1967) and grouped into categories based on shared properties and functional relationships. Each category was mapped using Strauss and Corbin’s paradigm model (causal conditions, context, intervening conditions, action strategies, consequences). This phase produced 15 emergent categories.

Selective Coding

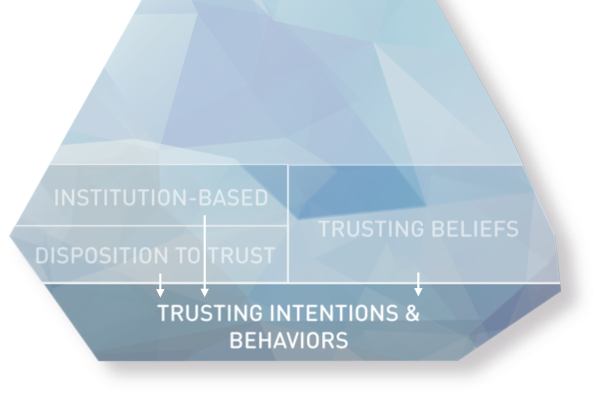

All 15 categories were integrated around the core category of “digital trust formation in sociotechnical systems.” This integration revealed a fundamental distinction: some categories describe observable signals (above the waterline), others describe latent psychological states (below the waterline), one functions as an environmental moderator (the water), and two describe temporal processes (the currents).

From 15 Categories to 10 Constructs

The 15 axial categories were consolidated into the framework’s final architecture through nine documented design decisions. The full decision log, with explicit rationale and reference to the external sources carrying the theoretical weight (Mayer et al., 1995; McKnight et al., 2002; Lankton et al., 2015; Sollner et al., 2016; NIST, 2023; EU AI Act, 2024; Hollnagel et al., 2006; and others), is presented below. Each mapping was justified by empirical evidence, theoretical grounding, or both.

Categories 1-4

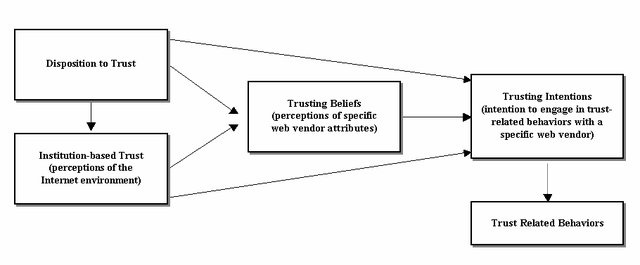

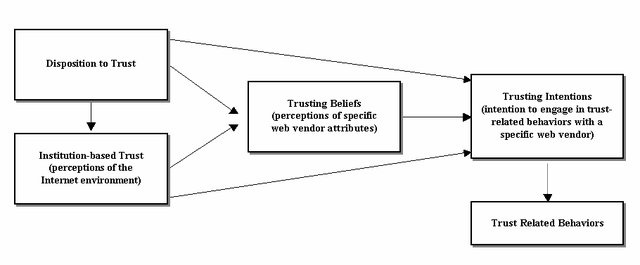

Trustworthiness Beliefs, Dispositional Trust, Institutional Trust, and Trust Intentions became the below-waterline constructs.

Latent psychological states, not directly observable. Faithful to the McKnight et al. (2002) trust typology.

Category 5

Brand and Reputation became Brand, above waterline in the Agency Layer. Grounded in signaling theory (Spence, 1973) and brand-trust research (Chaudhuri and Holbrook, 2001), together with Hoffmann, Lutz, and Meckel (2014), who report that brand cues drive behavioral intentions through a pathway distinct from trusting-beliefs formation.

Category 6

Fair Exchange and Reciprocity became Reciprocity, above waterline in the Agency Layer. Grounded in Blau’s (1964) social-exchange theory and in Hoffmann, Lutz, and Meckel (2014), who report that reciprocity cues have a strong effect on trusting beliefs relative to other cue categories tested. Specific path coefficients should be verified against the primary source; see Limitations.

Categories 7-8

Technical Infrastructure and Social Mechanisms became Technical Trust Infrastructure and Social Trust Mechanisms, above waterline in the Engineering Layer. Following Sollner et al.’s (2016) distinction between technology-mediated and socially-mediated trust.

Category 9

Governance, Resilience, Accountability became an above-waterline Governance Layer. Organized into three sub-dimensions: Adaptive Governance, Organizational Resilience, and Continuous Digital Assurance.

Category 10

Perceived Risk became the Contextual Moderation Layer at the waterline. Risk is a property of the situation, not of the trustor or trustee (Mayer et al., 1995). Extended with four contextual parameters: Risk Magnitude, Cultural Trust Radius, Domain Sensitivity, and User Segment.

Category 11

Affective Trust became Affective Trusting Beliefs (ATB), below waterline. Cognition-based and affect-based trust are empirically distinct constructs that follow different pathways and predict different outcomes (McAllister, 1995; Glikson and Woolley, 2020).

Categories 12-13

Trust Dynamics and Trust Repair became the Dynamic Process Layer, a temporal overlay comprising three processes: Formation, Calibration, and Repair. These are not static constructs but dynamic forces that continuously reshape the iceberg.

Categories 14-15

Distrust and AI-Specific Dimensions were distributed across existing constructs. Distrust was operationalized via the Trust State Vector (tracking trust and distrust independently on each Mayer dimension). AI-specific dimensions were integrated through the dual-lens TB architecture and distributed cues across R, B, TI, and GOV.
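To make the idea of tracking trust and distrust independently more concrete, the following is a minimal sketch of what a Trust State Vector could look like. It is an illustration under stated assumptions, not the framework's specification: the class layout, the [0, 1] score range, and the additive update rule are all hypothetical.

```python
from dataclasses import dataclass, field

# Trustworthiness dimensions from Mayer et al. (1995); the chapter refers to
# the first one as "competence".
DIMENSIONS = ("competence", "benevolence", "integrity")


def _zeros() -> dict[str, float]:
    return dict.fromkeys(DIMENSIONS, 0.0)


@dataclass
class TrustStateVector:
    """Illustrative only: trust and distrust kept as separate scores in [0, 1]."""
    trust: dict[str, float] = field(default_factory=_zeros)
    distrust: dict[str, float] = field(default_factory=_zeros)

    def update(self, dimension: str, trust_delta: float = 0.0,
               distrust_delta: float = 0.0) -> None:
        """Shift one dimension; the two scores move independently of each other."""
        if dimension not in DIMENSIONS:
            raise ValueError(f"unknown dimension: {dimension}")
        self.trust[dimension] = min(1.0, max(0.0, self.trust[dimension] + trust_delta))
        self.distrust[dimension] = min(1.0, max(0.0, self.distrust[dimension] + distrust_delta))


state = TrustStateVector()
state.update("competence", trust_delta=0.7)    # the system performs well
state.update("integrity", distrust_delta=0.6)  # undisclosed data sharing comes to light
print(state.trust)
print(state.distrust)
```

Keeping two scores per dimension lets a user be recorded as trusting a system's competence while distrusting its integrity, which a single net score would hide.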

Conceptual Coverage Assessment

A critical question for any literature-based classification scheme is: how do we know the corpus was sufficient? This study does not claim theoretical saturation in the Glaserian sense (which would require iterative theoretical sampling driven by ongoing analysis). Instead, the answer lies in a conceptual coverage plateau: the point at which additional sources no longer produce new axial categories.

In this study, category emergence plateaued at source 25 of 34 (Hollnagel, Woods and Leveson, 2006), at which point all 15 axial categories had been established. The subsequent 9 sources (Schlicker et al. 2025a/b, Bickmore and Cassell, Zierau et al., Vossing et al., Van Pinxteren et al., Riedl, Szalma and Taylor) enriched existing categories with new properties and dimensions, but no new categories emerged.

This trajectory is consistent with the thematic-plateau pattern reported by Guest, Bunce and Johnson (2006), in which new themes typically cease to emerge between 12 and 30 sources. A complementary empirical coverage assessment was performed: the L2 cue taxonomy was applied to a database of 1,500+ real-world trust incidents, showing that the cues are sufficient to describe the diversity of observed trust violations across industries and geographies. This is a coverage check of the taxonomy, not a predictive validation of the framework; the incidents were classified using the same taxonomy, so the check speaks to classificatory adequacy rather than to external predictive validity. Independent predictive validation (structural equation modeling, prospective behavioral studies) is identified as a priority for future work (see Limitations).

The source-by-category emergence matrix documents a conceptual coverage plateau for the 15 categories and 10 constructs with respect to the reviewed corpus. This is evidence of comprehensiveness relative to the literature sampled; it is not a claim of theoretical saturation in the Glaserian sense, nor of external predictive validity.
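The plateau point can be read off a source-by-category emergence record with a few lines of code. The sketch below assumes each source is represented by the set of categories it contributes to, in coding order. The toy data and category labels are placeholders, not the chapter's actual coding data.

```python
def coverage_plateau(categories_by_source: list[set[str]]) -> int:
    """Return the 1-based position of the last source that added a new category.

    Returns 0 if no source introduces any category.
    """
    seen: set[str] = set()
    last_new = 0
    for position, categories in enumerate(categories_by_source, start=1):
        if categories - seen:  # at least one category not seen before
            last_new = position
        seen |= categories
    return last_new


# Toy corpus of six sources; labels are placeholders for axial categories.
toy_corpus = [
    {"A", "B"},
    {"B", "C"},
    {"A"},
    {"D"},
    {"C", "D"},
    {"A", "B"},
]
print(coverage_plateau(toy_corpus))  # 4
```

In the toy corpus the fourth source is the last to introduce a category, so sources five and six only enrich what is already there. The result depends on the order in which sources are coded, which is one reason the chapter treats the plateau as a coverage indicator rather than as saturation.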

Trust is not generated through a single mechanism; it arises from the interaction of human perception, technical architectures, organisational safeguards, and institutional infrastructures. To understand how AI systems can be designed and governed responsibly, trust must be conceptualized as a multi-level socio-technical construct that involves human agency, engineering robustness, governance assurance, and institutional legitimacy. Trust-centric design sits at the intersection of these layers, translating structural guarantees into meaningful user experiences.

Trust-centric design serves as a bridge between human psychology, engineering infrastructure, and institutional governance. It translates deep structural assurances (identity, provenance, oversight) into signals that users can understand intuitively, making trust both visible and tangible. Effective trust-centric design requires clarity about authorship, contextual transparency, and visible human oversight. It must ensure that users feel empowered and respected, even when interacting with advanced automated systems.

This integrative perspective highlights that trust cannot be achieved through interface design, infrastructure, or regulation alone. Trust emerges only when the agency, engineering, governance, and institutional layers reinforce one another. When design aligns with verifiable architectures and legitimate governance, digital systems gain both emotional credibility and structural reliability.

The combined framework demonstrates that trustworthy AI requires coordination across three interdependent layers.

Human trust is strengthened when emotional cues align with structural assurances. Engineering trustworthiness is meaningful only when users understand and experience it. Institutional legitimacy is anchored in both broader societal expectations and long-term governance.

Perceived risk is not a construct in the framework but the environmental moderator that makes the entire model meaningful. In the iceberg metaphor, perceived risk is the water.

Just as seawater surrounds the iceberg and determines what is visible above the surface, the contextual environment surrounds every trust assessment. The water is not uniform. A warm current lowers the waterline, exposing more of the iceberg: in high-trust cultures, users accept cues at face value and require less verification. A cold current raises the waterline, submerging more of the structure beneath scrutiny: in low-trust environments, users demand verifiable evidence before extending trust. The depth of the water represents the stakes involved. The salinity reflects domain-specific expectations. And the currents represent the processing patterns of different user segments.

The water around the iceberg has four measurable properties that modulate how cues are perceived and weighted:

Risk Magnitude

Cue scrutiny depth. Higher risk means more cues are examined and higher thresholds are required. In low-risk situations (shallow water), most of the iceberg is visible: users do not scrutinize trust cues carefully. In high-risk situations (deep water), users examine every available cue (Mayer et al., 1995; Kim et al., 2008).

Cultural Trust Radius

Which cue categories are weighted. High-trust-radius cultures weight Brand more heavily and accept institutional assurance more readily. Low-trust-radius cultures weight Governance and Technical Trust Infrastructure cues, demanding verifiable evidence over reputation (Fukuyama, 1995; Hofstede, 2001).

Domain Sensitivity

Cue threshold levels. Healthcare and financial services demand higher Governance and Technical Trust Infrastructure cue satisfaction than entertainment or social media (Bart et al., 2005).

User Segment

Cue processing mode. Digital natives use heuristic processing (relying on Brand and design quality); digital immigrants use systematic processing (scrutinizing Reciprocity and Technical Trust cues). Age, technology experience, and digital literacy modulate which cues are detected and how they are weighted (Hoffmann et al., 2014).
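One way to picture how the four parameters modulate cue perception is as a function from context to construct weights and a scrutiny threshold. The sketch below is purely illustrative. The `Context` fields, the [0, 1] scaling, and every coefficient are assumptions made for this example and are not values from the framework or from the cited studies.

```python
from dataclasses import dataclass

CONSTRUCTS = ("reciprocity", "brand", "technical", "social", "governance")


@dataclass(frozen=True)
class Context:
    """Hypothetical encoding of the four contextual parameters."""
    risk_magnitude: float      # 0 = trivial stakes, 1 = severe stakes
    trust_radius: float        # 0 = low-trust-radius culture, 1 = high
    domain_sensitivity: float  # 0 = entertainment, 1 = healthcare or finance
    heuristic_user: bool       # True = heuristic processing, False = systematic


def cue_weights(ctx: Context) -> dict[str, float]:
    """Return normalized construct weights; every coefficient is an assumption."""
    w = dict.fromkeys(CONSTRUCTS, 1.0)
    # Cultural Trust Radius: a high radius favours Brand, a low radius favours
    # Governance and Technical Trust Infrastructure.
    w["brand"] += ctx.trust_radius
    w["governance"] += 1.0 - ctx.trust_radius
    w["technical"] += 1.0 - ctx.trust_radius
    # Domain Sensitivity: sensitive domains demand more Governance and
    # Technical Trust Infrastructure cues.
    w["governance"] += ctx.domain_sensitivity
    w["technical"] += ctx.domain_sensitivity
    # User Segment: heuristic processing leans on Brand, systematic processing
    # on Reciprocity and Technical Trust cues.
    if ctx.heuristic_user:
        w["brand"] += 0.5
    else:
        w["reciprocity"] += 0.5
        w["technical"] += 0.5
    total = sum(w.values())
    return {name: value / total for name, value in w.items()}


def scrutiny_threshold(ctx: Context) -> float:
    """Risk Magnitude: higher stakes raise the bar a cue must clear (assumed linear)."""
    return 0.3 + 0.6 * ctx.risk_magnitude


banking = Context(risk_magnitude=0.9, trust_radius=0.2,
                  domain_sensitivity=1.0, heuristic_user=False)
streaming = Context(risk_magnitude=0.1, trust_radius=0.8,
                    domain_sensitivity=0.0, heuristic_user=True)

for label, ctx in (("banking", banking), ("streaming", streaming)):
    print(label, cue_weights(ctx), scrutiny_threshold(ctx))
```

Under these assumed coefficients, the banking context puts more weight on Governance than on Brand and sets a higher threshold, while the streaming context does the opposite. Any real calibration would have to come from empirical data.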

With the water properties defined, we can now examine the ice itself. The five above-waterline constructs represent the observable trust signals that organizations can design, deploy, and measure. They are grouped into three architectural layers: the Agency Layer (Reciprocity and Brand, shaped by human experience), the Engineering Layer (Technical Trust Infrastructure and Social Trust Mechanisms, shaped by system design), and the Governance Layer (organizational oversight and assurance). Each construct carries a set of L2 cues that function as specific, actionable trust signals.

The iceberg model suggests five clusters of trust cues that sit at the tip of the iceberg: Reciprocity, Brand, Technical Trust Infrastructure, Social Trust Mechanisms, and Governance cues. Each cluster holds a set of trust signals or trust design patterns that marketing professionals can consider to engender trust. Chapter Two and the discussion of the principal-agent problem highlight the importance of trust cues in online transactions. “Perceptions of a trust cue trigger preestablished cognitive and affective associations in the user’s mind, which allows for speedy information processing” (Hoffmann et al., 2015, 142).

The iceberg framework identifies, for each above-waterline trust construct, a set of trust cues (between 17 and 23 per construct, totaling 124 across all ten constructs). The list was developed by first reviewing existing frameworks from the research literature and then integrating contemporary digital trust considerations. We applied a constant-comparison protocol (Glaser and Strauss, 1967; Charmaz, 2006) to group cues into five distinct constructs, testing construct boundaries so that each cue has a distinct scope within its construct and the set of cues covers the major aspects of digital trust identified in the corpus. This is an internal consistency check, not a claim of formal ontological axiom checking in the Gruber (1993) or Guarino and Welty (2002) sense. This method combines established ideas (such as transparency, warranties, and community moderation) with newer considerations (such as AI disclosures and quantum-safe encryption).

However, the list has limitations. It represents a snapshot in time and may not capture every emerging cue as digital trust evolves. Additionally, while the MECE framework helps clarify categories, some cues (e.g., 3rd party data sharing or sustainability commitments) can naturally span multiple constructs depending on context, and decisions on allocation involve some subjective judgment. This means the list should be seen as a flexible starting point for discussion rather than an exhaustive, immutable taxonomy.

Reciprocity:

Reciprocity is a social construct that describes the act of rewarding kind actions by responding to a positive action with another positive action. The benefits to be gained from transactions in the digital space originate in the willingness of individuals to take risks by placing trust in others who are regarded to act competently, benevolently and morally. A fair degree of reciprocity in the exchange of data, money, products and services reduces users’ concerns and eventually induces trust (Sheehan/Hoy, 2000). A user who provides personal data to an online service, actively or passively, perceives this as an exchange input and expects an outcome of adequate value. A fair level of reciprocity is reached through the transparent exchange of information for appropriate compensation. The table below shows the most relevant signals or strategic elements that establish positive reciprocity.

THE 20 RECIPROCITY CUES (R01-R20)

R01: Value and Fair Pricing | R02: Exchange Transparency | R03: Accountability and Liability | R04: Terms, Pricing and Subscription Transparency | R05: Warranties and Guarantees | R06: Customer Service and Support | R07: Delivery and Fulfillment Excellence | R08: Refund, Return and Cancellation | R09: Recognition and Rewards | R10: Error and Breach Handling | R11: Dispute Resolution and Mediation | R12: User Education and Guidance | R13: Acknowledgment of Contributions | R14: Micropayments and In-App Transparency | R15: Algorithmic Fairness and Non-Discrimination | R16: Proactive Issue Resolution | R17: Informed Defaults | R18: Data Reciprocity | R19: AI Explanation Reciprocity | R20: Privacy-Value Exchange Visibility

R01: Value & Fair Pricing

A business needs to offer fair reciprocal benefits directly relevant to the data it collects and stores. If the business uses information not necessary to the service being provided, additional compensation must be considered. Because of their bounded rationality, consumers are often likely to trade off long-term privacy for short-term benefits.

Eventually, trust is about encapsulated interest, a closed loop of each party’s self-interest.

Ensuring users/customers receive clear, tangible benefits (value) at a reasonable or transparent cost.

Engender: Users feel respected when transparent pricing aligns with the value delivered.

Erode: Hidden fees, overpriced tiers, or unclear costs can drive user frustration and distrust.

R02: Transparency & Explainability

Fair and open information practices are essential enablers of reciprocity. Users must be able to quickly find all relevant information. This leads to a reduction in actual or perceived information asymmetry. Customer data advocacy can require altruistic information practices.

Disclosing policies, processes, and decision‐making (e.g., algorithms) clearly so users understand how outcomes or recommendations are reached. This includes fairness and transparency regarding third-party data sharing.

Engender: Users appreciate open communication, which reduces suspicion.

Erode: Opaque “black box” operations lead people to suspect manipulation or unfair treatment.

R03: Accountability & Liability

Users expect that access to their data will be used responsibly and in their best interests. If a company cannot meet these expectations or if an unfavourable incident occurs, businesses must demonstrate accountability. This requires processes and organizational precautions that enable quick, responsible responses.

Compliance is either a state of being in accordance with established guidelines or specifications, or the process of becoming so. The definition of compliance can also encompass efforts to ensure that organizations abide by industry regulations and government legislation.

In an age when platforms offer branded services without owning physical assets or employing the providers (e.g., Uber doesn’t own cars and doesn’t employ drivers), issues of accountability are increasingly complex. Transparency and commitment to accountability are increasingly strong indicators of trust.

Being upfront about who is responsible when things go wrong and having mechanisms in place to take corrective action.

Engender: Owning mistakes and compensating users when appropriate builds trust.

Erode: Shifting blame or hiding mishaps erodes confidence and loyalty.

R04: Terms & Conditions (Legal Clarity)

Standard legal information, such as Terms and Conditions and security and privacy policies, must be made proactively accessible. Users need to be informed about the information collected and used. The consistency of this content over time is an important signal that helps build trust.

Clearly stated user agreements, disclaimers, and legal obligations define the formal relationship between the company and the user.

Engender: Straightforward T&Cs (short, plain language) help users feel informed.

Erode: Long, incomprehensible, or deceptive “fine print” fosters suspicion.

R05: Warranties & Guarantees

Warranties and guarantees support the perception of fair reciprocity and, therefore, signal trustworthiness. Opportunistic behaviour will entail expenses for the agent.

Commitments ensuring quality or functionality of products/services, often with money‐back or replacement policies.

Engender: Demonstrates company confidence in their offering, signaling reliability.

Erode: Denying legitimate warranty claims or offering poor coverage breaks trust.

R06: Customer Service & Support

Pre- and after-sales service, as well as any other touch point that allows a user to contact an agent, is a terrific opportunity to shape the customer experience. Failures in this strategic element are penalized with distrust and unfavourable feedback.

Reliability is relatively easy to demonstrate online. It is critical to respond quickly to customer requests.

Responsive, empathetic help channels (phone, chat, email) that address user questions and problems effectively.

Engender: Timely, helpful support reassures users that the company cares about them.

Erode: Unresponsive or unhelpful support creates frustration and alienation.

R07: Delivery & Fulfillment Excellence

Reliability, speed, and accuracy in delivering digital or physical products/services to end users.

Engender: Meeting (or exceeding) delivery promises confirms reliability.

Erode: Late or missing deliveries, or misleading timelines, undermine user confidence.

R08: Refund, Return & Cancellation Policies

Fair and user‐friendly processes for returns, refunds, or canceling subscriptions.

Engender: Demonstrates respect for user choice, reduces perceived risk.

Erode: Excessive hurdles, restocking fees, or strict no‐refund policies create mistrust.

This trust cue refers to the concept of social capital: the connections among individuals, that is, social networks and the norms of reciprocity and trustworthiness that arise from them. The cue is similar to social translucence (see the category “Social Trust Mechanisms”). However, it emphasizes collective value rather than the social impact of certain behaviours.

R09: Recognition & Rewards

Loyalty programs, badges, and acknowledgment systems that reward continued engagement and signal that the organization values returning users.

R10: Error & Breach Handling

Transparent, timely communication when things go wrong, combined with clear remediation steps and genuine accountability for failures.

R11: Dispute Resolution & Mediation

Accessible, fair processes for resolving disagreements between users and the organization, including escalation paths and independent mediation options.

R12: User Education & Guidance

Proactive efforts to help users understand how data is used, how services work, and how to make informed decisions about their engagement.

R13: Acknowledgment of Contributions

Recognition of user feedback, content contributions, and community participation as valued inputs rather than free labor.

R14: Micropayments & In-App Transparency

Clear disclosure of costs associated with in-app purchases, premium features, and subscription tiers, avoiding surprise charges or confusing pricing structures.

R15: Algorithmic Fairness & Non-Discrimination

Commitment to ensuring that automated decisions do not systematically disadvantage particular groups based on protected characteristics.

R16: Proactive Issue Resolution

Anticipating and addressing potential problems before users encounter them, rather than waiting for complaints to trigger reactive fixes.

R17: Informed Defaults

Setting default configurations that protect user interests (privacy-preserving, data-minimizing) rather than maximizing organizational data extraction.

R18: Data Reciprocity

Providing users with tangible value derived from the data they share, closing the loop between data provision and benefit delivery.

R19: AI Explanation Reciprocity

When AI systems influence user outcomes, providing proportionate explanations that match the stakes involved in the decision.

R20: Privacy-Value Exchange Visibility

Making the trade-off between personal data provision and service benefits explicit and comprehensible, so users can make informed consent decisions.

Brand

A second powerful signal promoting trusting beliefs is an entity’s brand. A company makes a specific commitment when investing in its brand, reputation, and awareness. Since brand building is a costly endeavor, consumers perceive this signal as very trustworthy. Capital invested in a brand can be considered a stake at risk with every customer interaction and transaction. Whether an investment pays off or is lost depends heavily on a company’s true competency. Strategic elements such as brand recognition, image, and website design should trigger associations in the user’s cognitive system, prompting a feeling of familiarity.

“Brands arose to compensate for the dehumanizing effects of the Industrial Age” (Rushkoff, 2017). They are essentially symbols of origin and authenticity.

THE 18 BRAND CUES (B01-B18)

B01: Brand Ethics and Moral Values | B02: Brand Image and Reputation | B03: Recognition and Market Reach | B04: Familiarity and Cultural Relevance | B05: Personalization | B06: Brand Story and Narrative | B07: Design Quality and Aesthetics | B08: Consistency and Cohesion | B09: Heritage and Longevity | B10: Cultural Impact | B11: Localized Expressions | B12: Purpose and Mission | B13: Branded Experiences | B14: ESG Commitments | B15: Costly Signal Investment | B16: AI Model Provenance | B17: Developer Reputation | B18: Digital Experience Innovation

B01: Brand Ethics & Moral Values

The moral stance a brand publicly claims and consistently upholds (e.g., integrity, fairness, honesty).

Engender: Strong ethical standards reassure users of brand integrity.

Erode: Ethical lapses (cover‐ups, scandals) quickly destroy trust and cause reputational damage.

B02: Brand Image & Reputation

The identity of a company triggers associations that together constitute the brand image. It is the impression in the consumers’ minds of a brand’s total personality. Brand image is developed over time through advertising campaigns with a consistent theme and is authenticated through the consumers’ direct experience.

In the digital age, brands must focus on delivering authentic experiences and get comfortable with transparency.

Overall public perception of the brand’s character, reliability, and standing in the market.

Engender: Consistent positive image fosters user loyalty and pride in association.

Erode: Negative PR or repeated controversies undermine confidence.

B03: Recognition & Market Reach

Brand recognition is the extent to which a consumer or the general public can identify a brand by its attributes, such as its logo, tagline, packaging, or advertising campaign. Frequent exposure has been shown to elicit positive feelings towards the brand stimulus.

Consumers are more willing to rely on large and well-established providers. Digital consumers prefer brands with a broad reach. Search engine marketing is a relevant element that influences a brand’s relevance and reach. Many new business models rely on a competitive advantage in the ability to generate leads through search engine optimization (SEO) and search engine advertising (SEA).

The degree to which the brand is widely known and recognized across regions and demographics.

Engender: Familiarity can reduce perceived risk and enhance trust.

Erode: If scandals or controversies accompany a wide reach, broad exposure amplifies distrust.

B04: Familiarity & Cultural Relevance

Design Patterns & Skeuomorphism: Skeuomorphism makes interface objects familiar to users by using concepts they recognize. It relies on objects that mimic their real-world counterparts in how they appear and/or how the user can interact with them. A well-known example is the recycle bin icon used for discarding files.

California Roll principle: The California Roll is a type of sushi developed to familiarize Americans with unfamiliar food. People don’t want something truly new; they want the familiar done differently.

Privacy by Design advances the view that the future of privacy cannot be assured solely by compliance with legislation and regulatory frameworks; rather, privacy assurance must become an organization’s default mode of operation. It is an approach to systems engineering that takes privacy into account throughout the whole engineering process. Privacy needs to be embedded by default throughout the architecture, design, and construction of the processes.

Design Thinking describes a paradigm, not a method, for innovation. It integrates human, business, and technical factors into problem formulation, problem solving, and design. The user-centred (human-centred) approach to design has been extended beyond user needs and product specification to include the “human-machine-experience”.

How naturally the brand’s products/services fit into local customs, language, and user contexts.

Engender: Users resonate with solutions tailored to their cultural norms.

Erode: Ignoring cultural nuances can lead to alienation or offense.

B05: Personalized Brand Experience

Digital customers are very demanding about the relevance of a product, service, or piece of information. Mass customization and personalization foster a good customer experience. The ability to process large amounts of data enables individualized transactions and aligns production with pseudo-individual customer requirements.

Through personalization, a customer feels like they are being treated as a segment of one. Service providers can offer individual solutions and thereby increase perceived competency by providing better, faster access to relevant information. Personalization works best in markets with fragmented customer needs.

Tailoring brand touchpoints (marketing, app interactions) so users feel recognized as individuals.

Engender: Users appreciate relevant, personal engagement.

Erode: Over‐personalization or privacy intrusions can feel creepy or manipulative.

B06: Brand Story & Narrative

Ever since the days of bonfires and cave paintings, humans have used storytelling to foster social bonding. Content marketing and storytelling done right are elemental means to engender trust. Well-constructed narratives attract attention and build an emotional connection. As trust in media, organizations, and institutions diminishes, stories offer an underappreciated vehicle for fostering these connections and, eventually, establishing credibility. Stories that build trust are genuine, authentic, transparent, meaningful, and familiar.

The brand’s history, origins, and overarching story that communicates purpose and authenticity.

Engender: A compelling, consistent narrative humanizes the brand and builds empathy.

Erode: Contradictions between stated story and actual practice (greenwashing, etc.) damage credibility.

B07: Design Quality & Aesthetics

The quality of a brand’s digital presence (web, mobile, etc.) can foster brand reputation and enhance brand recognition. High site quality signals that the company has the required level of competence.

Important design qualities include usability, accessibility, and the resulting user experience.

A fundamental paradigm guiding the design process is “Privacy by Design”:

Privacy by Design advances the view that the future of privacy cannot be assured solely through compliance with legislation and regulatory frameworks; rather, privacy assurance must become an organization’s default mode of operation (privacy by default). It is an approach to systems engineering that takes privacy into account throughout the whole engineering process.

Privacy needs to be embedded by default throughout the architecture, design, and construction of the processes. The demonstrated ability to secure and protect digital data needs to be part of the brand identity.

Done right, this design principle increases the perception of security. This refers to the perception that networks, computers, programs, and, in particular, data are always protected from attack, damage, or unauthorized access.

The visual identity and user experience design that shape a recognizable “look and feel.”

Engender: High‐quality design suggests professionalism and attention to detail.

Erode: Shoddy, inconsistent, or dated design signals carelessness or lack of refinement.

B08: Brand Consistency & Cohesion

Uniform messages, tone, and imagery across all channels (web, mobile, social media, physical stores).

Engender: Consistency implies reliability and coherence.

Erode: Inconsistent experiences (conflicting statements or design) can confuse and unsettle users.

B09: Heritage & Longevity

The brand’s history, track record, and sustained market presence as evidence of enduring reliability and institutional commitment.

B10: Cultural Impact

The brand’s influence on broader cultural conversations, social movements, or industry standards beyond its immediate commercial footprint.

B11: Localized Expressions

Adaptation of brand messaging, visual identity, and product offerings to resonate with local customs, languages, and cultural expectations.

B12: Purpose & Mission

A clearly articulated organizational purpose that connects commercial activity to broader social or environmental goals, providing users with values-based reasons to trust.

B13: Branded Experiences

Immersive, exclusive, or memorable interactions (events, limited editions, flagship experiences) that deepen emotional connection and create shared identity.

B14: ESG Commitments

Demonstrated environmental, social, and governance commitments backed by measurable targets, transparent reporting, and third-party verification.

B15: Costly Signal Investment

Visible, verifiable investments in brand quality, infrastructure, or community that would be economically irrational for a dishonest actor to make (Spence, 1973).

B16: AI Model Provenance

Transparent disclosure of who developed an AI model, what data it was trained on, and under what governance framework it operates.

B17: Developer Reputation

The track record, public credibility, and ethical standing of the individuals and organizations responsible for building and maintaining the AI system.

B18: Digital Experience Innovation

Demonstrated investment in novel digital interactions (immersive interfaces, accessibility features, cross-platform consistency) that signal technical competence and user-centric design.

Human perception alone cannot sustain digital trust without robust technical foundations. The engineering layer conceptualizes trust as a system property that must be measurable, auditable, and verifiable. Technical trust arises from mechanisms such as identity assurance, provenance tracking, cryptographic guarantees, adversarial evaluation, and resilient system architectures. These mechanisms act as Technical Trust Infrastructure, reducing uncertainty, and as Social Trust Mechanisms, preventing harm and misuse.

Modern AI systems introduce distinctive failure modes, including hallucinations, distributional drift, prompt injection, data leakage, and bias amplification, that require continuous evaluation throughout a system’s lifecycle (Antil, 2025; Amodei et al., 2016). Evidence-based controls such as anomaly detection, model monitoring pipelines, data provenance tracking, and bias audits convert trust from a narrative into a demonstrable system attribute.

Distributed digital ecosystems increasingly depend on decentralized trust infrastructures that provide cryptographically verifiable guarantees. Self-Sovereign Identity (SSI), verifiable credentials, and the Trust over IP (ToIP) stack enable privacy-preserving identity exchange and selective disclosure (W3C, 2021; ToIP, 2022). Standards such as C2PA, which enable content to be cryptographically signed at creation, establish provenance, mitigate deepfake-driven misinformation, and preserve epistemic trust online (C2PA, 2022).

Despite the flexibility of large neural models, many trust requirements, including rule consistency, auditability, and transparent reasoning, cannot be ensured by probabilistic systems alone. This limitation has accelerated the adoption of hybrid AI architectures that combine symbolic reasoning, deterministic rule engines, verifiable credential flows, and knowledge graphs with generative models (Marcus, 2020). Such hybrid approaches combine adaptability with predictability, thereby providing the technical reliability demanded in high-stakes or regulated domains.

Technical Trust Infrastructure

Technical Trust Infrastructure is the strategic element that connects the cues processed by the cognitive system with the base of the iceberg. It interfaces with the construct of institution-based trust. It holds innovative strategic elements in the technological space that influence how consumers perceive structural assurance and situational normality. As outlined in Chapter 1, system complexity in a modern digital world increases on a factorial scale. Increasing system complexity, in turn, requires more decentralized control mechanisms (Helbing, 2015). This is why digital consumers must be given more control over their data. It is a matter of time before decentralized user control becomes a legal requirement.

By reinforcing structural assurance and situational normality, the Technical Trust Infrastructure connects to the institution-based trust at the base of the iceberg. Institution-based trust means the user trusts the institution or system because of its supportive structures and the broader context, even if they have no prior personal interaction with it. For instance, a new user on a platform might not yet trust the individual seller (no prior relationship), but if the platform offers strong structural assurances (e.g., escrowed payments, a money-back guarantee) and everything about the site feels “legit” and normal, the user’s institution-based trust is high. The Technical Trust Infrastructure encompasses “technological framework” elements that create this effect. We can think of it as trust by design: it’s about building the trust layer into the digital environment itself.

It’s important to note how the Technical Trust Infrastructure concept reflects a shift toward designing for trust in modern digital strategy. Many companies now invest in features such as two-factor authentication, user control panels for privacy settings, transparent explanations of AI decisions, and consistent user interface guidelines – all of which can be seen as Technical Trust Infrastructure tools that increase users’ confidence in the system. In summary, the Technical Trust Infrastructure is about embedding trustworthiness into the system’s architecture and user experience, so that even unseen processes (such as security algorithms or data-handling practices) translate into a sense of trust for users. Organizations can foster trust by proactively adopting and implementing effective Technical Trust Infrastructure strategies early and with care.

Industry experts increasingly describe digital trust as having two dimensions: explicit and implicit trust (Tölke, 2024). One hypothesis posits that “digital trust = explicit trust × implicit trust”, suggesting that both factors are essential and mutually reinforcing in creating overall trust. While this equation is more conceptual than mathematical, it conveys the idea that if either explicit or implicit trust is zero, the result (digital trust) will be zero.

Digital Trust = Explicit Trust × Implicit Trust

Explicit trust refers to trust that is consciously and deliberately fostered or signaled. It includes any action or information deliberately provided to engender trust. For example, when a platform verifies users’ identities or when a user sees a verified badge or signed certificate, those are explicit trust signals. In access management terms, explicit trust might mean continuously verifying identity and credentials each time before granting access, essentially a “never trust, always verify” approach. An example of explicit trust in practice is a reputation system on a marketplace: a buyer trusts a seller because the seller has 5-star ratings and perhaps a “Verified Seller” badge. That trust is explicitly cultivated through visible data. Another example is an AI system that provides explanations or certifications; a user might trust a medical AI’s recommendation more if an explanation is provided and if the AI model is certified by a credible institution (explicit assurances of trustworthiness).

Implicit trust, on the other hand, refers to trust that is built indirectly or in the background, often without the user’s conscious effort. It stems from the environment and behavior rather than overt signals. Implicit trust typically includes the technical and structural reliability of systems. For instance, a user may not see the cybersecurity measures in place, but if the platform has never been breached and consistently behaves securely, the user develops an implicit trust in it. As one industry report noted, “Implicit trust includes cybersecurity measures to protect digital infrastructure and data from threats such as hacking, malware, phishing and theft” (Tölke, 2024). Users generally won’t actively think “I trust the encryption algorithm on this website,” but the very absence of security incidents and the seamless functioning of security protocols contribute to their trust implicitly. Likewise, consistent user experience and adherence to norms (which tie back to situational normality) build implicit trust. Users feel comfortable and at ease because nothing alarming has happened.

In the field of recommender systems, the distinction between explicit and implicit trust has been studied to improve recommendations (Demirci & Karagoz, 2022). Explicit trust can be something like a user explicitly marking another user as trustworthy (as was possible on platforms like Epinions, where you could maintain a Web-of-Trust of reviewers). Implicit trust can be inferred from behavior: if two users have very similar tastes in movies, the system might infer a level of trust or similarity between them, even if they never explicitly stated it. Demirci & Karagoz found that these two forms of trust information have “different natures, and are to be used in a complementary way” to improve outcomes (2022, p. 444). In other words, explicit trust data is often sparse but highly accurate when available (e.g., an explicit positive rating means strong declared trust), whereas implicit trust can fill in the gaps by analyzing behavior patterns.
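To make the complementary use of the two signals concrete, the following is a minimal sketch and not the method of Demirci & Karagoz. It assumes ratings on a positive scale, infers implicit trust as the cosine similarity over co-rated items, and falls back to that inferred value only when no explicit trust statement exists. The function names and the fallback rule are illustrative assumptions.

```python
from math import sqrt

def implicit_trust(ratings_a, ratings_b):
    """Cosine similarity over items both users rated; 0.0 if nothing overlaps."""
    shared = set(ratings_a) & set(ratings_b)
    if not shared:
        return 0.0
    dot = sum(ratings_a[i] * ratings_b[i] for i in shared)
    norm_a = sqrt(sum(ratings_a[i] ** 2 for i in shared))
    norm_b = sqrt(sum(ratings_b[i] ** 2 for i in shared))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def combined_trust(explicit, ratings_a, ratings_b):
    """Use the declared trust value when present, otherwise the inferred one."""
    if explicit is not None:
        return explicit  # sparse but precise signal
    return implicit_trust(ratings_a, ratings_b)  # dense but noisier signal

# Hypothetical users and ratings (1 to 5 stars)
alice = {"film_1": 5, "film_2": 3}
bob = {"film_1": 5, "film_2": 3, "film_3": 1}
inferred = combined_trust(None, alice, bob)   # no declaration: inferred from behavior
declared = combined_trust(0.2, alice, bob)    # declaration overrides the inference
```

The fallback ordering mirrors the observation that explicit statements are rare but reliable; a production system would more likely blend the two signals than switch between them.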

Applying this back to digital trust broadly: Explicit trust × Implicit trust means that to achieve a high level of user trust, a digital system must provide tangible, visible assurances (explicit cues) and invisible, underlying reliability (implicit factors). If a system has only implicit trust (e.g., it’s very secure and well-engineered) but provides no explicit cues, users might not realize they should trust it. Users may feel uneasy simply because there are no familiar signals, even if it’s trustworthy under the hood. Conversely, if a system has many explicit trust signals but lacks actual implicit trustworthiness, users may be initially convinced, but that trust will erode quickly if something goes wrong. The combination is key: users need to see reasons to trust and also experience consistency and safety that justify that trust.
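The two failure cases described above can be illustrated with a few lines of code. This is a conceptual sketch only: the scores, their range of 0 to 1, and the function name are assumptions made for illustration, not measurements or part of the Iceberg Model.

```python
def digital_trust(explicit: float, implicit: float) -> float:
    """Conceptual product of two scores, each assumed to lie in [0, 1]."""
    for name, value in (("explicit", explicit), ("implicit", implicit)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in [0, 1], got {value}")
    return explicit * implicit

# Well engineered, but offers no visible cues
secure_but_silent = digital_trust(explicit=0.0, implicit=0.9)
# Many badges and seals, but no underlying reliability
badges_without_substance = digital_trust(explicit=0.9, implicit=0.0)
# Moderate strength on both planes
balanced = digital_trust(explicit=0.8, implicit=0.8)
```

Under this reading, both one-sided systems score zero while the balanced one does not, which is the property the product form is meant to convey; an additive form would not show it.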

The Technical Trust Infrastructure component of the Iceberg Model, together with the Brand and Reciprocity cues, can be viewed as the embodiment of this dual approach. It provides the technological trust infrastructure (implicit) and often also interfaces with user-facing elements (explicit), such as interface cues or processes that make those assurances evident to the user. For instance, consider an online banking website. The Technical Trust Infrastructure elements would include back-end security systems, encryption, and fraud detection (implicit trust builders), as well as front-end signals such as displaying the padlock icon and “https” (an explicit encryption cue), showing trust logos (FDIC insured, etc.), or requiring a one-time passcode (OTP) at login. When done right, the user both feels the site is safe (everything behaves normally and securely) and sees indications that it is trustworthy. In this way, the Technical Trust Infrastructure aligns with the idea that digital trust is the product of explicit and implicit trust factors working together.

It’s worth noting that in cybersecurity architecture, there has been a shift “from implicit trust to explicit trust” in recent years, epitomized by the Zero Trust security model (fedresources.com). Zero Trust means the system assumes no implicit trust, even for internal network actors; everything must be explicitly authenticated and verified. This approach was born of the realization that implicit trust (such as assuming anyone within a corporate network is trustworthy) can be exploited. While Zero Trust is about security design, its rise illustrates a broader trend: relying solely on implicit trust is no longer sufficient. Systems must continually earn trust through explicit verification. However, the end-user’s perspective still involves implicit trust: users don’t see all those checks happening; they simply notice that breaches are rare, which again builds their quiet, implicit confidence. Thus, even in a Zero Trust architecture, the outcome for a user is a combination of explicit interaction (e.g., frequent logins, multifactor authentication prompts) and implicit trust (the assumption that the system is secure by default once those steps are done).
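The “verify every request” idea can be sketched in a few lines. The example below is a simplified illustration, not a Zero Trust product or standard: it assumes a shared-secret HMAC token with an expiry time, and the key, token layout, and function names are hypothetical. The point is that the check runs on each call and never consults where the request came from.

```python
import hashlib
import hmac

SECRET_KEY = b"demo-only-secret"  # hypothetical; real systems use managed, rotated keys

def issue_token(user_id: str, expires_at: int, key: bytes = SECRET_KEY) -> str:
    """Bind a user id and expiry time together with an HMAC-SHA256 tag."""
    payload = f"{user_id}|{expires_at}"
    tag = hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()
    return f"{payload}|{tag}"

def verify_request(token: str, now: int, key: bytes = SECRET_KEY) -> bool:
    """Re-check signature and expiry on every request; no network-based trust."""
    try:
        user_id, expires_str, tag = token.split("|")
        expires_at = int(expires_str)
    except ValueError:
        return False  # malformed token
    payload = f"{user_id}|{expires_at}"
    expected = hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(tag.encode(), expected.encode())
    return signature_ok and now < expires_at

token = issue_token("alice", expires_at=1000)
allowed = verify_request(token, now=999)      # valid signature, not yet expired
expired = verify_request(token, now=1000)     # same token, rejected after expiry
tampered = verify_request(token.replace("alice", "mallory"), now=999)
```

Short token lifetimes force frequent re-verification, which is the explicit interaction users experience as repeated logins or authentication prompts.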

In summary, the hypothesis that digital trust equals explicit trust multiplied by implicit trust highlights a crucial principle: trust-by-design must operate on both the visible and invisible planes. It’s not a literal equation for computing trust, but a reminder that product designers, security engineers, and digital strategists need to address human trust at both levels: by providing transparent, deliberate trust signals and by ensuring robust, dependable system behavior.

Note: The multiplicative formula is a conceptual illustration, not an empirically validated equation. The relationship between explicit and implicit trust is more nuanced than simple multiplication. The Contextual Moderation Layer (Section 4) provides a more rigorous framework for understanding how contextual factors modulate trust formation.

As digital ecosystems evolve, a growing chorus of experts argues that true digital trust will increasingly hinge on decentralized user control. In traditional, centralized models, users had to place significant trust in large institutions or platforms to serve as custodians of data, identity, and security. This aligns with what we discussed as institution-based trust (trusting the platform’s structures). However, recurring scandals have eroded confidence and exposed a key limitation: when a single entity holds all the keys (to identity, data, etc.), a failure or abuse by that entity can shatter user trust across the board. Empowering users with more direct control is emerging as a way to mitigate this risk and distribute trust.

One area where this philosophy is taking shape is digital identity management. The conventional approach to digital identity (think of how Facebook or Google act as identity providers, or how your data is stored in countless company databases) is highly centralized. Now, new approaches such as decentralized and self-sovereign identity (SSI) are shifting that paradigm.

In an SSI system, you might have a digital identity wallet that stores credentials issued to you (e.g., a digital driver’s license or a verified diploma). These credentials are cryptographically signed by issuers but are ultimately controlled by the user. By removing centralized intermediaries, users no longer need to implicitly trust a single middleman for all identity assertions; trust is instead placed in open protocols and cryptographic mathematics.

From a user’s perspective, decentralized identity and similar approaches can significantly enhance trust. First, privacy is improved because the user no longer hands over a full profile to every service: selective disclosure lets them reveal only the attributes a service needs, and zero-knowledge proofs let them prove a statement (for example, being over 18) without revealing the underlying data at all. Second, there’s a sense of empowerment: the user owns their data and keys. This aligns with rising consumer expectations and data protection regulations (such as GDPR) that promote greater user agency.
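The selective-disclosure idea can be illustrated with salted hash commitments. The following is a minimal sketch under stated assumptions, not a standards-compliant SSI implementation: the function names and `ISSUER_KEY` are hypothetical, and an HMAC stands in for the issuer's digital signature (a real issuer would sign with a private key so that verifiers need only the public key).

```python
import hashlib
import hmac
import json
import secrets

ISSUER_KEY = b"demo-issuer-key"  # hypothetical; stands in for an asymmetric signing key


def _digest(salt, name, value):
    """Salted hash commitment to a single claim."""
    return hashlib.sha256(json.dumps([salt, name, value]).encode()).hexdigest()


def _sign(digests):
    """Assumption: HMAC replaces a real issuer signature in this sketch."""
    return hmac.new(ISSUER_KEY, json.dumps(digests).encode(), hashlib.sha256).hexdigest()


def issue(claims):
    """Issuer: commit to each claim separately, then sign the list of commitments."""
    salts = {name: secrets.token_hex(16) for name in claims}
    digests = sorted(_digest(salts[n], n, v) for n, v in claims.items())
    credential = {"digests": digests, "tag": _sign(digests)}
    wallet = {n: (salts[n], v) for n, v in claims.items()}  # kept by the user only
    return credential, wallet


def present(credential, wallet, reveal):
    """User: disclose the salt and value of the chosen claims, nothing else."""
    return {"credential": credential, "disclosed": {n: wallet[n] for n in reveal}}


def verify_presentation(presentation):
    """Verifier: check the issuer tag, then check each disclosed claim against it."""
    cred = presentation["credential"]
    if not hmac.compare_digest(_sign(cred["digests"]), cred["tag"]):
        return None
    verified = {}
    for name, (salt, value) in presentation["disclosed"].items():
        if _digest(salt, name, value) not in cred["digests"]:
            return None  # disclosed value does not match any signed commitment
        verified[name] = value
    return verified
```

A verifier that receives only the `over_18` claim can confirm the issuer vouched for it, while the undisclosed claims remain hidden behind their salted hashes.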

In the modern sense, one element of a Technical Trust Infrastructure might be a platform’s integration with decentralized identity standards. By adopting such standards, the platform signals structural assurance in a new form: no single party (including the platform itself) can unilaterally compromise user identity, because control over identity is distributed. It also contributes to situational normality over time, as these practices become standard and users become familiar with controlling their data.

Beyond identity, the theme of decentralization appears in discussions of trustworthy AI and data governance. For example, using decentralized architectures or federated learning can assure users that their data isn’t pooled on a central server for AI training, but rather stays on their device (enhancing implicit trust in how the AI operates). Similarly, blockchain technology is often touted as a “trustless” system. It aims to eliminate the need for blindly trusting a central intermediary. Trust is instead placed in a distributed network with transparent rules (the protocol code) and consensus mechanisms. “Trustless” in this context does not mean that no trust is involved; it means that the network and the code themselves serve as the Technical Trust Infrastructure. If the system is well designed, users implicitly trust it because of its transparency and immutability, and explicit trust is further enhanced by the ability to verify transactions publicly.
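To make the federated learning point concrete, here is a minimal sketch of federated averaging on a toy one-dimensional linear model. All names are illustrative assumptions. Each simulated device takes a gradient step on its own data, and the server only ever sees model parameters. The sketch omits secure aggregation and differential privacy, which matter in practice because model updates can still leak information about the underlying data.

```python
def local_update(weights, data, lr=0.1):
    """On-device step: one gradient step of y = w*x + b on private local data."""
    w, b = weights
    n = len(data)
    grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
    grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
    return (w - lr * grad_w, b - lr * grad_b)


def federated_average(updates, sizes):
    """Server step: size-weighted mean of client parameters (no raw data involved)."""
    total = sum(sizes)
    w = sum(u[0] * n for u, n in zip(updates, sizes)) / total
    b = sum(u[1] * n for u, n in zip(updates, sizes)) / total
    return (w, b)


def train(clients, rounds=300):
    """Run several rounds of broadcast, local update, and aggregation."""
    weights = (0.0, 0.0)
    sizes = [len(data) for data in clients]
    for _ in range(rounds):
        updates = [local_update(weights, data) for data in clients]
        weights = federated_average(updates, sizes)
    return weights
```

The design choice to weight by local dataset size means devices with more examples have proportionally more influence on the shared model.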

It should be noted that decentralized approaches introduce their own complexities. Managing private keys introduces a new level of personal responsibility, and not every user wants, or is able, to do so securely. A balance is needed: usability (which contributes to situational normality) must be designed hand in hand with decentralization. This is again where the Technical Trust Infrastructure plays a role: innovative solutions like social key recovery (where a user’s friends can help restore access to a wallet) or hardware security modules in phones (to safely store keys) are being developed to make decentralized control viable and user-friendly. These are technological adaptations to social needs, which the Technical Trust Infrastructure idea captures well.
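One common building block for social key recovery is threshold secret sharing. The sketch below uses Shamir's scheme as an illustration; it is an assumption that any particular wallet works this way, and the names and the 127-bit demo field are chosen for brevity. Real keys would need a larger field or chunking.

```python
import secrets

PRIME = 2**127 - 1  # Mersenne prime used as the finite field; demo-sized only


def split_secret(secret, n_shares, threshold):
    """Split `secret` into n_shares points; any `threshold` of them recover it."""
    if not 0 <= secret < PRIME:
        raise ValueError("secret must fit in the field")
    if not 1 <= threshold <= n_shares:
        raise ValueError("threshold must be between 1 and n_shares")
    # Random polynomial of degree threshold-1 whose constant term is the secret.
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(threshold - 1)]
    shares = []
    for x in range(1, n_shares + 1):
        y = 0
        for c in reversed(coeffs):  # Horner evaluation modulo PRIME
            y = (y * x + c) % PRIME
        shares.append((x, y))
    return shares


def recover_secret(shares):
    """Lagrange interpolation at x = 0 recovers the constant term."""
    secret = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = (num * -xj) % PRIME
                den = (den * (xi - xj)) % PRIME
        secret = (secret + yi * num * pow(den, -1, PRIME)) % PRIME
    return secret
```

For example, a wallet key could be split into five shares held by five friends with a threshold of three, so that losing the device does not mean losing access and no single friend can reconstruct the key alone.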

In summary, the push for decentralized user control is a response to the erosion of trust in heavily centralized systems. By distributing trust and giving individuals more control over their identity and data, the structural assurance of digital services can increase, paradoxically by removing reliance on any single structure and instead trusting open, transparent frameworks. The implication for digital trust is profound: future trust signals might be less about “trust our company” and more about “trust this open protocol we’ve adopted” and “you are in charge of your information.”

If the last decade was about platforms asking users to trust them (often implicitly), the coming years may be about platforms empowering users so that less blind trust is needed. This evolution supports a more sustainable, user-centric approach to digital trust, where control and confidence grow together.

THE 20 TECHNICAL TRUST INFRASTRUCTURE CUES (TI01-TI20)

- TI01: Model Cards & Training Documentation
- TI02: Hallucination Detection & Mitigation
- TI03: UX Familiarity & Interface Conventions
- TI04: Adaptive Communication & Responsiveness
- TI05: AI System Self-Disclosure
- TI06: Trust Maturity Indicators
- TI07: User Control & Agency
- TI08: Privacy Management & Consent Mechanisms
- TI09: Identity & Access Management
- TI10: Trustless Systems & Smart Contracts
- TI11: Privacy-Enhancing Technologies
- TI12: Adaptive Cybersecurity & Fraud Detection
- TI13: Auditable Algorithms & Open-Source Frameworks
- TI14: Federated Learning & Decentralized Models
- TI15: Trust Score Systems & Ratings
- TI16: Data Portability & Interoperability
- TI17: Trust Influencers (Change Management)
- TI18: Generative AI Disclosures
- TI19: Algorithmic Recourse & Appeal
- TI20: Data Minimization & Privacy-Preserving Analytics

TI07: User Control & Agency

User control is a foundational requirement of trustworthy AI, recognized across regulatory frameworks from the EU AI Act to the NIST AI Risk Management Framework. Shneiderman (2020) argues that human agency in AI interactions is not merely a design preference but a prerequisite for accountability. When users can meaningfully intervene in, override, or opt out of automated decisions, they retain a sense of authorship over outcomes. Without such affordances, systems risk producing learned helplessness and eroding the psychological contract between user and provider.

Mechanisms that provide users with meaningful control to inspect, override, pause, or exit automated processes.

Engender: Providing granular controls (preference dashboards, override toggles, opt-out flows) signals respect for user autonomy and reinforces the perception that the system serves the user rather than constraining them.

Erode: Systems that lock users into automated pipelines with no override path generate frustration and distrust, particularly when outcomes are consequential. Removal of previously available controls is perceived as a breach of implicit agreement.
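The inspect, override, pause, and exit affordances described for TI07 can be sketched as a thin gate in front of an automated decision. This is a hypothetical design for illustration; the class and field names are assumptions, and a real system would persist these controls and route paused cases to a human reviewer.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class UserControls:
    opted_out: bool = False   # user has exited automation entirely
    paused: bool = False      # user has temporarily paused automation
    overrides: dict = field(default_factory=dict)  # decision_id -> user's choice


@dataclass
class Decision:
    outcome: Optional[str]
    source: str  # "automated", "user_override", or "manual_review"


def decide(decision_id: str, features: dict,
           model: Callable[[dict], str], controls: UserControls) -> Decision:
    """Apply user controls before falling back to the automated model."""
    if decision_id in controls.overrides:
        # An explicit user choice always wins over the model.
        return Decision(controls.overrides[decision_id], "user_override")
    if controls.opted_out or controls.paused:
        # No automated outcome is produced; the case goes to a human path.
        return Decision(None, "manual_review")
    return Decision(model(features), "automated")
```

Recording the `source` alongside each outcome keeps the user's interventions inspectable after the fact.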

TI09: Identity & Access Management

Identity and access management (IAM) forms the perimeter through which trust is technically enforced. As Sandhu et al. (1996) established with role-based access control, the principle of least privilege ensures that actors interact only with the resources their role demands. In AI systems, IAM extends beyond human users to encompass service accounts, model endpoints, and automated agents. The integrity of IAM directly determines whether trust assumptions encoded in policy translate into runtime reality.

Robust authentication, authorization, and credential management practices governing access to AI system components, data pipelines, and decision outputs.

Engender: Multi-factor authentication, fine-grained role definitions, and auditable access logs demonstrate that system operators take boundary enforcement seriously, strengthening institutional trust.

Erode: Weak credential policies, overly broad permissions, or unmonitored service accounts create attack surfaces that, once exploited, destroy trust catastrophically and publicly.
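A minimal sketch of least-privilege, role-based access checks with an audit trail might look as follows. The roles, resources, and function names are hypothetical examples, and the in-memory log stands in for an append-only audit store.

```python
from datetime import datetime, timezone

# Hypothetical role definitions: each role lists only the (resource, action)
# pairs it needs. Service accounts are treated like any other principal.
ROLE_PERMISSIONS = {
    "data_scientist": {("training_data", "read"), ("model", "read")},
    "ml_engineer": {("model", "read"), ("model", "deploy")},
    "inference_service": {("model", "invoke")},
}

audit_log = []  # stand-in for an append-only audit store


def is_allowed(principal, roles, resource, action):
    """Deny by default; allow only if some role grants the exact permission."""
    allowed = any(
        (resource, action) in ROLE_PERMISSIONS.get(role, set()) for role in roles
    )
    # Every decision is logged, including denials.
    audit_log.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "principal": principal,
        "resource": resource,
        "action": action,
        "allowed": allowed,
    })
    return allowed
```

Unknown roles grant nothing, so a misconfigured or unrecognized account fails closed rather than open.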

TI08: Privacy Management & Consent Mechanisms

Privacy management in AI systems must go beyond regulatory checkbox compliance toward what Nissenbaum (2010) calls “contextual integrity,” where information flows respect the norms of the context in which data was originally shared. Consent mechanisms are the user-facing expression of this principle. The GDPR requirement for freely given, specific, informed, and unambiguous consent sets the legal floor, but genuine trust requires consent architectures that are comprehensible, revocable, and granular enough to match the complexity of modern data processing.

Granular and accessible mechanisms through which users grant, modify, and withdraw consent for data collection and processing by AI systems.

Engender: Layered consent interfaces that let users understand what they are agreeing to, combined with simple revocation paths, build confidence that the organization respects data subjects as partners rather than resources.

Erode: Dark patterns in consent flows, buried opt-out mechanisms, or consent that is technically revocable but practically irreversible signal that privacy commitments are performative, triggering regulatory scrutiny and user backlash.
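Granular, revocable consent can be sketched as a per-purpose ledger that denies by default and keeps a history of every change. The class below is a hypothetical illustration, not a compliance tool; purpose names are assumptions.

```python
from datetime import datetime, timezone


class ConsentLedger:
    """Per-purpose consent with an append-only change history (illustrative)."""

    def __init__(self):
        self._state = {}   # purpose -> bool, the current consent status
        self.history = []  # every grant and withdrawal, in order

    def _record(self, purpose, granted):
        self._state[purpose] = granted
        self.history.append({
            "purpose": purpose,
            "granted": granted,
            "at": datetime.now(timezone.utc).isoformat(),
        })

    def grant(self, purpose):
        self._record(purpose, True)

    def withdraw(self, purpose):
        # Withdrawal is as simple as granting: one call, effective immediately.
        self._record(purpose, False)

    def is_permitted(self, purpose):
        # No record means no consent: processing is denied by default.
        return self._state.get(purpose, False)
```

Checking `is_permitted("model_training")` before each processing step, rather than once at sign-up, is what makes a withdrawal take practical effect.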

TI02: Hallucination Detection & Mitigation

Hallucination, the generation of plausible but factually incorrect outputs, represents one of the most distinctive trust threats posed by large language models and generative AI systems. Ji et al. (2023) provide a comprehensive taxonomy distinguishing intrinsic hallucinations (contradicting source material) from extrinsic ones (unverifiable claims). The challenge is compounded by the fluency of hallucinated outputs: users often cannot distinguish confident fabrication from grounded reasoning without external verification infrastructure.

Detection, flagging, and mitigation strategies for hallucinated or confabulated outputs across generative AI components.

Engender: Retrieval-augmented generation, confidence calibration, citation linking, and explicit uncertainty markers demonstrate that the system actively guards against ungrounded claims, reinforcing user confidence in output reliability.

Erode: Undetected hallucinations in high-stakes contexts (medical, legal, financial) cause direct harm and, once discovered, permanently damage credibility. A single well-publicized hallucination incident can define public perception of an entire product category.
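As a rough illustration of flagging ungrounded claims, the sketch below scores each sentence of an answer by its lexical overlap with retrieved source passages. This is a deliberately crude stand-in chosen for brevity: word overlap is an assumption of this example, not a method from the literature cited above, and practical systems typically rely on stronger signals such as entailment models or calibrated confidence.

```python
import re

STOPWORDS = {"the", "a", "an", "is", "was", "were", "are", "in", "on",
             "of", "and", "or", "it", "by", "to", "be"}


def _content_words(text):
    """Lowercased alphanumeric tokens with common function words removed."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def support_score(sentence, sources):
    """Best fraction of the sentence's content words found in any one source."""
    words = _content_words(sentence)
    if not words:
        return 1.0  # nothing to verify
    return max(
        (len(words & _content_words(src)) / len(words) for src in sources),
        default=0.0,
    )


def flag_unsupported(answer, sources, threshold=0.6):
    """Return the sentences whose support score falls below the threshold."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]
    return [s for s in sentences if support_score(s, sources) < threshold]
```

Flagged sentences could then be rendered with an explicit uncertainty marker or withheld pending verification.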

TI13: Auditable Algorithms & Open-Source Frameworks

Auditability is the technical precondition for accountability. Raji et al. (2020) demonstrate that algorithmic audits, whether internal or external, require access to model architecture, training procedures, and evaluation criteria. Open-source frameworks lower the barrier to independent scrutiny by enabling third-party reproduction and validation. The tension between proprietary protection and public accountability is a central challenge in AI governance, and the degree to which organizations resolve it in favor of transparency directly shapes stakeholder trust.

Algorithmic decision systems designed to permit independent audit, supported by open-source tooling, reproducible evaluation pipelines, or structured access for external reviewers.

Engender: Publishing model weights, evaluation benchmarks, or structured audit access programs signals confidence in the system and invites the kind of external validation that regulators and civil society organizations increasingly demand.

Erode: Opacity in algorithmic decision-making, particularly when combined with consequential outcomes, invites suspicion. Refusing third-party audit requests or obstructing reproducibility suggests that the organization has something to hide.

TI10: Trustless Systems & Smart Contracts

The concept of “trustless” systems, architectures that enforce commitments through cryptographic proof rather than institutional reputation, originates in distributed ledger technology but has broader implications for AI governance. Werbach (2018) distinguishes between trust in institutions, trust in intermediaries, and trust in code, arguing that smart contracts shift enforcement from discretionary judgment to deterministic execution. In AI contexts, trustless mechanisms can guarantee that data-use agreements, model-access policies, or royalty distributions are honored without requiring faith in any single party.

Cryptographic enforcement mechanisms, smart contracts, or verifiable computation that reduce reliance on institutional trust for compliance assurance.

Engender: Immutable audit trails, automated policy enforcement, and verifiable computation proofs remove the need for stakeholders to “take your word for it,” converting trust from a social judgment into a mathematical guarantee.

Erode: Promising trustless guarantees while retaining backdoor override capabilities, or deploying smart contracts with unaudited vulnerabilities, creates a false sense of security that collapses spectacularly upon discovery.
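The immutable audit trail idea can be sketched with a hash chain, in which each entry commits to the one before it. This is a simplified illustration with hypothetical names. A hash chain alone provides tamper evidence rather than consensus: whoever holds the log could rewrite all of it, so the latest hash would need to be published or anchored somewhere independent.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _entry_hash(prev_hash, record):
    """Hash of the record together with the previous entry's hash."""
    payload = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(payload.encode()).hexdigest()


def append_entry(chain, record):
    """Add a record that commits to everything logged before it."""
    prev = chain[-1]["hash"] if chain else GENESIS
    chain.append({"record": record, "prev": prev, "hash": _entry_hash(prev, record)})


def verify_chain(chain):
    """Recompute every link; any edited or reordered entry breaks the chain."""
    prev = GENESIS
    for entry in chain:
        if entry["prev"] != prev or entry["hash"] != _entry_hash(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True
```

Because each hash depends on all earlier entries, a stakeholder who holds only the most recent hash can detect later changes to any part of the history.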

TI18: Generative AI Disclosures

As generative AI outputs become indistinguishable from human-produced content, disclosure obligations move from ethical nicety to regulatory mandate. The EU AI Act explicitly requires that AI-generated content be labeled as such in specified contexts. Hancock et al. (2020) show that awareness of AI involvement materially changes how recipients evaluate message credibility. Disclosure is not merely about labeling; it encompasses provenance tracking, watermarking, and metadata that allows downstream consumers to assess the origin and reliability of content they encounter.

Clear, consistent, and tamper-resistant disclosure of synthetic origin and the conditions under which AI-generated outputs were produced.

Engender: Proactive disclosure through visible labels, embedded metadata, and content provenance standards (such as C2PA) demonstrates respect for the recipient’s right to know and positions the organization as a responsible actor in the generative AI ecosystem.

Erode: Deploying generative outputs without disclosure, or making disclosures easily removable, enables misuse and exposes the organization to regulatory penalties, reputational damage, and complicity in misinformation.
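A tamper-resistant disclosure can be approximated by binding a provenance claim to a hash of the content and authenticating the claim. The sketch below is not C2PA, which defines its own manifest format and certificate-based signatures. The field names and `SIGNING_KEY` are hypothetical, and an HMAC stands in for a real digital signature.

```python
import hashlib
import hmac
import json

SIGNING_KEY = b"demo-signing-key"  # hypothetical; stands in for a private key


def _tag(claim):
    """Assumption: HMAC replaces a certificate-based signature in this sketch."""
    payload = json.dumps(claim, sort_keys=True).encode()
    return hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()


def make_manifest(content, generator):
    """Create a disclosure record bound to the exact bytes of the content."""
    claim = {
        "ai_generated": True,
        "generator": generator,
        "content_sha256": hashlib.sha256(content).hexdigest(),
    }
    return {"claim": claim, "tag": _tag(claim)}


def check_manifest(content, manifest):
    """Valid only if the claim is untampered and matches this content."""
    claim = manifest["claim"]
    tag_ok = hmac.compare_digest(_tag(claim), manifest["tag"])
    content_ok = claim["content_sha256"] == hashlib.sha256(content).hexdigest()
    return tag_ok and content_ok
```

Flipping the `ai_generated` flag or reusing the manifest on different content both cause the check to fail, although nothing in this sketch prevents someone from stripping the manifest entirely.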

TI19: Algorithmic Recourse & Appeal

Algorithmic recourse, the ability of individuals to obtain a different outcome by changing actionable input features, is formalized by Ustun et al. (2019) and Karimi et al. (2021) as both a fairness desideratum and a practical right. Appeal mechanisms extend recourse from the technical layer (what inputs to change) to the institutional layer (who to petition and under what process). The EU AI Act mandates human oversight and contestability for high-risk systems, making recourse not optional but legally required in many jurisdictions.