Chapter 1: Learn how pervasive consumer concerns about data privacy, unethical ad-driven business models, and the imbalance of power in digital interactions highlight the need for trust-building through transparency and regulation.

Chapter 8: Learn how AI’s rapid advancement and widespread adoption present both opportunities and challenges, requiring trust and ethical implementation for responsible deployment. Key concerns include privacy, accountability, transparency, bias, and regulatory adaptation, emphasizing the need for robust governance frameworks, explainable AI, and stakeholder trust to ensure AI’s positive societal impact.

The vast quantities of data that digitally active individuals generate on a daily basis are truly remarkable. This data has become one of the most valuable resources of the digital age, driving the growth of both modern and traditional business models. In fact, the explosion of start-up communities worldwide is largely fueled by the promise of harnessing data to create innovative products and services. However, while data’s power is undeniable, it also brings with it significant challenges—especially regarding how that data is collected, used, and shared.

Despite the promise of data, consumers have become increasingly aware that when they are not paying directly for a product or service, they themselves, or more specifically their personal information, are often the actual product. This growing awareness has sparked widespread concerns about privacy, surveillance, and data misuse. These concerns are not merely theoretical; they have practical implications for businesses that rely on user data. As Morey et al. (2015) point out, many data-gathering practices, especially those that are opaque or intrusive, are short-sighted and fail to build the trust that is crucial for long-term customer relationships. The challenge of striking a balance between leveraging data for business growth and respecting consumer privacy has never been more pressing.

The Consumer Data Dilemma

Today, consumers are more informed and skeptical than ever before. Data breaches, misuse of personal information, and opaque data collection practices have heightened anxiety about the safety of digital interactions. Consequently, businesses must consider consumer concerns about data privacy as a central component of their marketing and digital strategies. This goes beyond mere compliance with regulations like the General Data Protection Regulation (GDPR) in Europe or the California Consumer Privacy Act (CCPA) in the U.S.; it requires a fundamental shift toward ethical data practices and transparency.

For many businesses, particularly those in the digital space, the notion of data as a valuable asset must be paired with a renewed focus on consumer trust. The need for businesses to become trusted partners has never been more urgent. With new digital services and products emerging daily, businesses that fail to foster trust may struggle to survive in an increasingly competitive and data-conscious market. The ability to engage in open, honest, and mutually beneficial dialogue with customers will be a defining characteristic of successful companies in the future.

Trust as a Key Business Differentiator

At the heart of this challenge lies trust. Trust is no longer a vague, abstract concept; it is a measurable and actionable asset that shapes consumer behavior. A trusted brand not only attracts customers but also retains them. In the age of digital disruption, where new players continuously enter the market with innovative ideas, trust is the differentiating factor that will set the winners apart from the rest. In this context, trust isn’t just about maintaining a good reputation—it’s about creating an environment in which customers feel safe, valued, and in control of their data.

Moreover, as consumers’ expectations evolve, companies must move beyond transactional relationships and focus on cultivating long-term partnerships. This means engaging with customers in ways that are transparent, empathetic, and aligned with their values. Brands that prioritize customer-centric practices—such as data transparency, security, and control—are more likely to build the trust necessary for sustainable success.

A significant part of this transformation lies in the ability to communicate effectively about data collection and usage. Rather than adopting shrouded, often exploitative approaches, companies need to practice open data dialogues. This involves explaining how customer data will be used, offering users clear choices, and ensuring they understand the tangible benefits that arise from sharing their information. In return for trust, businesses should aim to offer customers value, whether through improved products, personalized experiences, or guarantees about privacy protection. This reciprocal relationship between businesses and consumers will be key to establishing enduring partnerships that go beyond mere transactions.

A Trust-Centric Strategy for the Future

In an era where digital products and services are often just a click away, businesses must prioritize trust-building in all aspects of their digital strategy. From the first point of contact to post-purchase support, every interaction between a company and its customers should reflect a commitment to transparency and ethical data practices. Companies that make trust a central element of their brand promise—through clear privacy policies, ethical marketing practices, and data governance—will not only meet growing consumer demand for privacy and security but also position themselves as leaders in a new era of business ethics.

The winners of tomorrow will be those businesses that not only innovate but do so in a way that builds lasting, transparent relationships with their customers. As consumers continue to demand greater accountability from the companies they interact with, the ability to earn and maintain trust will determine who thrives in the digital economy—and who risks being left behind.

This article will explore how companies can make the concept of “digital trust” more tangible by presenting a practical, project-based approach to building and nurturing trust in the digital landscape.

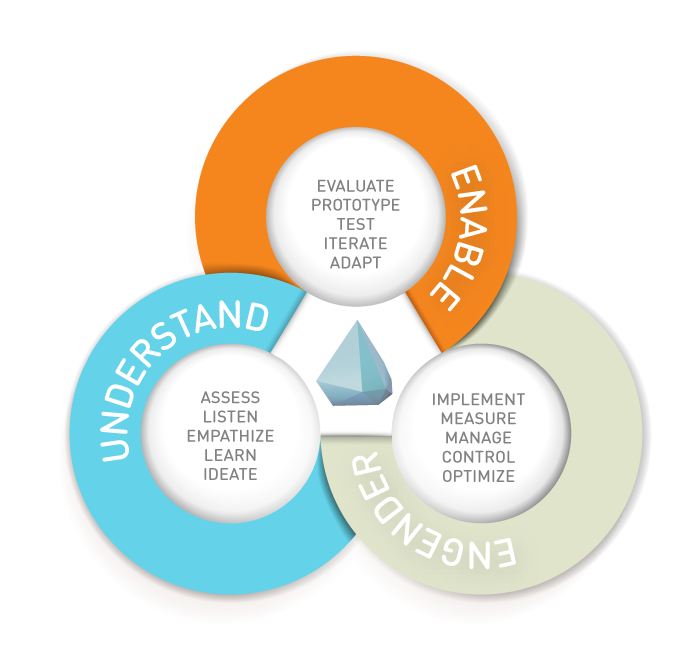

Like all strategic concepts and frameworks, the approach described below cannot be regarded as precise, step-by-step operating instructions. There is no standard process for identifying and solving trust issues. However, to leverage digital trust as a currency and as a key differentiator for future success, companies can build and practice three major business capabilities.

As a management consultant, I have conceptualised and implemented numerous digital strategy projects that significantly impacted digital trust. Regardless of the specific focus—whether developing a Big Data strategy or rolling out a large-scale, global social media monitoring solution—designing a digital strategy should always follow three key steps. These steps act as sub-capabilities, all contributing to the overarching goal of engendering trust. The suggested project approach to engendering trust helps make this abstract topic more tangible and actionable.

First, businesses need to gain a good understanding of the issues and potential outcomes at hand. Because maturity levels in handling customer data differ dramatically from company to company, organizations would be wise to listen and learn first.

Second, companies must build the necessary foundations to enable trust cues successfully. This involves identifying and effectively evaluating the options in the form of use cases and rapidly testing these cases in prototypes.

Third, the proposed project approach suggests implementing a governance and operating structure to manage and optimise initiatives that engender trust. In the last section of this article, the three key capabilities will be mapped to individual activities that together constitute an exemplary project.

1. Understanding trust issues and opportunities

Engendering the belief in a company’s competence, benevolence and integrity is a poorly bounded problem. Such “wicked” problems are essentially unique, and their solutions are not true or false, but better or worse. They therefore require a dynamic approach to problem solving. Furthermore, they require an approach that uncovers problems that are often hidden. Last but not least, they require an empathy-based, human-centered process that focuses on actual user needs. The Design Thinking methodology developed at the Stanford Design School meets all of these requirements.

The capability of “understanding” includes assessing the current situation, listening to your customers, empathizing with them to understand their problems, learning from all these insights and, ultimately, ideating to find initiatives that improve the company’s situation. As an outcome of this step, a team of employees with mixed skills, including business, design and technology skills, will develop a set of ideas that can be evaluated in the next step and eventually implemented in the last phase of the proposed project approach. The iceberg.digital® model can help to facilitate the ideation process and to identify promising initiatives.

“By outsourcing our thinking to Big Data, our ability to make sense of the world by careful observation begins to wither, just as you miss the feel and texture of a new city by navigating it only with the help of a GPS” (Madsbjerg, Rasmussen, 2014).

This primary capability is essential because observing what real customers do and how they interact with your brand gives you clues about what they think and feel. Empathy is the centerpiece of a human-centered design process. Businesses must be able to identify their customers’ concerns in order to understand their situation correctly. One strategy is to collect data from traditional and digital sources inside and outside the company and use this data lake as a source for ongoing discovery and analysis. Although advanced analytics can support this step, businesses should rely on “thick data” rather than on “big data”. Whereas Big Data can give insights into customer behavior on a massive scale, Thick Data aims to reveal underlying motivations, intentions and emotions.

Dealing with Thick Data involves a complex range of primary and secondary research approaches that require empathy and mindfulness on the part of the observer. Martin Lindstrom, a popular brand management consultant who recently published an interesting collection of use cases for this kind of analysis, introduced the term “small data” for the same phenomenon (2016a). His case studies nicely illustrate the importance of Thick Data. Here is an illustrative example: after Lego nearly collapsed in the early 2000s, Jørgen Vig Knudstorp, CEO of the Danish company, decided to engage in a major qualitative research project. Instead of analyzing vast amounts of quantitative data, researchers observed children at play and their environment. A very small detail revealed an important fact: researchers were able to conclude that children were still passionate about the “play experience” and the process of playing (see Lindstrom, 2016b). Rather than the instant gratification of preassembled toys such as action figures, children evidently still valued the experience of imagining and creating. This insight led the company back to its traditional building blocks and to renewed revenue growth.

Tools for Identifying Challenges and Opportunities

We recommend beginning with the Value Proposition Canvas, a practical framework for converting initial concepts into concrete use cases (Osterwalder, Pigneur, Bernarda, & Smith, 2014). This tool starts by mapping out end-user expectations, identifying their challenges, and pinpointing what satisfies them. By highlighting how a particular offering alleviates user pain points and delivers added benefits, the Value Proposition Canvas enables organizations to identify the most promising use cases swiftly. Consequently, this approach guides strategic decision-making and product development efforts to maximise user value.

Organizations can systematically transfer user needs, challenges, and desired outcomes into the Trust Strategy Canvas by leveraging the insights derived from the Value Proposition Canvas. This integration ensures that trust-building efforts align with genuine user concerns and expectations, ultimately fostering stronger user engagement and loyalty (Morgan & Hunt, 1994).

The Iceberg Trust Canvas and the Trust Map by iceberg.digital are licensed under CC BY-SA 4.0

The Trust Strategy Canvas is a structured framework that assists organizations in identifying, analyzing, and executing strategies to build and maintain user trust (McKnight, Choudhury, & Kacmar, 2002). It serves as a platform for brainstorming trust initiatives, managing potential risks, and ensuring alignment between organizational goals and user expectations.

To utilize this canvas, teams collaboratively populate each section, beginning with user insights and moving toward actionable strategies and measurable metrics. In doing so, they uncover gaps, prioritize initiatives, and confirm that trust-building measures are both feasible and impactful (Gefen, Karahanna, & Straub, 2003). This approach is particularly valuable in digital settings, where user engagement and loyalty are heavily influenced by trust. By emphasizing both visible signals—such as interface cues—and deeper cognitive factors, the Trust Strategy Canvas fosters innovation driven by trust-centric principles (McKnight et al., 2002).

The Trust Map summarizes the key elements discussed in previous chapters. It illustrates the iceberg trust framework, which categorizes trust into visible cues (e.g., reciprocity, brand signals, social protections) and hidden cognitive constructs (e.g., institutional safeguards, individual trust disposition).

This tool can be used to identify gaps in trust-building efforts, design user-centric strategies, and mitigate risks associated with digital interactions. It is particularly useful for organizations navigating trust challenges in AI, data management, and user experience design.

Organizations building relationships to secure access to customer data for potential AI applications face the challenge of maintaining stakeholder trust while advancing technological capabilities. Following the DUCAR methodology (Define-Understand-Collect-Analyze-Realize) proposed by Schäfer et al. (2020), successful implementations of such initiatives require systematic evaluation across business impact and technical feasibility dimensions.

High-impact trust-building initiatives demonstrate clear value attribution, stakeholder involvement, and robust governance frameworks (Lee et al., 2022). Following Bächler et al.’s (2020) structured approach, the success potential of such use cases can be evaluated using a modified BCG matrix that considers both implementation feasibility and business impact. The viability of implementing technology-driven initiatives, particularly in artificial intelligence, depends on two critical elements: the financial investment required and the anticipated value generated from successful deployment (Bouhouras et al., 2009).

The bubble size of an initiative could represent the “Data Readiness” (based on data availability, quality and infrastructure maturity) or “Implementation Maturity” (based on technical expertise available, infrastructure readiness, and resource availability) of each use case.
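The feasibility/impact evaluation described above can be sketched as a small classification routine. This is a minimal illustration, not Bächler et al.’s method: it assumes hypothetical 0 to 10 scores for feasibility and impact, a threshold of 5 that splits the matrix into four quadrants, and quadrant labels chosen for this example; data readiness (0 to 1) is scaled into the bubble size.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    feasibility: float     # hypothetical 0-10 score for implementation feasibility
    impact: float          # hypothetical 0-10 score for expected business impact
    data_readiness: float  # 0-1, drives the bubble size in the chart


def quadrant(uc: UseCase, threshold: float = 5.0) -> str:
    """Place a use case in one quadrant of a feasibility/impact matrix.

    The quadrant labels are illustrative assumptions, not a standard taxonomy.
    """
    high_impact = uc.impact >= threshold
    high_feasibility = uc.feasibility >= threshold
    if high_impact and high_feasibility:
        return "prioritize"
    if high_impact:
        return "build capabilities first"
    if high_feasibility:
        return "quick win, limited value"
    return "deprioritize"


def bubble_rows(cases: list[UseCase], max_bubble: float = 1000.0) -> list[tuple]:
    """Return (name, x, y, bubble_size, quadrant) rows ready for a bubble chart."""
    return [
        (uc.name, uc.feasibility, uc.impact, uc.data_readiness * max_bubble, quadrant(uc))
        for uc in cases
    ]


# Hypothetical use cases
cases = [
    UseCase("Consent dashboard", feasibility=8, impact=7, data_readiness=0.9),
    UseCase("Churn-risk model", feasibility=3, impact=8, data_readiness=0.4),
    UseCase("Newsletter redesign", feasibility=9, impact=3, data_readiness=0.8),
]
for row in bubble_rows(cases):
    print(row)
```

A routine like this only makes the ranking transparent; the scores themselves still have to come out of the cross-functional evaluation described in the text.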

For effective implementation, organizations should follow an iterative, process-oriented approach combining Design Thinking for use case identification with agile methodologies like SCRUM for realization (Sutherland, 2014; Brown & Katz, 2019). Critical success factors include continuous stakeholder feedback and system refinement through structured frameworks like the Cross-Industry Standard Process for Data Mining (CRISP-DM) defined by Chapman et al. (2000).

Assessing the current situation requires not only a mindful observation of customers and their interaction with the company but also a close look inside the organisation. Before engaging in any strategic work, the business needs to know the answer to “why?”. Only then should it conceive plans for the “what?” and the “how?”. It is critical to align all trust strategy measures with clearly articulated business objectives.

Companies can build a value tree that clearly structures potential value drivers and outlines objectives (Porter, 1985; Brandenburger & Nalebuff, 1995; Bowman & Ambrosini, 2007). The value tree approach enables organizations to systematically decompose and analyze strategic value creation by identifying interconnected value sources and strategic objectives (Amit & Zott, 2001). Such value trees visually link value drivers to a key financial metric such as Return on Invested Capital (Koller, 1994). They help to understand and communicate which strategic elements actually drive the company’s financial result.
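To make the idea concrete, the following sketch models a tiny value tree in which operational drivers roll up to return on invested capital (ROIC) via revenue, EBIT and NOPAT (net operating profit after taxes). The decomposition, the driver names and all figures are hypothetical; real value trees are usually much deeper and company-specific. Holding the cost ratio constant across scenarios is a simplifying assumption.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Drivers:
    """Leaf-level value drivers (all hypothetical)."""
    active_customers: int
    revenue_per_customer: float  # annual revenue per active customer
    cost_ratio: float            # operating costs as a share of revenue
    tax_rate: float
    invested_capital: float


# Each function is one node of the tree, computed from the nodes below it.
def revenue(d: Drivers) -> float:
    return d.active_customers * d.revenue_per_customer


def ebit(d: Drivers) -> float:
    return revenue(d) * (1 - d.cost_ratio)


def nopat(d: Drivers) -> float:
    return ebit(d) * (1 - d.tax_rate)


def roic(d: Drivers) -> float:
    return nopat(d) / d.invested_capital


base = Drivers(
    active_customers=100_000,
    revenue_per_customer=120.0,
    cost_ratio=0.8,
    tax_rate=0.25,
    invested_capital=5_000_000.0,
)
# Scenario: a trust initiative retains enough customers to grow the base by 5 %.
scenario = replace(base, active_customers=105_000)

print(f"Base ROIC:     {roic(base):.1%}")
print(f"Scenario ROIC: {roic(scenario):.1%}")
```

With these illustrative numbers, ROIC rises from 36.0 % to 37.8 %. Comparing scenarios in this way is what lets a value tree connect an individual trust initiative to the financial result.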

2. Enabling the organisation for trust creation

The capability of “enabling” includes objectively evaluating use case ideas, implementing them into prototypes and following a highly iterative process of testing and adapting these solutions.

One of the most common reasons data-driven projects fail is that the organization’s maturity level, and therefore its readiness to be guided by data, does not match the plans. It is critical to prepare the organisation and to establish a culture that is open to business insights gained from data. This clearly requires buy-in and a strong commitment from top management. One procedure that has proven effective is to engage management in a workshop session that translates predefined business objectives into guidelines. Whereas business objectives are strongly tied to existing strategic objectives and to the nature of the business, guidelines represent the organization’s interpretation of these objectives and its intent regarding how to reach them. Ultimately, solid guidelines form a code of conduct that helps everyone involved decide how to act and react.

To establish a corporate culture that embraces data as a guiding instance, the design and prototype team needs to be truly interdisciplinary. Depending on the nature of the initiatives to be started, include representatives from different business functions in your ideation and Scrum teams. Mix people from customer-facing functions, such as marketing, sales and product owners, with functions that indirectly influence customer interaction, such as IT and customer service. This mix of perspectives takes into account the fact that trust, as a complex psychosocial construct, is always influenced by a broad set of factors. The perception of trust is, for example, highly influenced by the way data is collected, the nature of the data itself and the way an organisation ultimately uses it (Nguyen et al., 2013). Furthermore, the cues that build trust as described in the iceberg model differ in their effectiveness depending on the target group. Whereas a strong brand, for example, is highly effective for “digital natives” (younger, experimental digital customers born after 1980) and “naturalized digitals” (middle-aged, highly educated users), it has less of an effect on “digital immigrants” (older, mostly passive users). The latter audience, however, gives more weight to reciprocity, that is, a transparent exchange of information for appropriate compensation (Hoffmann et al., 2014). Please visit the dedicated webpage on www.iceberg.digital for more details on how the consumer’s mind works and how trust is built.

As a result of the first capability described in this article, the understanding of trust issues and opportunities, a set of ideas has been created using design thinking techniques. These ideas need to be translated into use cases. Use cases describe the aim and resulting objectives of a solution, together with the actors involved, by listing the steps and interactions between these actors that lead toward a common goal (Matz, Germanakos, 2016). It is recommended to keep these cases as simple as possible; they must be readable and understandable by all project stakeholders, sponsors and end users.
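One way to keep use cases simple and readable is to capture them in a fixed structure of title, goal, actors and numbered steps. The sketch below is a hypothetical illustration of such a structure, not a format prescribed by Matz and Germanakos; the example use case and its actors are invented.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    actor: str
    action: str


@dataclass
class UseCase:
    title: str
    goal: str
    actors: list[str]
    steps: list[Step] = field(default_factory=list)

    def add_step(self, actor: str, action: str) -> None:
        # Every step must be performed by a declared actor, which keeps the case consistent.
        if actor not in self.actors:
            raise ValueError(f"unknown actor: {actor}")
        self.steps.append(Step(actor, action))

    def to_text(self) -> str:
        """Render the use case as plain text that non-technical stakeholders can review."""
        lines = [
            f"Use case: {self.title}",
            f"Goal: {self.goal}",
            "Actors: " + ", ".join(self.actors),
        ]
        lines += [f"{i}. {s.actor}: {s.action}" for i, s in enumerate(self.steps, start=1)]
        return "\n".join(lines)


# Hypothetical example
uc = UseCase(
    title="Transparent personalization opt-in",
    goal="Customer knowingly shares purchase history in exchange for better recommendations",
    actors=["Customer", "Web shop", "Recommendation service"],
)
uc.add_step("Web shop", "explains which data is used and what the customer gets in return")
uc.add_step("Customer", "opts in or declines")
uc.add_step("Recommendation service", "uses only the approved data and shows why an item is recommended")
print(uc.to_text())
```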

Designing behavioral interventions

Research in behavioural economics clearly indicates that humans don’t always act rationally, as traditional economics assumes. Digital transformation makes this fact even more apparent. Our brains are simply not made to cope with the speed at which technologies are disrupting markets, politics and society. Our decision-making is prone to biases. And that’s OK. These imperfections are part of an efficient brain design that ultimately allowed humans to thrive over the course of evolution.

Businesses can support the customer’s decision-making process by benevolently intervening. With knowledge of effective trust cues, such behavioural interventions can help customers make the “trust leap”.

We differentiate two types of interventions that can successfully shape the customer’s choice architecture: nudges and rational overrides.

Nudges

Nudges use positive reinforcement and indirect suggestions to influence the behavior and decision-making of groups or individuals.

Rational overrides

A rational override is a small moment of intentional friction that attempts to influence people’s behaviour or decision-making by interrupting automatic thinking and activating reflective, conscious thinking.
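In a digital consent flow, the two intervention types might look like the sketch below. It is a hypothetical illustration: the nudge is modeled as a benefit-framed message combined with a privacy-protective default the user can change, and the rational override as an explicit confirmation step required only for sensitive data. The class, purposes and wording are invented for the example.

```python
from dataclasses import dataclass


@dataclass
class ConsentRequest:
    purpose: str
    benefit: str     # what the customer gets in return (reciprocity)
    sensitive: bool  # e.g. health or location data


def build_prompt(req: ConsentRequest) -> dict:
    """Describe how the consent prompt is presented (its choice architecture)."""
    return {
        # Nudge: frame the choice around the customer's benefit ...
        "message": f"Share data for {req.purpose}? In return: {req.benefit}.",
        # ... and pre-select the privacy-protective option, which the user can change.
        "preselected_opt_in": False,
        # Rational override: add deliberate friction before sensitive data is shared.
        "requires_confirmation": req.sensitive,
    }


def consent_granted(req: ConsentRequest, opted_in: bool, confirmed: bool = False) -> bool:
    """Consent counts only if the user opted in and, for sensitive data, also confirmed."""
    if not opted_in:
        return False
    if req.sensitive and not confirmed:
        return False
    return True


# Hypothetical requests
location = ConsentRequest("the store finder", "nearby stores shown first", sensitive=True)
newsletter = ConsentRequest("the newsletter", "a 10 % discount", sensitive=False)
print(build_prompt(location))
print(consent_granted(location, opted_in=True))                  # blocked until confirmed
print(consent_granted(location, opted_in=True, confirmed=True))
```

Whether a pre-selected option is acceptable also depends on regulation: under the GDPR, for example, pre-ticked boxes do not constitute valid consent, which is one reason the sketch defaults to not sharing.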

By this stage, the process has usually produced a colorful bouquet of interesting ideas. The next major challenge is to apply an effective and, above all, objective evaluation logic to prioritize the cases for further implementation in prototypes. Far too often, ideas originating from politically important business stakeholders are preferred to other cases that would yield a better result. All use cases should be evaluated equally along dimensions such as complexity (or feasibility, or cost), expected benefit and strategic fit. In addition, the guidelines discussed previously, as well as the provisions of the governance model, need to be considered when building a shortlist of the most promising use cases.
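An equal, transparent evaluation can be as simple as a weighted score over the dimensions named above, applied only to use cases that pass a guideline check. The weights, the 1 to 5 scales and the candidate names below are hypothetical; note that the sponsor of an idea is deliberately not an input.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    complexity: int         # 1 (simple) to 5 (very complex)
    benefit: int            # 1 (low) to 5 (high)
    strategic_fit: int      # 1 (weak) to 5 (strong)
    meets_guidelines: bool  # result of checking the idea against the agreed guidelines


# Hypothetical weights; agree on them before any idea is scored.
WEIGHTS = {"benefit": 0.4, "strategic_fit": 0.35, "simplicity": 0.25}


def score(c: Candidate) -> float:
    simplicity = 6 - c.complexity  # invert complexity so that higher is always better
    return (
        WEIGHTS["benefit"] * c.benefit
        + WEIGHTS["strategic_fit"] * c.strategic_fit
        + WEIGHTS["simplicity"] * simplicity
    )


def shortlist(candidates: list[Candidate], top_n: int = 3) -> list[Candidate]:
    """Drop guideline violations, then rank the rest by weighted score."""
    eligible = [c for c in candidates if c.meets_guidelines]
    return sorted(eligible, key=score, reverse=True)[:top_n]


ideas = [
    Candidate("Privacy center redesign", complexity=2, benefit=4, strategic_fit=4, meets_guidelines=True),
    Candidate("Real-time trust dashboard", complexity=5, benefit=5, strategic_fit=3, meets_guidelines=True),
    Candidate("Covert location tracking", complexity=1, benefit=5, strategic_fit=5, meets_guidelines=False),
    Candidate("Loyalty badge", complexity=3, benefit=2, strategic_fit=2, meets_guidelines=True),
]
for c in shortlist(ideas, top_n=2):
    print(f"{c.name}: {score(c):.2f}")
```

The idea that would score highest on paper is excluded because it violates the guidelines, which is exactly the role the guidelines are meant to play.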

3. Engendering digital trust

The final capability that can significantly foster digital trust is the ability to move prototypes into production, effectively manage a portfolio of ideas, and continually optimize initiatives. This approach not only drives innovation but also builds trust over time, a process that is essential for sustaining customer relationships in a digital world. Trust is not an immediate outcome but a gradual process that is shaped with every touchpoint across the customer lifecycle. Companies must actively manage and iterate on their roadmap of trust-building initiatives. Each interaction, whether through marketing, customer service, or product usage, affects the perception of trust, and feedback from these interactions should be carefully considered for continuous improvement.

The Iterative Nature of Trust

Trust is not a static concept; it evolves with every engagement and, as such, it is critical for companies to recognize that trust-building is highly iterative. What works at one stage in the customer journey may need to be adjusted as customer expectations, technological capabilities, and societal norms change. This means companies must stay agile, refining their trust strategies based on real-time data and feedback loops. Understanding what works—and what does not—is essential for avoiding unintended consequences that could undermine trust.

The Challenge of Measuring Trust

One of the major challenges businesses face is the lack of an industry-wide standard for measuring trust. While many different approaches and tools exist to monitor digital trust, there is no universally accepted framework that captures its complexity. As illustrated below, the landscape of metrics and methods to track trust-related concepts—such as user satisfaction, transparency, and fairness—is varied and constantly evolving.

Trust is influenced by a wide range of factors, including a company’s reputation, transparency, customer service, and the reliability of its products or services. Given this complexity, it is difficult, if not misleading, to rely on a single automated method to calculate a “trust score” that would be universally applicable across different industries or contexts. While Thick Data suggests that qualitative research—such as ethnographic studies or in-depth interviews—provides deeper insights into trust-building than quantitative metrics alone, some forms of quantification can still be useful.

A more targeted approach can yield useful scores if the scope of investigation is narrowed. For example, instead of attempting to measure general perceptions of a brand, companies can monitor reactions on social media platforms to identify trends in public sentiment. Many metrics used in social media and influencer marketing, such as Kred or the now-discontinued Klout score, quantify the influence of key individuals within networks. By identifying these influencers, brands can gain a sense of how information spreads and how public sentiment is shaped within certain communities.

Social Network Analysis (SNA) is a mathematical and visual approach that can be used to map relationships and influence flows within a network. SNA has been widely used to understand complex systems such as the spread of diseases or the flow of information across platforms like Facebook, X, and Instagram. According to Krebs (2006), SNA focuses on “the mapping and measuring of relationships and flows between people, groups, organizations, animals, computers, or other information/knowledge processing entities.” In this context, “nodes” refer to the individuals or groups, and “links” represent the relationships or information flows between them. SNA tools enable the identification of central nodes (influencers) who can play a pivotal role in shaping public opinion and trust.
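A minimal sketch of one SNA measure, degree centrality, shows how central nodes can be identified: each node’s number of direct links divided by the number of other nodes (n − 1). The network below is hypothetical. Degree centrality only counts direct connections; libraries such as NetworkX provide it (`networkx.degree_centrality`) alongside measures such as betweenness centrality, which better capture nodes that broker information between communities.

```python
from collections import defaultdict


def build_graph(edges: list[tuple[str, str]]) -> dict[str, set[str]]:
    """Undirected adjacency sets: each link connects two nodes in both directions."""
    graph: dict[str, set[str]] = defaultdict(set)
    for a, b in edges:
        graph[a].add(b)
        graph[b].add(a)
    return dict(graph)


def degree_centrality(graph: dict[str, set[str]]) -> dict[str, float]:
    """Share of all other nodes each node is directly linked to (0.0 to 1.0)."""
    n = len(graph)
    if n < 2:
        return {node: 0.0 for node in graph}
    return {node: len(neighbors) / (n - 1) for node, neighbors in graph.items()}


def top_influencers(graph: dict[str, set[str]], k: int = 3) -> list[str]:
    centrality = degree_centrality(graph)
    return sorted(centrality, key=centrality.get, reverse=True)[:k]


# Hypothetical follower/mention links between social media accounts
links = [
    ("ana", "ben"), ("ana", "cem"), ("ana", "dan"), ("ana", "eva"),
    ("ben", "cem"), ("dan", "eva"), ("eva", "fay"),
]
graph = build_graph(links)
print(degree_centrality(graph))
print(top_influencers(graph, k=2))  # "ana" has 4 of 5 possible links, "eva" has 3
```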

Social media tools like Brandwatch, a platform focused on social listening and sentiment analysis, and Famebit (acquired by Google), which specializes in influencer marketing, use SNA principles to help brands measure the effectiveness of their campaigns and identify key individuals whose trustworthiness can have a ripple effect throughout a network. However, while these scores and tools help identify influential voices, they still lack a comprehensive framework for understanding how trust is formed or eroded in the broader context.

Trust Seals and Rankings

When measuring trust shifts from individuals to brands, many metrics take the form of rankings or “trust seals.” These rankings, like those produced by Forbes or Ethisphere’s World’s Most Ethical Companies, are easy to communicate and distribute, both digitally and through traditional media. Many brands proudly display these rankings or seals to signal to consumers that they are trusted entities. However, while these measures can provide valuable insights, it’s important to question the methodologies used in their creation, as well as the independence of the organizations conducting the assessments. Trust seals are often subject to commercial incentives, which may affect their credibility.

Multi-Dimensional Trust Metrics: From Algorithms to Advocacy

Over the years, researchers and companies have evolved from relying on simple algorithms and rankings to developing more complex, multidimensional concepts for measuring trust. One such metric is the Brand Advocacy Quotient (BAQ), developed by Nielsen Online in 2009. This measure combines consumer survey data and social media buzz to gauge the degree to which consumers promote or erode a brand’s reputation. Similar tools are offered by companies like Young & Rubicam (Brand Equity Monitor) and Fjord (Love Score), which track consumer sentiments and advocacy behaviors. These indices are more sophisticated but also more costly to administer, as they rely on both quantitative and qualitative data.

While these advanced models can provide in-depth insights, they are not always practical for all businesses. For companies seeking a more straightforward, cost-effective approach, the Net Promoter Score (NPS) remains one of the most widely adopted and powerful proxies for digital trust. Introduced by Fred Reichheld in 2003, NPS asks a simple question: “On a scale of 0 to 10, how likely are you to recommend this company/brand to a friend or colleague?” The score is calculated by subtracting the percentage of detractors (those who score 0 to 6) from the percentage of promoters (those who score 9 or 10). This simple yet powerful metric provides an easily understandable, actionable gauge of customer loyalty and advocacy, two key drivers of trust.
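The NPS calculation fits in a few lines. Respondents scoring 7 or 8 (passives) count toward the total number of responses but toward neither group, so the result ranges from −100 to +100. The function name and the sample responses are illustrative.

```python
def net_promoter_score(scores: list[int]) -> float:
    """NPS = % promoters (9-10) minus % detractors (0-6), on a -100..+100 scale."""
    if not scores:
        raise ValueError("at least one response is required")
    if any(not 0 <= s <= 10 for s in scores):
        raise ValueError("scores must be between 0 and 10")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    # Passives (7-8) stay in the denominator but in neither count.
    return 100 * (promoters - detractors) / len(scores)


responses = [10, 9, 9, 8, 7, 6, 3, 10, 5, 9]  # hypothetical survey answers
print(net_promoter_score(responses))  # 5 promoters, 3 detractors, 10 responses -> 20.0
```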

Combining Multiple Metrics for a Holistic Approach

Given the complexity of trust and the various ways it can be cultivated or eroded, companies need to identify a set of relevant metrics and Key Performance Indicators (KPIs) that align with their specific business objectives. These metrics should capture both direct and indirect signals of trust. For example, while NPS is a powerful tool for measuring customer advocacy, brands may also benefit from integrating insights from more complex metrics like Brand Equity Monitor or Brandwatch to track broader consumer sentiment, competitive performance, and the effectiveness of specific trust-building initiatives.

The future of trust measurement lies in combining simple, actionable metrics like NPS with richer, more qualitative insights derived from sources like social media analysis, customer feedback, and behavioral economics.

Additionally, AI-driven sentiment analysis and behavioral tracking are becoming increasingly important for understanding how trust is built or destroyed in real time. For example, AI tools that analyze consumer sentiment through text mining can help track changes in public perception and allow brands to adjust their trust-building strategies accordingly. By understanding how users interact with digital touchpoints through behavioral tracking, brands can make data-driven decisions that enhance trust, engagement, and customer loyalty. However, behavioral data must be interpreted with care and used alongside other methods to gain a full understanding of how trust is formed and maintained in the digital ecosystem.

In the context of digital trust, behavioral tracking is particularly valuable because it allows companies to observe actual behavior rather than relying solely on self-reported data, which can be biased or incomplete. By understanding how users engage with digital touchpoints, companies can assess whether their actions, policies, and messaging meet the expectations necessary to build and maintain trust.

To effectively measure and monitor trust in the digital environment, a variety of behavioral tracking techniques can be employed, ranging from engagement and conversion analysis to advanced tools like predictive analytics and heatmaps:

User Engagement and Interaction Patterns

What it tracks:

Behavioral tracking can observe metrics such as click-through rates (CTR), time spent on site, scroll depth, navigation paths, and bounce rates. These interactions indicate whether users feel comfortable and engaged with a brand or website.

How it measures trust:

If users spend more time on a site, return frequently, or explore multiple pages, it can be interpreted as a sign that they trust the brand enough to engage deeply. A high bounce rate, on the other hand, might signal skepticism, a lack of trust, or frustration with the website or its content.
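The engagement signals listed above can be aggregated from session records. In this sketch, the `Session` fields and sample values are hypothetical, and a bounce is defined, as a simplifying assumption, as a single-page session; analytics tools define bounces differently (Google Analytics 4, for example, bases its bounce rate on sessions that were not engaged). Because a long visit to a help page can also signal frustration, the numbers are prompts for qualitative follow-up rather than trust scores.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Session:
    pages_viewed: int
    duration_seconds: float
    scroll_depth: float  # deepest scroll position reached, 0.0 to 1.0 of the page


def engagement_summary(sessions: list[Session]) -> dict[str, float]:
    """Aggregate simple engagement signals over a list of sessions."""
    if not sessions:
        raise ValueError("no sessions to summarize")
    bounces = sum(1 for s in sessions if s.pages_viewed <= 1)  # single-page sessions
    return {
        "bounce_rate": bounces / len(sessions),
        "avg_pages_per_session": mean(s.pages_viewed for s in sessions),
        "avg_duration_seconds": mean(s.duration_seconds for s in sessions),
        "avg_scroll_depth": mean(s.scroll_depth for s in sessions),
    }


sessions = [  # hypothetical sample
    Session(pages_viewed=1, duration_seconds=10, scroll_depth=0.2),
    Session(pages_viewed=4, duration_seconds=200, scroll_depth=0.9),
    Session(pages_viewed=3, duration_seconds=120, scroll_depth=0.7),
    Session(pages_viewed=1, duration_seconds=5, scroll_depth=0.1),
]
print(engagement_summary(sessions))
```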

Transaction and Conversion Rates

What it tracks:

Tracking users’ decision-making processes—whether they add items to their cart, proceed to checkout, or complete purchases—can provide important signals. Conversion events, such as sign-ups or purchases, reveal whether a user feels confident enough to take a desired action.

How it measures trust:

Low conversion rates or abandoned transactions might suggest a lack of trust, possibly related to concerns about security, pricing transparency, or product quality. On the other hand, high conversion rates and completed transactions indicate that users trust the platform enough to share personal data or make purchases.
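Conversion and abandonment rates can be read off a simple funnel. This sketch assumes hypothetical event names in `FUNNEL` and, for simplicity, only checks which steps each user reached, ignoring event order and timing.

```python
# Hypothetical funnel steps, in order.
FUNNEL = ["view_product", "add_to_cart", "begin_checkout", "purchase"]


def funnel_report(user_events: dict[str, set[str]]) -> dict:
    """Count users who reached each step (having also reached all earlier
    steps) and derive overall conversion and cart abandonment."""
    reached = []
    for i in range(len(FUNNEL)):
        required = set(FUNNEL[: i + 1])
        reached.append(sum(required <= events for events in user_events.values()))
    viewers, carts, purchases = reached[0], reached[1], reached[-1]
    return {
        "step_counts": dict(zip(FUNNEL, reached)),
        "conversion_rate": purchases / viewers if viewers else 0.0,
        # Share of users who added to cart but did not complete a purchase.
        "cart_abandonment_rate": 1 - purchases / carts if carts else 0.0,
    }
```

With four product viewers of whom three add to cart and only one buys, the report gives a conversion rate of 0.25 and a cart abandonment rate of about 0.67.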

User Feedback Loops and Sentiment Analysis

What it tracks:

Behavioral tracking tools can measure how users respond to surveys, prompts, and calls to action (such as asking for feedback on a product or service). Analyzing user responses through sentiment analysis or emotion detection can provide valuable insights.

How it measures trust:

Positive feedback, reviews, or repeated actions (like referring a friend) are strong indicators of trust. If users regularly give favorable ratings or respond positively to prompts (e.g., “How likely are you to recommend us?”), it suggests that they feel secure in their relationship with the brand. Negative feedback or a reluctance to engage with feedback requests may signal distrust or dissatisfaction.
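The “How likely are you to recommend us?” prompt is the question behind the Net Promoter Score (NPS). Under the standard NPS convention, respondents rating 9 or 10 on a 0 to 10 scale count as promoters, those rating 0 to 6 as detractors, and the score is the percentage of promoters minus the percentage of detractors, so it ranges from -100 to 100. A minimal sketch:

```python
def net_promoter_score(ratings: list[int]) -> float:
    """NPS = % promoters (9-10) minus % detractors (0-6); passives (7-8) only dilute."""
    if not ratings:
        raise ValueError("at least one rating is required")
    if any(not 0 <= r <= 10 for r in ratings):
        raise ValueError("ratings must be on the 0-10 scale")
    promoters = sum(r >= 9 for r in ratings)
    detractors = sum(r <= 6 for r in ratings)
    return 100 * (promoters - detractors) / len(ratings)
```

For instance, `net_promoter_score([10, 9, 8, 7, 6, 3, 10, 9, 9, 2])` returns `20.0`: five promoters and three detractors out of ten responses.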

Retention and Loyalty Metrics

What it tracks:

Behavioral tracking can follow users over time to track their retention rates, churn rates, and repeat visits. Long-term patterns, like returning users or members, are key indicators of trust.

How it measures trust:

High retention rates suggest that users trust the brand enough to continue engaging over time. Conversely, if users leave after an initial interaction or never return, it could indicate that trust was never fully established or was lost during the interaction. Loyalty programs can also help measure trust, as repeat engagement is often tied to users feeling valued and secure in their relationship with the brand.
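Retention and churn can be computed from per-period activity. This sketch assumes a hypothetical input that maps each user to the set of period indices (for example, months) in which they were active, and treats everyone active in period 0 as the cohort.

```python
def retention_curve(activity: dict[str, set[int]], periods: int) -> list[float]:
    """Share of the period-0 cohort still active in each period 0..periods-1."""
    cohort = [user for user, active in activity.items() if 0 in active]
    if not cohort:
        return [0.0] * periods
    return [sum(p in activity[user] for user in cohort) / len(cohort)
            for p in range(periods)]


def churn_rate(activity: dict[str, set[int]], period: int) -> float:
    """Share of users active in the previous period who are inactive in `period`."""
    previous = [user for user, active in activity.items() if period - 1 in active]
    if not previous:
        return 0.0
    return sum(period not in activity[user] for user in previous) / len(previous)
```

With `activity = {"a": {0, 1, 2}, "b": {0, 1}, "c": {0}, "d": {0, 2}, "e": {1, 2}}`, `retention_curve(activity, 3)` returns `[1.0, 0.5, 0.5]`; user `"e"` joined later and is therefore not part of the period-0 cohort.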

Authentication and Security Behavior

What it tracks:

Tracking user actions during login processes, password resets, multi-factor authentication (MFA) interactions, and security-related prompts helps gauge confidence in security measures.

How it measures trust:

If users frequently engage with security features (such as enabling MFA or resetting passwords), it may reflect trust in the brand’s commitment to keeping their data safe. However, a large number of failed login attempts or users bypassing security steps can indicate security concerns or lack of trust in the system’s safety features.
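The security signals described above can be summarised from login logs and account settings. In this sketch the input shapes and the `failure_threshold` of three failed attempts are hypothetical choices; such numbers need careful interpretation, since failed logins can also stem from forgotten passwords rather than distrust.

```python
from collections import Counter


def auth_signals(login_attempts: list[tuple[str, bool]],
                 mfa_enabled: set[str],
                 all_users: set[str],
                 failure_threshold: int = 3) -> dict:
    """Summarise MFA adoption and failed logins.

    login_attempts: (user_id, succeeded) pairs.
    """
    failures = Counter(user for user, ok in login_attempts if not ok)
    attempts = len(login_attempts)
    return {
        "mfa_adoption_rate": len(mfa_enabled & all_users) / len(all_users)
        if all_users else 0.0,
        "failed_login_share": sum(failures.values()) / attempts if attempts else 0.0,
        # Users whose failures reach the (hypothetical) threshold.
        "users_over_threshold": sorted(u for u, n in failures.items()
                                       if n >= failure_threshold),
    }
```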

Clickstream Analysis and User Journeys

What it tracks:

Clickstream analysis records the paths users take as they move through a website or application. This includes which pages or elements they interact with, where they click, and how they navigate through content or forms.

How it measures trust:

Trust can be inferred based on users’ willingness to explore deeper content, fill out forms, or engage with complex processes (like long registration forms). If a user immediately leaves a page or doesn’t follow through with intended actions, it could suggest that they lack trust in the platform, possibly due to concerns about privacy, relevance, or ease of use.
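Clickstream data can show where users abandon a multi-step process. The sketch below assumes hypothetical form step names in `FORM_STEPS` and, for each session that started the form, records the furthest step reached in order. A cluster of exits at a particular step, such as a consent screen, might point to a privacy concern worth investigating with other methods.

```python
from collections import Counter

# Hypothetical steps of a registration form, in order.
FORM_STEPS = ["form_start", "personal_details", "consent", "form_submit"]


def form_exit_points(clickstreams: list[list[str]]) -> dict[str, int]:
    """For each session that started the form, count the furthest form step
    it reached (steps must appear in order; other events are ignored)."""
    exits = Counter()
    for path in clickstreams:
        furthest = -1
        for event in path:
            nxt = furthest + 1
            if nxt < len(FORM_STEPS) and event == FORM_STEPS[nxt]:
                furthest = nxt
        if furthest >= 0:
            exits[FORM_STEPS[furthest]] += 1
    return dict(exits)
```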

Social Sharing and User Advocacy

What it tracks:

Behavioral tracking tools can measure whether users share content, recommend products, or participate in referral programs (e.g., “Refer a friend for a discount”).

How it measures trust:

Users who share content or actively recommend a brand to others are demonstrating high levels of trust. Brand advocacy is often a clear sign of trust, as individuals who share a product or service with their network are vouching for its credibility and quality.
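Advocacy can be tracked with a couple of ratios. This sketch assumes hypothetical inputs: the set of active users, the set of users who shared content, and per-user counts of referral invitations sent and converted.

```python
def advocacy_metrics(active_users: set[str],
                     sharers: set[str],
                     referrals_sent: dict[str, int],
                     referrals_converted: dict[str, int]) -> dict[str, float]:
    """Share rate among active users and overall referral conversion."""
    sent = sum(referrals_sent.values())
    converted = sum(referrals_converted.values())
    return {
        # Only count sharers who are also in the active-user base.
        "share_rate": len(sharers & active_users) / len(active_users)
        if active_users else 0.0,
        "referral_conversion": converted / sent if sent else 0.0,
    }
```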

Content Consumption Patterns

What it tracks:

Tools can track how users consume content on a website, such as video plays, article reads, and engagement with interactive content like quizzes, polls, or tutorials.

How it measures trust:

If users engage deeply with educational or informative content (e.g., reading blog posts, watching tutorial videos), it suggests that they trust the brand enough to invest time in learning more about its products, services, or values. A high level of engagement with “trust-building” content (e.g., transparency reports, user reviews, or security policies) can indicate that users are seeking reassurance and transparency, which fosters trust.
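Content consumption can be summarised per content type, for example to see how many users reach trust-building material and how much of it they actually consume. The mapping in `CONTENT_TYPE` and the completion measure (share of a video watched or of an article scrolled) are hypothetical assumptions in this sketch.

```python
from collections import defaultdict

# Hypothetical mapping of content items to types; unknown items count as "other".
CONTENT_TYPE = {
    "transparency_report": "trust",
    "security_policy": "trust",
    "user_reviews": "trust",
    "blog_post": "educational",
    "tutorial_video": "educational",
}


def consumption_by_type(views: list[tuple[str, str, float]]) -> dict[str, dict]:
    """views: (user_id, content_id, completion) with completion in [0, 1].
    Returns reach (distinct users) and mean completion per content type."""
    users = defaultdict(set)
    completions = defaultdict(list)
    for user, content, completion in views:
        ctype = CONTENT_TYPE.get(content, "other")
        users[ctype].add(user)
        completions[ctype].append(min(max(completion, 0.0), 1.0))  # clamp to [0, 1]
    return {
        ctype: {"reach": len(users[ctype]), "mean_completion": sum(c) / len(c)}
        for ctype, c in completions.items()
    }
```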

Advanced: Predictive Analytics and Machine Learning

How it works:

By leveraging historical behavioral data, machine learning models can predict which users are likely to trust a brand in the future based on their past behavior. For example, if a user repeatedly engages with trust-related content (like reading privacy policies or checking reviews), predictive models can flag them as a high-trust prospect.

How it measures trust:

By analyzing these patterns, businesses can proactively adjust their messaging, security features, or user experience to strengthen trust among users who may be on the verge of abandoning the brand or engaging in suspicious activity.
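The text does not specify a model, so the following is one plausible choice rather than a prescribed method: a logistic regression trained by batch gradient descent in plain Python. The features (privacy-policy views, review checks, return visits), the label (a hypothetical proxy such as a repeat purchase within 90 days, because trust itself is not directly observed), and the 0.5 flagging threshold are all assumptions.

```python
import math


def sigmoid(z: float) -> float:
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict_proba(w: list[float], x: list[float]) -> float:
    """w[0] is the bias; w[1:] are the feature weights."""
    return sigmoid(w[0] + sum(wj * xj for wj, xj in zip(w[1:], x)))


def train_logistic(X: list[list[float]], y: list[int],
                   lr: float = 0.1, epochs: int = 2000) -> list[float]:
    """Minimise mean log-loss with batch gradient descent."""
    w = [0.0] * (len(X[0]) + 1)
    n = len(X)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for xi, yi in zip(X, y):
            err = predict_proba(w, xi) - yi  # gradient of log-loss w.r.t. the logit
            grad[0] += err
            for j, xj in enumerate(xi):
                grad[j + 1] += err * xj
        w = [wj - lr * g / n for wj, g in zip(w, grad)]
    return w


# Hypothetical features per user: [privacy_policy_views, review_checks, return_visits]
X = [[3, 2, 5], [2, 3, 4], [4, 1, 6], [0, 0, 1], [0, 1, 0], [1, 0, 1]]
y = [1, 1, 1, 0, 0, 0]  # hypothetical label: repeat purchase within 90 days
weights = train_logistic(X, y)
HIGH_TRUST_THRESHOLD = 0.5  # hypothetical cut-off for flagging a prospect
print(predict_proba(weights, [3, 2, 4]))
```

A user whose predicted probability exceeds the threshold would be flagged as a high-trust prospect; in practice the model, features, and threshold would need validation on real, consented data.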

Advanced: Heatmaps and Visual Tracking

What it tracks:

Heatmaps show where users are clicking, scrolling, and engaging on a webpage. Visual tracking tools can capture user attention, such as whether they focus on security-related elements (e.g., a padlock icon in a payment form) or trust signals (e.g., badges or certifications).

How it measures trust:

If users consistently interact with or focus on areas that signal trust (such as security features or testimonials), it can suggest that they prioritize trust-related elements in their decision-making process.
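A click heatmap is essentially a two-dimensional histogram. This sketch bins integer click coordinates into square cells and measures what share of clicks lands in a rectangle around a trust signal such as a security badge. The cell size and region coordinates are hypothetical, and visual attention data of the kind the text also mentions would need different tooling.

```python
def click_heatmap(clicks: list[tuple[int, int]], page_w: int, page_h: int,
                  cell: int = 100) -> list[list[int]]:
    """Bin (x, y) pixel click positions into a grid of cell x cell squares."""
    cols = -(-page_w // cell)  # ceiling division
    rows = -(-page_h // cell)
    grid = [[0] * cols for _ in range(rows)]
    for x, y in clicks:
        if 0 <= x < page_w and 0 <= y < page_h:  # ignore out-of-page clicks
            grid[y // cell][x // cell] += 1
    return grid


def share_in_region(clicks: list[tuple[int, int]],
                    x0: int, y0: int, x1: int, y1: int) -> float:
    """Fraction of all clicks inside the rectangle [x0, x1) x [y0, y1)."""
    if not clicks:
        return 0.0
    inside = sum(x0 <= x < x1 and y0 <= y < y1 for x, y in clicks)
    return inside / len(clicks)
```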

While behavioral tracking provides valuable insights into user actions and interactions, behavioral data is not always conclusive as a measure of trust. Users may behave in ways influenced by factors unrelated to trust, such as marketing pressure, time constraints, or other external influences. Additionally, privacy concerns and data protection regulations (such as the GDPR) require businesses to balance behavioral tracking with transparent practices and informed consent.
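One way to combine behavioral tracking with informed consent is to gate data collection on a recorded consent decision. This is a minimal in-memory sketch built around a hypothetical `ConsentGatedTracker` class. Deleting a user's stored events on withdrawal is a conservative design choice made here, not a statement of what the GDPR requires in every case.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentGatedTracker:
    """Records behavioral events only for users who have opted in."""
    consented: set[str] = field(default_factory=set)
    events: list[tuple[str, str]] = field(default_factory=list)

    def grant(self, user_id: str) -> None:
        self.consented.add(user_id)

    def withdraw(self, user_id: str) -> None:
        self.consented.discard(user_id)
        # Design choice in this sketch: also drop events already stored.
        self.events = [e for e in self.events if e[0] != user_id]

    def track(self, user_id: str, event: str) -> bool:
        """Store the event and return True only if the user has consented."""
        if user_id not in self.consented:
            return False
        self.events.append((user_id, event))
        return True
```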

Furthermore, behavioral tracking should not be used in isolation but rather as part of a broader approach to understanding trust. Combining it with qualitative feedback (such as surveys or user interviews) provides a more comprehensive picture of why trust is being built or eroded.

Companies need to identify their own set of metrics and Key Performance Indicators (KPIs: measurable values that demonstrate how effectively a company is achieving key business objectives) in order to understand the impact of their initiatives and, ultimately, to engender trust. This can result in an approach that leverages both the advantages of the NPS and the richness of insights provided by a periodic measurement such as the Brand Equity Monitor.

While no single service or index can fully capture the trustworthiness of a brand, company, or AI model in every context, a combination of these tools can provide a robust framework for monitoring and measuring trust. For brands and companies, services like Trustpilot, RepTrak, NPS, and CSR Rankings are commonly used, while AI models benefit from tools like AI Explainability frameworks and Fairness Indicators. By leveraging a mix of these indexes and monitoring platforms, organizations can gain a clearer picture of their trust profile and improve their standing over time.

In the digital age, trust is central to success for online businesses and AI systems. Measuring trust effectively requires a multi-dimensional approach that combines self-reported perceptions, behavioral data, and more technical metrics like performance and security. Monitoring trust should be an ongoing process, integrating real-time feedback, behavioral patterns, and transparency initiatives.

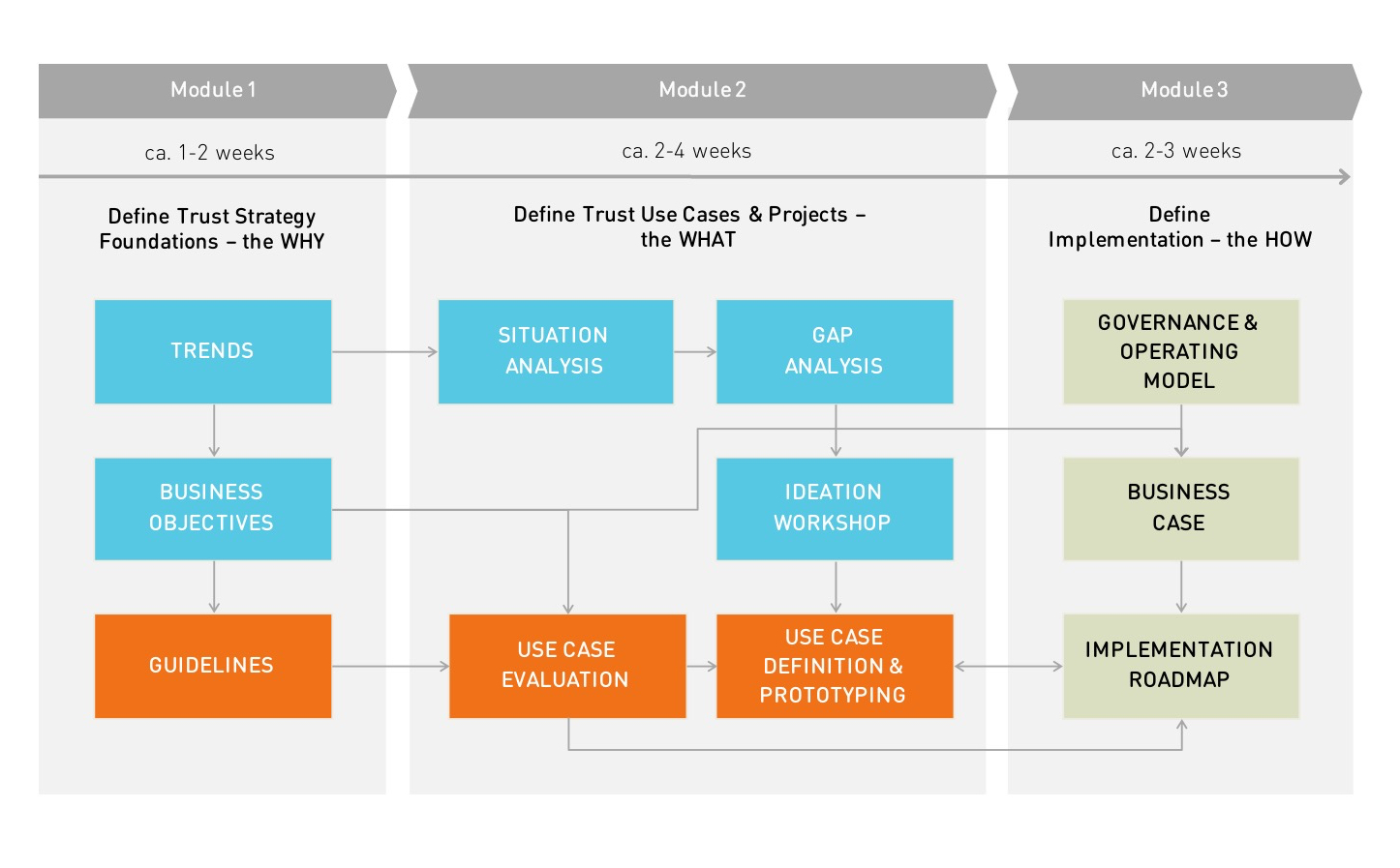

The three capabilities that are essential for dealing effectively with digital trust can be built using a partly sequential approach. The project plan shown in the figure outlines, for illustration, the individual activities required; its colors map to the capabilities discussed. The recommended approach to engendering digital trust first answers the question of “why” the organisation needs to act, then identifies “what” needs to be done, and finally defines “how” the organisation needs to operate. These activities can be clustered into three modules. Depending on the complexity of the case at hand, the nature of the initiatives identified, and the resources available, the approach requires a timeframe of roughly six weeks to three months.

It is in the nature of things that the digital economy relies on consumer data. Access to data will become more and more critical, and consumer trust is the key that will unlock it. The digital revolution, with its explosive growth in data and system complexity, is about to transform industries once again. To harness the potential of data, the digital economy and its data-driven business models must embark on a journey towards trust-based customer relationships.

It all starts with a better understanding of digital trust.

References Chapter 7:

Amit, R., & Zott, C. (2001). Value creation in e-business. Strategic Management Journal, 22(6–7), 493–520. https://doi.org/10.1002/smj.187

Bächler, M., et al. (2020). Smart Use Case Picking: A Hands-On Approach for a Successful Integration of Machine Learning in Production Processes. Procedia Manufacturing, 51, 1311–1318. https://doi.org/10.1016/j.promfg.2020.10.184

Bouhouras, A. S., Labridis, D. P., & Bakirtzis, A. G. (2009). Artificial neural network evaluation of high customer benefit installations for various types of distribution system customers. IET Generation, Transmission & Distribution, 3(6), 529-538.

Bowman, C., & Ambrosini, V. (2007). Firm value creation and levels of strategy. Management Decision, 45(3), 376–387. https://doi.org/10.1108/00251740710745028

Brandenburger, A. M., & Nalebuff, B. J. (1995). The right game: Use game theory to shape strategy. Harvard Business Review, 73(4), 57–71.

Brown, T., & Katz, B. (2019). Change by design: How design thinking transforms organizations and inspires innovation. HarperBusiness.

Chapman, P. (2000). CRISP-DM 1.0: Step-by-step data mining guide. Available: https://www.semanticscholar.org/paper/CRISP-DM-1.0%3A-Step-by-step-data-mining-guide-Chapman/54bad20bbc7938991bf34f86dde0babfbd2d5a72 [2025, January 31]

Gefen, D., Karahanna, E., & Straub, D. W. (2003). Trust and TAM in online shopping: An integrated model. MIS Quarterly, 27(1), 51–90. https://doi.org/10.2307/30036519

Lee, M. K., et al. (2022). WeBuildAI: Participatory framework for algorithmic governance. Proceedings of the ACM on Human-Computer Interaction, 3(CSCW), 1–35. https://doi.org/10.1145/3359283

McKnight, D. H., Choudhury, V., & Kacmar, C. (2002). Developing and validating trust measures for e-commerce: An integrative typology. Information Systems Research, 13(3), 334–359. https://doi.org/10.1287/isre.13.3.334.81

Morgan, R. M., & Hunt, S. D. (1994). The commitment-trust theory of relationship marketing. Journal of Marketing, 58(3), 20–38. https://doi.org/10.2307/1252308

Osterwalder, A., Pigneur, Y., Bernarda, G., & Smith, A. (2014). Value proposition design: How to create products and services customers want. Wiley.

Porter, M. E. (1985). Competitive advantage: Creating and sustaining superior performance. Free Press.

Schäfer, F., Mayr, A., Schwulera, E., & Franke, J. (2020). Smart Use Case Picking with DUCAR: A Hands-On Approach for a Successful Integration of Machine Learning in Production Processes. Procedia Manufacturing, 51, 1311–1318. https://doi.org/10.1016/j.promfg.2020.10.184

Sutherland, J. (2014). Scrum: The art of doing twice the work in half the time. Random House.